
Evolution of the CU–DU Split Architecture for AI-Native 6G RAN

1. Introduction

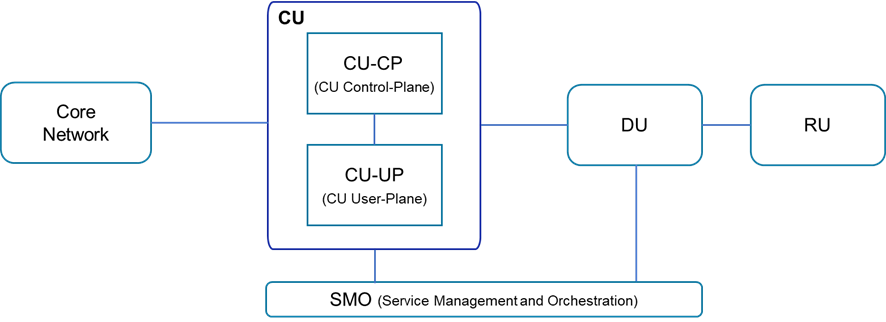

The evolution of the Radio Access Network (RAN) from a monolithic, hardware-dependent system to a flexible, cloud-native architecture represents a transformative shift in wireless communication, paving the way for the next generation of networks. With the advent of 5G, RAN has been split into distinct logical units: Radio Unit (RU), Distributed Unit (DU), and Central Unit (CU), as shown in Figure 1. This modular approach has revolutionized how operators design and manage their infrastructure, offering benefits such as improved scalability, cost efficiency, and support for advanced use cases. The modular architecture mitigates reliance on proprietary hardware by fostering interoperability among multi-vendor components, thereby catalyzing innovation. It also serves as a key enabler for virtualization, allowing operators to deploy software-based network functions across diverse hardware platforms, including generic hardware like commercial off-the-shelf (COTS) servers or cloud infrastructure. This flexibility promotes multi-vendor integration and reduces dependency on single-source solutions, facilitating the deployment of virtualized Open RAN.

Although the CU–DU split architecture has demonstrated substantial benefits in 5G networks [1], commercial deployments have predominantly used integrated RAN products. As the industry moves toward the 6G era, which is characterized by AI-native integration, enhanced edge computing, sustainability, and trustworthiness, the strategic value of the CU–DU split becomes increasingly important for unlocking new business opportunities. In 6G, this architectural paradigm is expected to be not only maintained but also expanded into an AI-native framework in which intelligence is embedded at every layer of the RAN. Building on the foundation of 5G, this evolution aims to introduce capabilities such as adaptive intelligence for network optimization, enhanced edge security, and a seamless migration path from 5G to 6G. This blog explores the CU–DU split architecture, examining its technical foundations, the benefits already observed in 5G, and the benefits newly emphasized for 6G.

Figure 1. Disaggregated RAN architecture for 5G

2. Benefits of CU–DU Split RAN observed in 5G

2.1 Flexible deployment

The CU–DU split RAN architecture provides the versatility to accommodate diverse deployment scenarios. For example, the DU can be positioned near the RU while the CU is deployed at a central site, or both the DU and the CU can be co-located close to the RU. Additionally, the user plane (UP) of the CU can be split into multiple CU-UP instances, which can be distributed across independent locations based on traffic characteristics. For instance, low-latency services or local breakout applications can use a CU-UP located near the DU, whereas enhanced Mobile Broadband (eMBB) services can keep their CU-UPs at a central site for high capacity. This architecture also supports a scalable design of base stations (BSs), ranging from small configurations (single DU) to larger setups (multiple DUs). Such flexibility supports various environments, including rural areas, dense urban settings, small cells, and mmWave deployments. Furthermore, the standardized CU–DU split architecture is also valuable for the flexible deployment of Non-Terrestrial Networks (NTN), such as deploying the DU on a satellite with the CU on the ground.
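The CU-UP placement logic described above can be sketched in a few lines. This is a minimal illustration only: the site names, latency figures, and service budgets are assumptions made for the example and do not come from any deployment or standard.

```python
# Hypothetical CU-UP sites with assumed transport latency to the DU (ms).
# Sites are listed most-centralized first, since central sites offer more capacity.
CU_UP_SITES = [("central_dc", 15.0), ("edge_dc", 5.0), ("cell_site", 1.0)]


def place_cu_up(latency_budget_ms):
    """Pick the most centralized CU-UP site that still meets the latency budget."""
    for site, latency_ms in CU_UP_SITES:
        if latency_ms <= latency_budget_ms:
            return site
    return None  # no site can meet the budget


# Illustrative service profiles; the budgets are assumptions, not standard values.
placements = {
    "embb_video": place_cu_up(50.0),
    "local_breakout": place_cu_up(8.0),
    "low_latency_control": place_cu_up(2.0),
}
print(placements)
```

Preferring the most centralized feasible site reflects the trade-off in the text: capacity-oriented traffic stays central, and only latency-sensitive traffic is pulled toward the DU.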

2.2 Scalability with CU Resource Pooling

One of the key advantages of the CU–DU split architecture is the enhanced scalability of computing resources at the CU. By deploying CUs in edge data centers, operators can pool and flexibly share compute resources across multiple cell sites, eliminating the need for static allocations at each individual site. During high-traffic periods, such as in congested areas or peak hours, additional CU compute resources can be dynamically provisioned from a centralized pool in the edge data center. This elasticity enables operators to manage per-site traffic fluctuations cost-effectively and removes the traditional inefficiency of over-provisioning Layer 3 (L3) resources at each BS site. These resources include Radio Resource Control (RRC), which manages connection setup and mobility, and Packet Data Convergence Protocol (PDCP), which handles data transfer, encryption, and header compression [2]. As 5G-Advanced and 6G services are expected to introduce diverse applications with dynamic and unpredictable workloads, such as Extended Reality (XR), AI inference, and sensing, the CU’s pooling capability becomes increasingly vital. It allows operators to absorb peak loads without per-site hardware upgrades, ensuring efficient resource utilization in 6G networks.
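The pooling gain can be made concrete with a small numerical sketch. The load figures below are invented for illustration. The point is only that static per-site provisioning must cover the sum of the individual peaks, whereas a shared pool needs to cover the peak of the aggregate load.

```python
# Hypothetical CU compute demand (arbitrary units) for three cell sites over
# four time slots. The values are illustrative only, not measurements.
site_load = {
    "business_district": [2, 8, 9, 3],
    "residential": [6, 2, 3, 9],
    "stadium": [1, 1, 10, 1],
}


def static_capacity(load_by_site):
    """Capacity needed when every site is provisioned for its own peak."""
    return sum(max(series) for series in load_by_site.values())


def pooled_capacity(load_by_site):
    """Capacity needed when all sites draw from one shared CU pool."""
    aggregate = [sum(slot) for slot in zip(*load_by_site.values())]
    return max(aggregate)


static = static_capacity(site_load)  # 9 + 9 + 10
pooled = pooled_capacity(site_load)  # max(9, 11, 22, 13)
print(static, pooled)  # 28 22
```

The saving shrinks when all sites peak at the same time, so the benefit in practice depends on how uncorrelated the per-site traffic patterns are.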

2.3 L3 operation benefits from centralized CU

2.3.1 Seamless Mobility Anchoring

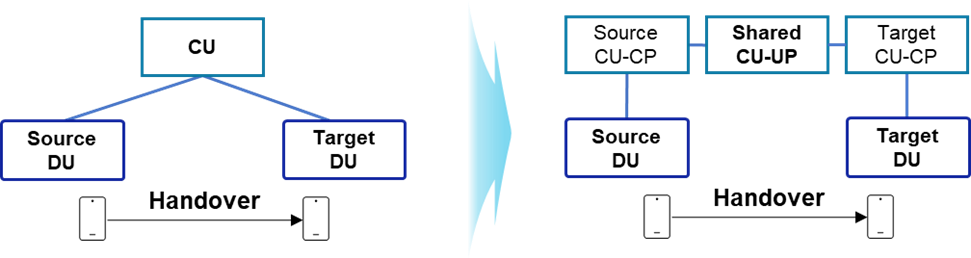

One benefit of the CU–DU split architecture, carried forward from 5G, is its ability to ensure stable and seamless mobility anchoring across distributed DUs. In integrated BS deployments, every inter-BS handover involves the UE’s anchor point change between BSs, which introduces signaling overhead, latency, and potential service interruptions. In contrast, in a split architecture, the CU serves as a unified control plane (CP) and UP anchor for multiple DUs within its domain. When a user traverses coverage areas served by different DUs under the same CU, the mobility anchor remains unchanged, as shown in Figure 2. This eliminates the need for context transfer and the full re-establishment of the connection during handovers, resulting in faster mobility procedures, reduced signaling complexity, and improved session continuity.

Figure 2. Seamless mobility anchoring by CU for 5G and 6G
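As a rough sketch of the difference, the snippet below counts how often the mobility anchor changes along a route. The route, site names, and CU names are hypothetical. In the integrated case the anchor is the serving BS, and in the split case it is the serving CU.

```python
from collections import namedtuple

# Each cell belongs to a cell site (one integrated BS or one DU) and to a CU domain.
Cell = namedtuple("Cell", ["name", "site", "cu"])

# Hypothetical route: three sites under cu1, then one site under cu2.
route = [
    Cell("c1", "site1", "cu1"),
    Cell("c2", "site2", "cu1"),
    Cell("c3", "site3", "cu1"),
    Cell("c4", "site4", "cu2"),
]


def anchor_changes(path, anchor_of):
    """Count transitions along the path where the mobility anchor differs."""
    anchors = [anchor_of(cell) for cell in path]
    return sum(1 for prev, cur in zip(anchors, anchors[1:]) if prev != cur)


integrated = anchor_changes(route, lambda cell: cell.site)  # anchor = serving BS
split = anchor_changes(route, lambda cell: cell.cu)  # anchor = serving CU
print(integrated, split)  # 3 1
```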

2.3.2 Multi-DU coordination

The CU–DU split architecture streamlines the central coordination of radio functions across multiple DUs, enabling more efficient and unified network management. In the split CU-DU architecture, each DU handles low-layer processing and local packet scheduling, while the CU retains visibility and control over the broader RAN domain. By aggregating cell- and UE-level information from multiple DUs, such as load distribution, mobility trends, and quality-of-service (QoS) dynamics, the CU can perform more effective Radio Resource Management (RRM) operations compared to per-site, per-DU optimization. Multi-DU coordination by the CU improves RRM features such as load balancing, handover optimization, and traffic steering.
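A minimal sketch of CU-level load balancing is shown below, assuming the CU already holds a load figure for every cell of its DUs. The cell names, load values, neighbour lists, and the 0.8 threshold are all hypothetical.

```python
def pick_offload_target(cell_load, neighbours, source, threshold=0.8):
    """Return the least-loaded neighbour if the source cell is overloaded, else None."""
    if cell_load[source] < threshold:
        return None  # source is not overloaded, nothing to do
    candidates = [c for c in neighbours[source] if cell_load[c] < threshold]
    return min(candidates, key=cell_load.get, default=None)


# Hypothetical load view aggregated at the CU from three DUs (fraction of capacity).
cell_load = {"du1/cellA": 0.92, "du2/cellB": 0.40, "du3/cellC": 0.65}
neighbours = {
    "du1/cellA": ["du2/cellB", "du3/cellC"],
    "du2/cellB": ["du1/cellA"],
    "du3/cellC": ["du1/cellA"],
}

target = pick_offload_target(cell_load, neighbours, "du1/cellA")
print(target)  # du2/cellB
```

A per-DU optimizer would see only its own cells. The decision above is possible because the CU compares load across DU boundaries.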

2.3.3 Optimized support of RRC Inactive mode

The RRC Inactive mode enables a device to release its active radio connection while its context is retained in the BS. Because the BS stores the device context, the device can quickly resume its connection without the full connection setup that is required when transitioning from RRC Idle to RRC Connected. This significantly reduces signaling overhead and connection latency while conserving device battery power. However, conventional integrated BSs face practical challenges in fully leveraging the benefits of RRC Inactive mode. Since the user context is anchored within a single integrated BS, the UE typically needs to report its location changes to the network when moving beyond the coverage boundary of that BS, leading to increased signaling overhead and energy consumption. Additionally, the limited capacity of a single integrated BS, compared to the flexible capacity of a split CU–DU, limits the number of UEs that can be maintained in the RRC Inactive state.

The CU–DU split architecture effectively resolves these limitations. In this design, the centralized CU serves as the anchor point for user context. When a UE in RRC Inactive mode moves across DU boundaries within the same CU domain, the context remains anchored at the CU, eliminating the need for location updates by the UE. Furthermore, the elastic resource scalability of the CU enables it to support a larger number of RRC Inactive UEs across the network.
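The anchoring behaviour can be pictured with a toy context store. This is an illustrative model only, with hypothetical class and method names. It does not reproduce the 3GPP suspend and resume procedures.

```python
class CuContextStore:
    """Toy model of a CU holding the contexts of RRC Inactive UEs."""

    def __init__(self, served_dus):
        self.served_dus = set(served_dus)
        self._contexts = {}

    def suspend(self, ue_id, context):
        """UE enters RRC Inactive: keep its context at the CU."""
        self._contexts[ue_id] = context

    def resume(self, ue_id, du):
        """UE resumes via a DU; succeeds locally only inside this CU domain."""
        if du not in self.served_dus:
            return None  # outside the domain: context must be fetched or rebuilt
        return self._contexts.pop(ue_id, None)


cu = CuContextStore(served_dus={"du1", "du2", "du3"})
cu.suspend("ue42", {"bearers": ["internet"]})
resumed = cu.resume("ue42", du="du3")  # the UE may have moved between DUs meanwhile
print(resumed)
```

Because the store is keyed by UE rather than by DU, moving between DUs of the same CU requires no update while the UE is inactive.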

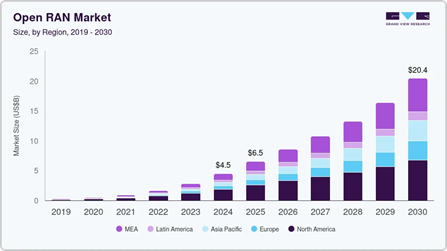

2.4 Multi-vendor support

The CU–DU split architecture with standardized F1/E1 interfaces [3] ensures interoperability among network components from multiple vendors. This allows operators to choose the most competitive components from different vendors, fostering innovation and reducing dependency on single-source solutions. Multi-vendor CU–DU deployments have been realized in several 5G commercial networks. For instance, Tier-1 operators in North America and Asia have deployed CUs and DUs from different network vendors, demonstrating seamless integration, performance stability, and efficient operational management at scale. These pioneering deployments have validated the maturity and effectiveness of the open interface approach. The Open RAN (O-RAN) initiative [4], which promotes open and interoperable radio access networks, enables operators to deploy multi-vendor solutions and drive innovation in 5G and beyond. The O-RAN market is forecast to grow substantially across various regions [5], as depicted in Figure 3. The CU–DU split architecture is well aligned with the O-RAN strategy, supporting interoperability, scalability, and flexibility in next-generation networks.

Figure 3. Open RAN market growth by region

2.5 Smooth migration from 5G to 6G

The CU–DU split architecture also facilitates the reuse of existing hardware and site infrastructure, providing a smooth evolutionary path for 6G upgrades. By leveraging standardized interfaces and modular designs, operators can enhance current infrastructure, minimizing disruption and reducing costs during the transition. This approach allows the deployment of advanced 6G capabilities, making the migration process more efficient and future-proof.

3. Benefits of CU–DU split newly emphasized in 6G

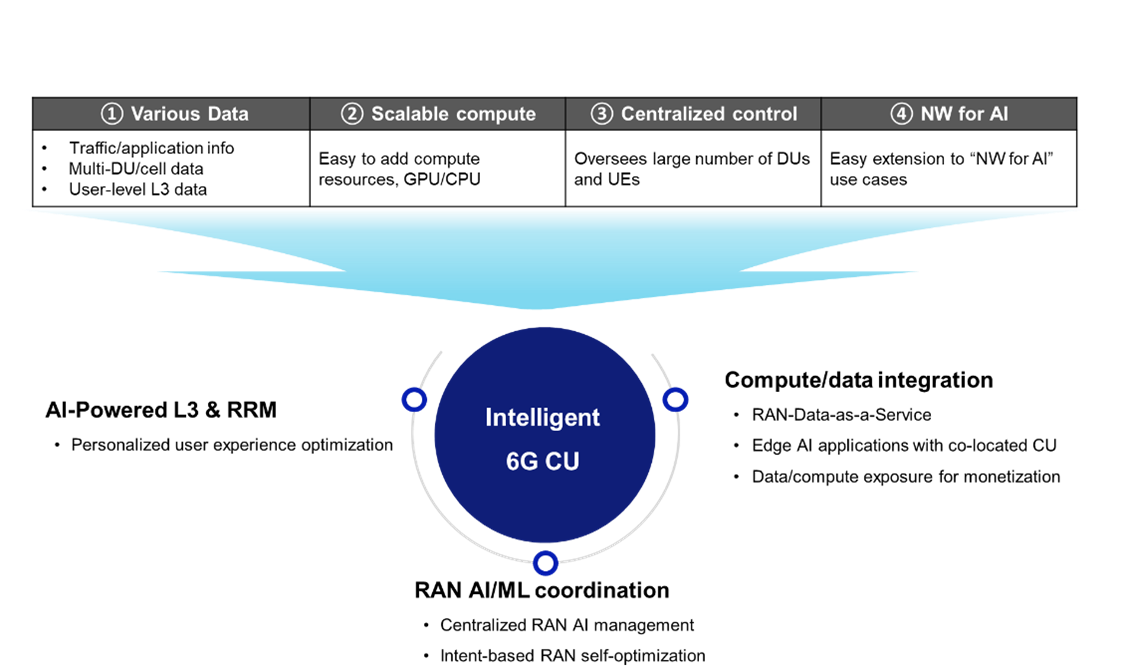

The CU–DU split architecture is strategically positioned for the evolution of 5G RAN to AI-native 6G RAN. In the 6G era, it is envisioned that the CU will evolve into an Intelligent CU, embedding AI/ML capabilities to enable large-scale RAN AI deployment and optimization, as shown in Figure 4. By leveraging its capabilities in data collection, scalable computing, network controllability, and flexibility, the Intelligent CU will underpin advanced AI-driven functionalities. The following sections highlight several key benefits of the CU–DU split architecture in 6G.

Figure 4. 6G CU for realizing AI-Native RAN

3.1 AI-native 6G RAN: AI-Powered L3 & Radio Resource Management (RRM)

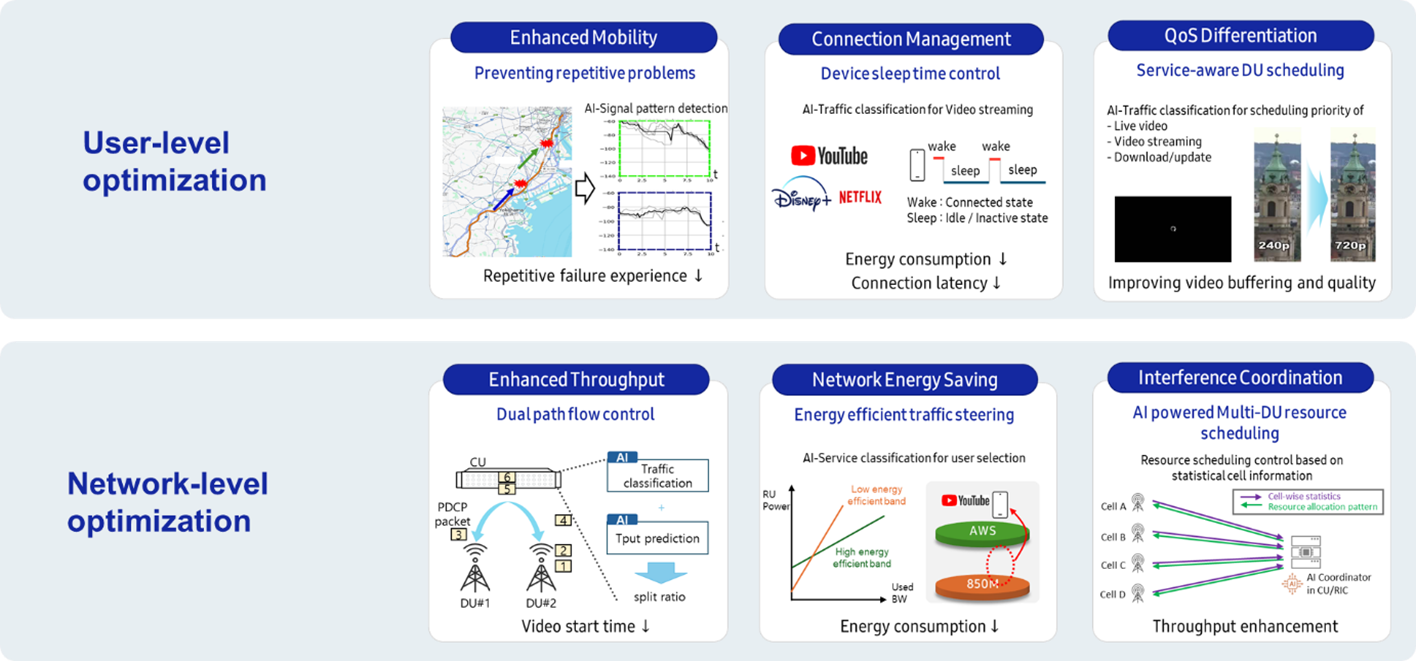

Many existing L3 features in the CU are being enhanced, or are expected to be enhanced, with AI/ML support. Examples of AI-L3 use cases for user-level and network-level optimization are shown in Figure 5.

Figure 5. AI-L3 use cases

The number of AI-L3 use cases is expected to grow rapidly, introducing increased computational demands for AI inference, training, and data analytics. The CU–DU split architecture provides an efficient framework to address this requirement by centralizing compute resources in a virtualized edge or regional data center. This approach accommodates the continuous increase in AI workloads without requiring modifications to field-distributed DUs. In an integrated BS architecture, each site needs to maintain its own compute resources for AI processing, leading to fragmented and redundant investment in additional servers, accelerators, and power provisioning. With the CU–DU split, operators can avoid these inefficiencies by simply expanding the centralized compute pool. This incremental investment model reduces over-provisioning risks and ensures cost-effective scalability.

3.2 AI-native 6G RAN: Adaptive RAN AI/ML coordination

3.2.1 Centralized RAN AI management

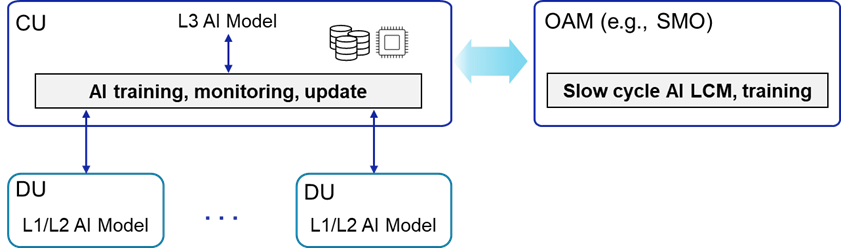

In conventional architectures, AI management functions, such as model training, monitoring, and deployment, are primarily executed in the Operations, Administration, and Maintenance (OAM) or Service Management and Orchestration (SMO) domain, outside the real-time RAN control loop. While this approach is suitable for many use cases, it lacks the dynamic adaptability needed to adjust AI models in response to evolving network conditions. In contrast, the CU–DU split architecture introduces a more agile solution by integrating components of the AI management pipeline within the CU.

The CU can host critical functions like model onboarding, inference orchestration, training, and validation, as shown in Figure 6. With direct access to real-time data from multiple DUs, including per-cell load, interference levels, mobility events, and user experience metrics, the CU can monitor the performance of operational AI models. This enables the initiation of retraining or fine-tuning immediately upon detection of environmental changes. This hybrid approach combines AI management within the CU and traditional OAM/SMO control, providing better agility and responsiveness compared to systems relying solely on external domains. It allows real-time AI model adjustments, ensuring continuous alignment with dynamic network requirements.

Figure 6. Centralized RAN AI Management in CU–DU Split Architecture
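One way to picture the monitoring step is a sliding-window check on prediction error that flags a model for retraining once it drifts from its baseline. The sketch below is an assumption-laden illustration: the class name, the window size, and the 20% tolerance are hypothetical choices, not part of any specified CU function.

```python
from collections import deque
from statistics import mean


class ModelMonitor:
    """Flags a deployed model for retraining when its recent error drifts upward."""

    def __init__(self, baseline_error, tolerance=0.2, window=3):
        self.baseline_error = baseline_error
        self.tolerance = tolerance
        self.errors = deque(maxlen=window)  # keeps only the most recent samples

    def observe(self, predicted, actual):
        """Record the absolute error of one prediction against live data."""
        self.errors.append(abs(predicted - actual))

    def needs_retraining(self):
        """True once a full window of errors exceeds baseline * (1 + tolerance)."""
        if len(self.errors) < self.errors.maxlen:
            return False
        return mean(self.errors) > self.baseline_error * (1 + self.tolerance)


monitor = ModelMonitor(baseline_error=0.10)
for predicted, actual in [(0.50, 0.55), (0.60, 0.52), (0.40, 0.45)]:
    monitor.observe(predicted, actual)  # cell-load predictions that track reality
before = monitor.needs_retraining()

for predicted, actual in [(0.50, 0.90), (0.40, 0.80), (0.30, 0.75)]:
    monitor.observe(predicted, actual)  # conditions changed, the model lags
after = monitor.needs_retraining()
print(before, after)  # False True
```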

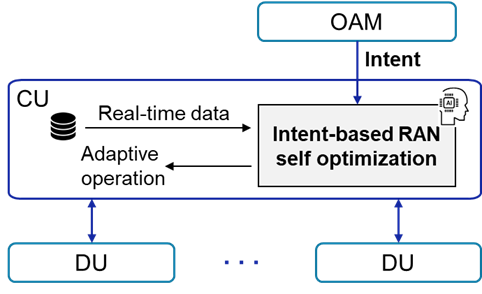

3.2.2 Intent-based RAN self-optimization

One of the defining paradigms of AI-native 6G RAN is the shift from rule-driven configuration to intent-based self-optimization, where the network autonomously fulfills high-level operational objectives provided by operators or orchestration functions. Instead of specifying individual parameters or control rules, the operator or service orchestrator provides an intent — such as maintaining a specific service quality within an energy budget — and the RAN automatically adjusts its behavior to achieve that goal, as shown in Figure 7.

The CU–DU split architecture provides a good foundation for realizing this concept at scale. The CU aggregates multi-DU, multi-cell, and user-level data across a wide service domain. By leveraging this rich data context, the CU can interpret intents, translate them into actionable RAN policies, and coordinate distributed actions across its connected DUs to realize the intent. Compared to the conventional integrated RAN architecture, the CU’s scalable compute resources and comprehensive observability enable continuous training, inference, and refinement of the intent-translation models. The CU evaluates real-time RAN performance, assesses deviations from target intents, and triggers self-optimization cycles accordingly.

Figure 7. Intent-based RAN self-optimization
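The evaluate-and-adjust cycle can be sketched as a single decision step. Everything here is hypothetical: the intent fields, the KPI names, and the action labels are invented for the example, and a real system would translate intents with learned models rather than fixed rules.

```python
from dataclasses import dataclass


@dataclass
class Intent:
    """High-level objective: keep service quality within an energy budget."""
    min_throughput_mbps: float
    max_power_w: float


def decide(intent, kpi):
    """Return the corrective action for one self-optimization cycle."""
    if kpi["throughput_mbps"] < intent.min_throughput_mbps:
        return "activate_carrier"  # quality target missed: add capacity first
    if kpi["power_w"] > intent.max_power_w:
        return "deactivate_carrier"  # quality is met but the energy budget is exceeded
    return "hold"  # intent fulfilled, no change needed


intent = Intent(min_throughput_mbps=100.0, max_power_w=500.0)
actions = [
    decide(intent, {"throughput_mbps": 80.0, "power_w": 300.0}),
    decide(intent, {"throughput_mbps": 150.0, "power_w": 650.0}),
    decide(intent, {"throughput_mbps": 120.0, "power_w": 450.0}),
]
print(actions)  # ['activate_carrier', 'deactivate_carrier', 'hold']
```

Checking quality before energy is a design choice of this sketch. An operator could equally express the opposite priority in the intent itself.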

3.3 New business and monetization opportunities with split RAN architecture

The AI-native CU–DU framework introduces new revenue models, positioning the RAN not only as a connectivity platform but also as an intelligent data and compute marketplace.

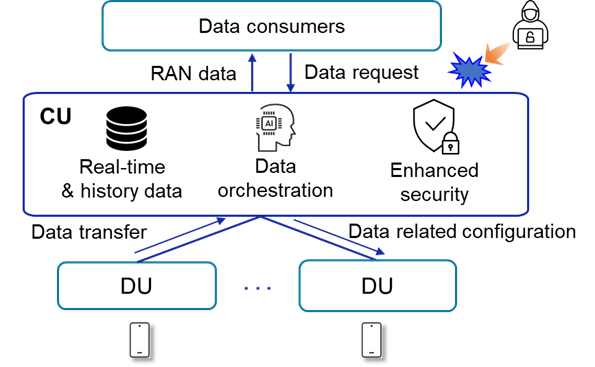

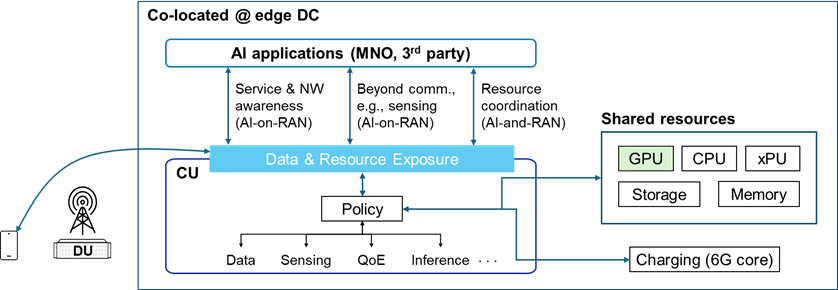

3.3.1 RAN-Data-as-a-Service: RAN data aggregation and exposure

In the 6G era, data is the fundamental enabler of intelligence across both communication and application domains. The efficiency and scalability of AI-native network operations depend on how effectively RAN data is collected, processed, and exposed for optimizing network operations or application service experiences. The CU–DU split architecture leverages the CU as the central aggregation and exposure point for RAN data, as shown in Figure 8. The CU naturally manages a vast amount of near real-time data from a wide range of UEs and networks, which cannot be easily collected in the OAM. This includes radio quality, interference measurements, traffic load statistics, and user-level context or status. Furthermore, data pre-processing — such as normalization, filtering, feature extraction, and anonymization — can improve data quality and readiness for immediate external exposure, fostering reliability and enabling value-added services for third-party partners.
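The pre-processing steps named above (filtering, normalization, and anonymization) might look like the following sketch. The record fields, the assumed RSRP range, and the salt are illustrative. Note that a salted hash is strictly pseudonymization, so a production system would need a stronger, policy-driven scheme.

```python
import hashlib


def preprocess(records, salt, rsrp_range=(-140.0, -44.0)):
    """Filter invalid samples, normalize RSRP to [0, 1], and pseudonymize UE IDs."""
    low, high = rsrp_range  # assumed plausible range in dBm for this example
    exposed = []
    for record in records:
        rsrp = record.get("rsrp_dbm")
        if rsrp is None or not low <= rsrp <= high:
            continue  # filtering: drop missing or implausible measurements
        pseudonym = hashlib.sha256((salt + record["ue_id"]).encode()).hexdigest()[:16]
        exposed.append({
            "ue": pseudonym,  # the raw identifier never leaves the CU
            "cell": record["cell"],
            "rsrp_norm": (rsrp - low) / (high - low),
        })
    return exposed


raw = [
    {"ue_id": "imsi-001", "cell": "cellA", "rsrp_dbm": -92.0},
    {"ue_id": "imsi-002", "cell": "cellA", "rsrp_dbm": None},
    {"ue_id": "imsi-003", "cell": "cellB", "rsrp_dbm": 12.0},
]
clean = preprocess(raw, salt="per-deployment-secret")
print(len(clean), clean[0]["rsrp_norm"])  # 1 0.5
```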

Security and data governance are also critical for data handling. Sensitive user and operational data can be safeguarded within a controlled edge data center environment with unified access control, encryption, and auditing mechanisms. Instead of managing separate security frameworks at each cell site, operators can apply consistent data-related security policies within the CU domain, which streamlines compliance and risk management.

Figure 8. CU as an anchor for RAN data aggregation and exposure

3.3.2 Edge AI Application with Co-located CU

When the CU is co-located with edge AI applications, as in the AI-on-network model, the synergy becomes even stronger. The tight physical and logical proximity between the CU and AI workloads enables low-latency, high-bandwidth data exchange, allowing AI applications to directly consume pre-processed RAN information for inference, analytics, or real-time decision making. For example, an edge video analytics service with an AI agent can instantly optimize its own operation based on live network conditions exposed by the physically co-located CU. Conversely, the AI application can send operational feedback back to the CU, facilitating joint optimization between network and service layers. This architecture is illustrated in Figure 9.

Figure 9. Co-located CU and edge application

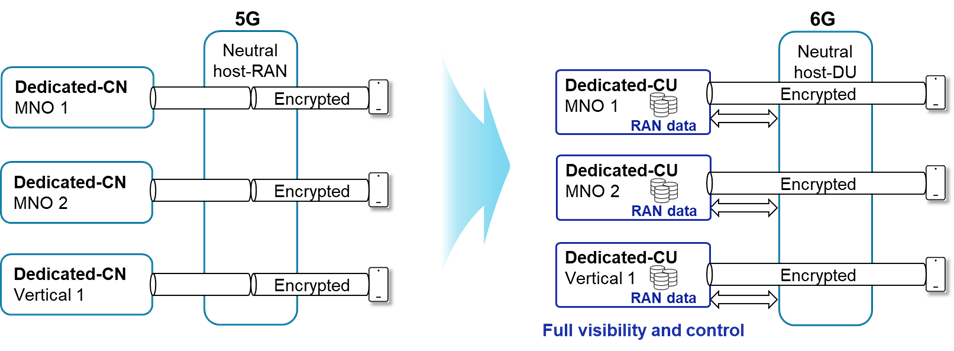

3.3.3 Flexible RAN Sharing as business enabler

The CU–DU split architecture can also provide business and operational flexibility, particularly in enabling diverse and adaptive forms of RAN sharing. As 6G networks expand to serve heterogeneous service providers, enterprises, and vertical industries, operators seek more granular and customizable sharing models that balance cost efficiency, security, autonomy, and data control. This functional disaggregation creates a natural framework for multi-operator cooperation at different architectural layers, enabling selective sharing based on strategic and commercial objectives.

In conventional monolithic RAN architectures, sharing is typically limited to coarse-grained models such as Multi-Operator Radio Access Network (MORAN) or Multi-Operator Core Network (MOCN), where control and ownership boundaries are rigid. This restricts operators’ ability to customize deployments according to business requirements or to maintain full control over customer data and operational behavior. By contrast, the CU–DU split enables fine-grained, sub-component-level sharing, allowing specific RAN functions, such as DUs, to be shared among multiple operators while others, such as CUs, remain operator-dedicated.

A neutral-host deployment scenario is illustrated in Figure 10. With this architecture, a third-party neutral-host infrastructure provider can deploy and operate the RUs and the nearby DUs, offering shared radio access resources to multiple mobile operators. Each participating operator maintains its own dedicated CU hosted in its private edge data center. This separation ensures that, while the neutral host’s RAN investments in the local area are efficiently shared, sensitive subscriber data and encryption keys remain confined to each operator’s CU domain, which supports compliance with privacy and regulatory requirements. Additionally, each operator retains full control over its CP policies, collected data, and other critical functions. The CU can thus act as the operator’s brain within a shared RAN environment, overseeing mobility management, QoS control, and data collection and exposure.

Figure 10. Flexible RAN sharing for neutral host scenario
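The separation of roles can be sketched as a routing step in the shared DU. This assumes the DU selects the operator CU from the PLMN chosen by the UE. The PLMN IDs, host names, and function names are placeholders for illustration and do not describe a specific product or interface implementation.

```python
# Hypothetical mapping from PLMN ID to each operator's dedicated CU endpoint.
# Both the PLMN IDs and the host names are placeholders.
OPERATOR_CU = {
    "00101": "cu.operator-a.example",
    "00102": "cu.operator-b.example",
}


def select_cu(selected_plmn):
    """Return the CU of the operator the UE selected, or None if it is not hosted."""
    return OPERATOR_CU.get(selected_plmn)


def forward_uplink(selected_plmn, pdcp_pdu):
    """Forward a ciphered PDCP PDU unchanged; the shared DU holds no PDCP keys."""
    cu = select_cu(selected_plmn)
    if cu is None:
        raise ValueError(f"PLMN {selected_plmn} is not served by this neutral host")
    return cu, pdcp_pdu


destination, payload = forward_uplink("00102", b"\x8a\x01\x7f")
print(destination)  # cu.operator-b.example
```

Because PDCP terminates in the CU, the payload passes through the shared DU still ciphered, which is what keeps subscriber data and keys inside each operator's own domain.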

4. Standardization and Ecosystem Alignment

The viability and ubiquity of 6G depend not only on technological innovation but also on industry-wide standardization and ecosystem alignment. Therefore, the study of the CU–DU split in 6G RAN architecture should be aligned with ongoing standardization and industry initiatives, including those of 3GPP [6], ETSI ISG ENI [7], the ITU-T Focus Group on Autonomous Networks [8], and ONAP [9].

5. Conclusion

The CU–DU split RAN architecture represents a transformative enabler for realizing the 6G vision of AI-native, sustainable, trustworthy, and monetizable networks. Its inherent flexibility and scalability have already revolutionized 5G deployments, paving the way for open interfaces and adaptive infrastructure. To fully unlock its potential in 6G, this architecture must be formally defined and enhanced within the 6G research and standardization framework. By doing so, the industry can ensure that the CU–DU split architecture not only sustains its current benefits but also drives the next evolutionary leap in wireless technology, enabling a more intelligent, interconnected, and future-ready global ecosystem.

References

[1] Samsung Electronics, “Virtualized Radio Access Network; Architecture, key technologies and benefits,” April 2021.

[2] 3GPP, “NR; NR and NG-RAN Overall description; Stage 2,” 3GPP TS 38.300, v19.1.0, Jan. 2026.

[3] 3GPP, “NG-RAN; Architecture description,” 3GPP TS 38.401, v19.1.0, Dec. 2025.

[4] O-RAN Alliance, “O-RAN Alliance,” [Online]. Available: https://www.o-ran.org

[5] Grand View Research, “Open RAN Market Summary,” [Online]. Available: https://www.grandviewresearch.com/industry-analysis/open-ran-market-report

[6] 3rd Generation Partnership Project (3GPP), “3GPP,” [Online]. Available: https://www.3gpp.org

[7] ETSI Industry Specification Group on Experiential Networked Intelligence (ISG ENI), “ETSI ISG ENI,” [Online]. Available: https://www.etsi.org/technologies/eni

[8] ITU-T Focus Group on Autonomous Networks (FG-AN), “Autonomous Networks,” International Telecommunication Union (ITU), [Online]. Available: https://www.itu.int/en/ITU-T/focusgroups/an

[9] Open Network Automation Platform (ONAP), “ONAP,” The Linux Foundation, [Online]. Available: https://www.onap.org