
[INTERSPEECH 2022 Series #6] Multi-stage Progressive Compression of Conformer Transducer for On-device Speech Recognition


The 23rd Annual Conference of the International Speech Communication Association (INTERSPEECH 2022) was held in Incheon, South Korea. Hosted by the International Speech Communication Association (ISCA), INTERSPEECH is the world's largest conference on research and technology of spoken language understanding and processing. This year, a total of 13 papers from Samsung Research’s R&D centers around the world were accepted at INTERSPEECH 2022. In this relay series, we introduce summaries of 6 of these research papers.

- Part 1: Human Sound Classification based on Feature Fusion Method with Air and Bone Conducted Signal
- Part 6: Multi-stage Progressive Compression of Conformer Transducer for On-device Speech Recognition

Background

Automatic Speech Recognition (ASR) systems on smart devices have traditionally relied on server-based models. This involves sending audio data to the server and receiving a text hypothesis once the server model completes decoding. While this approach lets us leverage more computing power, it incurs increased latency. An on-device model not only reduces latency but is also more cost-effective. A gradual shift towards End-to-End ASR systems has made on-device deployments more feasible, owing to a simpler architecture and better performance than traditional GMM-HMM based architectures [1-6]. The Recurrent Neural Network Transducer (RNN-T), a sequence-to-sequence streaming model for ASR with low latency and better performance, has seen wider adoption for on-device deployments. The RNN-T model comprises a transcription network, a prediction network, and a joint network. The transcription network encodes the input audio using Long Short-Term Memory (LSTM) cells. Recent works have demonstrated the effectiveness of Conformer blocks in efficiently learning local as well as global interactions. Hence, the transcription network of the RNN-T, which originally uses unidirectional LSTM blocks, can be replaced with conformer blocks, forming a conformer-transducer model.

Smaller models for on-device deployments cannot simply be trained directly, as their limited capacity results in a performance gap compared to larger models. Model compression techniques have been widely used to obtain smaller models with minimal degradation in performance compared to larger models. Knowledge distillation (KD) is a popular model compression technique that has recently been successful across different domains such as natural language processing, computer vision, and speech recognition. In this technique, knowledge is distilled from a larger model (teacher) to a smaller model (student). In our earlier work [7], KD was used successfully to achieve a high compression rate with an RNN-T based two-pass ASR model.

Using a much larger teacher model to distil a smaller target student model can be one avenue to improve the performance of a student, leveraging the rich knowledge learned by the teacher. However, our experimental observations and recent works suggest that a larger gap in size between teacher and student tends to impair the student's performance, even though the larger teacher performs significantly better than a smaller teacher. To bridge this performance gap, we propose multi-stage progressive compression for the conformer-transducer model. In Stage 1, we take a large teacher model and compress it using KD at a rate that does not degrade performance significantly. In the next stage, the student model obtained in Stage 1 serves as the teacher model, and we distil a new, smaller student model from it. This process is repeated until a student model of the desired size is obtained. The final student model can then be used for on-device deployment. In this blog post, we introduce our work (INTERSPEECH 2022), which uses a multi-stage progressive approach to compress the conformer transducer model using KD.

Multi-stage Knowledge Distillation for Conformer Transducer

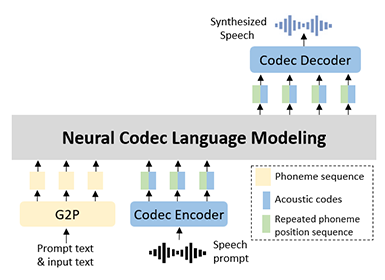

The conformer block includes a feed-forward module, followed by a convolutional module, a multi-headed self-attention module, and finally another feed-forward module. With this architecture, the attention module helps in learning long-range global interactions, while the convolutional module exploits local features. Mathematically, we can represent the conformer block i with input xi and output yi as follows:
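A sketch of these equations, assuming the half-step residual feed-forward modules and the final layer normalization (LayerNorm) of the standard Conformer formulation; these details are an assumption, not stated in the text. The module order follows the description above (the original Conformer paper places self-attention before convolution), and the intermediate symbols are hypothetical names introduced here:

```latex
\begin{aligned}
\tilde{x}_i &= x_i + \tfrac{1}{2}\,\mathrm{FF}(x_i) \\
x'_i &= \tilde{x}_i + \mathrm{Conv}(\tilde{x}_i) \\
x''_i &= x'_i + \mathrm{MA}(x'_i) \\
y_i &= \mathrm{LayerNorm}\bigl(x''_i + \tfrac{1}{2}\,\mathrm{FF}(x''_i)\bigr)
\end{aligned}
```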

where FF refers to the feed-forward module, Conv refers to the convolutional module, and MA refers to the multi-headed self-attention module. The outputs of the conformer blocks are the higher-level representations that are fed to the joint network of the transducer.

The RNN-Transducer, a streaming sequence-to-sequence architecture, includes a transcription network, a prediction network, and a joint network. The transcription network takes audio as its input. The prediction network, which is autoregressive, takes the previous output as its input. Both produce higher-level representations that are fed to the joint network (a feed-forward network) to predict the posterior probability of the current token. In this work, we use conformer blocks instead of LSTMs as the transcription network.

Knowledge distillation aims to transfer the rich information that a large model learns into a smaller model without significant performance degradation. It uses a teacher-student architecture in which the output probability distribution (soft targets) represents the knowledge of the teacher model. To enable a student to make use of this, we minimize the KL-Divergence between the soft targets of the teacher and those of the student. In our case, the KL-Divergence between the output of the joint network of the teacher and that of the student is minimized. If k represents the output label and (t, u) is the lattice node, the KL-Divergence loss can be represented as follows:
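One common way to write this loss, assuming $P^{T}(k \mid t, u)$ and $P^{S}(k \mid t, u)$ (notation introduced here for illustration) denote the teacher's and the student's joint-network output probabilities for label k at lattice node (t, u):

```latex
\mathcal{L}_{\mathrm{KD}} = \sum_{(t,u)} \sum_{k} P^{T}(k \mid t,u)\,\ln\frac{P^{T}(k \mid t,u)}{P^{S}(k \mid t,u)}
```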

Let α be the distillation loss weight. Then, the total loss for training a conformer transducer student model from a conformer transducer teacher model is given as follows:
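A minimal pure-Python sketch of this combination, assuming the common additive form total = transducer loss + α · distillation loss (the exact form is an assumption here, as are all function and variable names). The transducer loss is passed in as a precomputed number, and the KL term sums over all lattice nodes (t, u) and labels k:

```python
import math

def softmax(logits, temperature=1.0):
    """Numerically stable softmax over one list of label logits."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def kd_loss(teacher_lattice, student_lattice, temperature=1.0):
    """KL(teacher || student) summed over lattice nodes (t, u) and labels k.

    Each lattice is indexed as lattice[t][u] and holds a list of K logits,
    standing in for the joint-network output at that node.
    """
    loss = 0.0
    for teacher_row, student_row in zip(teacher_lattice, student_lattice):
        for teacher_logits, student_logits in zip(teacher_row, student_row):
            p_teacher = softmax(teacher_logits, temperature)
            p_student = softmax(student_logits, temperature)
            loss += sum(
                pt * math.log(pt / ps)
                for pt, ps in zip(p_teacher, p_student)
                if pt > 0.0
            )
    return loss

def total_loss(transducer_loss, teacher_lattice, student_lattice, alpha=0.02):
    """Assumed additive combination: transducer loss + alpha * KD loss."""
    return transducer_loss + alpha * kd_loss(teacher_lattice, student_lattice)

# Toy lattice with T=2 frames, U=2 label positions, K=3 output labels.
teacher = [[[2.0, 0.5, -1.0], [0.1, 0.2, 0.3]],
           [[1.0, 1.0, 1.0], [-0.5, 2.5, 0.0]]]
student = [[[1.0, 0.0, 0.0], [0.3, 0.2, 0.1]],
           [[0.5, 1.5, 1.0], [0.0, 2.0, 0.0]]]

identical = kd_loss(teacher, teacher)   # zero when the student matches the teacher
different = kd_loss(teacher, student)   # positive when the distributions differ
combined = total_loss(10.0, teacher, student, alpha=0.02)
```

The default `alpha=0.02` and `temperature=1.0` mirror the values reported in the Experiments section; the transducer loss value of 10.0 is a placeholder.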

The use of a larger and better-performing teacher model should be beneficial. Yet existing works [8] and our own experimental observations indicate a sharp degradation once the compression rate increases beyond a limit. It is likely that the complex relations learned by the larger teacher model are too difficult for a smaller student model with low capacity to learn. As a model compressed using KD outperforms a model of the same size trained from scratch, it is desirable to use a KD-compressed model. Hence, we compress a large streaming teacher model using knowledge distillation in multiple stages to attain a better-performing streaming student model. Figure 1 gives a schematic representation of the proposed solution. In the first stage, we compress the teacher model at a compression rate that does not hurt its performance beyond a tolerable limit. This yields an intermediate, high-performing student model that has the potential to be compressed further. In the next stage, we compress the student model from the previous stage (which now serves as the teacher) to attain a smaller student model. This process can be repeated progressively for a number of stages until the required model size is achieved. The intuition is that progressive compression preserves the complex interactions learned by the larger teacher, whereas direct compression does not allow the student to learn these interactions.

Figure 1. Multi-stage progressive compression of the conformer transducer model. The student model distilled from the teacher in the first stage is used as the teacher model for the next stage. This cascaded approach can be continued for N stages.
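The staged procedure can be sketched in Python as below. `distil` is a hypothetical stand-in for a full KD training run (here it only records the resulting model size), and the `Model` class and names are illustrative. The stage rates reuse the per-stage compression figures reported for experiment 1 in the Experiments section:

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Model:
    name: str
    params_m: float                     # size in millions of parameters
    teacher: Optional["Model"] = None   # model this one was distilled from

def distil(teacher: Model, compression_rate: float, name: str) -> Model:
    """Hypothetical placeholder for one KD training run.

    A real implementation would train a student of the target size against
    the teacher's soft targets; this stub only tracks the resulting size.
    """
    return Model(name, teacher.params_m * (1.0 - compression_rate), teacher)

def progressive_compress(teacher: Model, stage_rates):
    """Run KD stage by stage; each stage's student is the next stage's teacher."""
    students = []
    for stage, rate in enumerate(stage_rates, start=1):
        student = distil(teacher, rate, name=f"stage{stage}_student")
        students.append(student)
        teacher = student               # hand the student on as the next teacher
    return students

t1 = Model("T1", 128.0)
stages = progressive_compress(t1, [0.38, 0.23, 0.25])
final = stages[-1]
```

Each per-stage rate stays moderate, while the chain as a whole reaches a much smaller model than any single stage would.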

Experiments

The dataset used for this work is the standard LibriSpeech dataset, which contains 960 hours of training data. Models are evaluated on the test-clean and test-other sets. The features are 40-dimensional Mel Frequency Cepstral Coefficients (MFCCs), computed from a 25 ms window with a 10 ms shift and passed as input to the transcription network. The target vocabulary consists of 10026 Byte Pair Encoding (BPE) units. We use the SpecAugment technique for data augmentation during training. A beam size of 8 is used for decoding.

The specifications of each teacher and student model can be found in Table 1. The other attributes, discussed next, remain the same across all models. The entire training pipeline is developed using TensorFlow 2. A dropout of 0.1 is applied after every layer except the first. Max-pooling with a factor of 2 is applied in the first three layers (an overall factor of 8), which helps shorten the training time and latency of the model. To reduce the number of parameters and regularize our model, the same neural network serves as the joint network and as the embedding layer in the prediction network. We use an initial learning rate of 0.0001 and apply a decay function as training progresses. For knowledge distillation, our preliminary experiments show that a distillation loss weight of 0.02 gives the best results. For all our experiments, a temperature of 1.0 has been used for both the teacher and the student models.
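To make the framing and pooling arithmetic concrete, here is a small sketch. The function names are illustrative, and the frame count assumes no padding at the utterance edges and floor division at each pooling layer, neither of which the text specifies. With a 10 ms shift, three max-pooling layers of factor 2 reduce the frame rate by 8, so each encoder output frame corresponds to an 80 ms step:

```python
def num_frames(duration_ms, window_ms=25, shift_ms=10):
    """Number of full analysis windows that fit in the signal (no padding assumed)."""
    if duration_ms < window_ms:
        return 0
    return 1 + (duration_ms - window_ms) // shift_ms

def pooled_length(frames, pool_size=2, num_pool_layers=3):
    """Sequence length after repeated max-pooling (floor division at each layer)."""
    for _ in range(num_pool_layers):
        frames //= pool_size
    return frames

feature_frames = num_frames(10_000)             # a 10-second utterance
encoder_frames = pooled_length(feature_frames)  # length seen after the pooling layers
effective_shift_ms = 10 * 2 ** 3                # frame step after an overall factor of 8
```

Under these assumptions, a 10-second utterance yields 998 feature frames and 124 frames after pooling.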

Table 1. Multi-stage progressive compression experiment results, including the number of model parameters in millions (rounded to a whole number), the compression (Comp) percentage (rounded to a whole number), and WERs and SERs for the LibriSpeech test-clean and test-other sets. In experiment 1, we compress a 128M-parameter teacher model to a 46M-parameter student model in three stages. In experiment 2, we compress a 128M-parameter teacher model to a 24M-parameter student model in two stages.

We denote a teacher model with T and a student model with S. For a detailed comparison, we have also trained baseline models B, which have the same configuration as the student models but are trained from scratch. Thus, T and B are models trained without knowledge distillation. A subscript distinguishes different models of the same type. Table 1 lists the experiments performed, the model sizes in millions of parameters (M), the compression percentages (%), and the evaluation metrics: Word Error Rate (WER) and Sentence Error Rate (SER). The notation Ti = Sj indicates that Sj now serves as Ti, i.e. the two are the same model.

Table 1 and Figure 2 highlight the results of the multi-stage progressive compression approach, for which we perform two sets of experiments. In experiment 1, we progressively compress a 128M-parameter teacher model to a 46M-parameter student model in three stages. The 128M teacher model has 16 encoder layers with 512 dimensions, 4 attention heads, and 2 decoder layers with 1024 dimensions. In each stage, we keep the compression rate below 50% relative to the size of that stage's teacher model. In Stage 1, we obtain the student model S1 (distilled from T1), which has a better WER than the teacher while being 38% smaller. For comparison, we also train a baseline model B1 (same configuration, but trained from scratch without KD). As expected, B1 is worse than the student model S1.

Figure 2. Results for multi-stage KD compression of conformer transducer, Experiment 1 (compression rate of under 50% in each stage), on test-clean / test-other sets. Ti, Sj, and Bk represent teacher, student, and baseline models respectively.

In Stage 2 of multi-stage progressive KD, the S1 model is used as the teacher model T2. Here too, we observe that our student model S3 has a WER almost the same as that of the teacher while being 23% smaller than T2. Again, student model S3 clearly outperforms both B2 and S2 (distilled from T1). It is worth noting that, compared to T1, our student model S3 trained through the proposed progressive KD has been compressed by 52% with no loss in WER performance.

For Stage 3, the S3 model acts as the teacher model T3. We went a step further and compressed the model by 25%, which brings the model size to 46M parameters. This time we observe 11% and 8.5% relative degradation in WER on test-clean and test-other respectively. This minor degradation in WER is reasonable given the high compression rate of 64% with respect to the original teacher model T1. Compared to the baseline B3, the students S4 and S5 again perform consistently better. That S5 performs better than S4 shows that the student model obtained using our multi-stage progressive compression technique produces the best results. Compared to the original teacher model T1, we observe minor relative degradations of 10% and 7.5% in WER on the test-clean and test-other datasets, while achieving a high compression rate of 64%.

To experiment with still higher compression rates (beyond 55%), we performed experiment 2, which uses two stages. The results of this set of experiments are shown in the bottom row of Table 1 and in Figure 3. For Stage 1, we use the same 128M-parameter teacher T1. We distil a student model S6 from T1 with a compression rate of 55% and observe a minor relative degradation of approximately 4% and 3% in WER on the test-clean and test-other datasets respectively. We also observe that the performance of the baseline B4 is significantly worse.

For Stage 2, we compress the teacher model T4 (i.e. S6) by 58% to obtain a model with only 24M parameters. While S7 performs better than B5, it is marginally worse than S8. We observe a relative degradation in WER of about 24% on both test-clean and test-other. This degradation is expected given the high compression rate of 81% with respect to T1.
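The cumulative figures follow from multiplying the fractions of parameters retained at each stage. A short check, using the rounded per-stage rates quoted above (the helper and variable names are illustrative):

```python
def cumulative_compression(stage_rates):
    """Overall compression after applying each per-stage rate in sequence."""
    remaining = 1.0
    for rate in stage_rates:
        remaining *= 1.0 - rate     # fraction of parameters kept by this stage
    return 1.0 - remaining

teacher_params_m = 128

exp1_two_stages = cumulative_compression([0.38, 0.23])          # S3 relative to T1
exp1_three_stages = cumulative_compression([0.38, 0.23, 0.25])  # final student, experiment 1
exp2_two_stages = cumulative_compression([0.55, 0.58])          # final student, experiment 2

exp1_final_params_m = teacher_params_m * (1 - exp1_three_stages)
exp2_final_params_m = teacher_params_m * (1 - exp2_two_stages)
```

These evaluate to roughly 52%, 64% and 81% overall compression, i.e. about 46M and 24M parameters, in line with the figures reported in the text.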

Table 2. Higher compression rates on the 128M-parameter teacher model using single-stage knowledge distillation.

Figure 3. Results for multi-stage KD compression of conformer transducer, Experiment 2 (compression rate of over 50% in each stage), on test-clean / test-other sets. Ti, Sj, and Bk represent teacher, student, and baseline models respectively.

Conclusion

In this work, we propose a multi-stage progressive approach to compress a conformer transducer model using knowledge distillation. We start with a 128M-parameter streaming teacher model and distil a smaller streaming model without any degradation in performance. In each subsequent stage, the student model from the previous stage serves as the teacher for distilling a still smaller model. Using this cascaded approach, we obtain two models: a 46M-parameter model with a compression of 64% in three stages, and a 24M-parameter model with a compression of 81% in two stages. The former shows only a minor degradation, with relative WER increases of 10% and 7.5% on LibriSpeech’s test-clean and test-other datasets respectively. These compression rates are sufficient for mid-range mobile devices. Further, we also demonstrate the effects of single-stage compression of a large teacher and share our insights.

Link to the paper

https://www.isca-speech.org/archive/pdfs/interspeech_2022/rathod22_interspeech.pdf

References

[1] Y. He, T. N. Sainath, R. Prabhavalkar, I. McGraw, R. Alvarez, D. Zhao, D. Rybach, A. Kannan, Y. Wu, R. Pang et al., “Streaming end-to-end speech recognition for mobile devices,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2019, pp. 6381–6385.

[2] T. N. Sainath, Y. He, B. Li, A. Narayanan, R. Pang, A. Bruguier, S.-y. Chang, W. Li, R. Alvarez, Z. Chen et al., “A streaming on-device end-to-end model surpassing server-side conventional model quality and latency,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2020, pp. 6059–6063.

[3] K. Kim, K. Lee, D. Gowda, J. Park, S. Kim, S. Jin, Y.-Y. Lee, J. Yeo, D. Kim, S. Jung et al., “Attention based on-device streaming speech recognition with large speech corpus,” in IEEE Automatic Speech Recognition and Understanding Workshop (ASRU), 2019, pp. 956–963.

[4] A. Garg, D. Gowda, A. Kumar, K. Kim, M. Kumar, and C. Kim, “Improved Multi-Stage Training of Online Attention based Encoder-Decoder Models,” in IEEE Automatic Speech Recognition and Understanding Workshop (ASRU), 2019, pp. 70–77.

[5] C. Kim, S. Kim, K. Kim, M. Kumar, J. Kim, K. Lee, C. Han, A. Garg, E. Kim, M. Shin, S. Singh, L. Heck, and D. Gowda, “End-to-end training of a large vocabulary end-to-end speech recognition system,” in IEEE Automatic Speech Recognition and Understanding Workshop (ASRU), 2019, pp. 562–569.

[6] A. Garg, G. Vadisetti, D. Gowda, S. Jin, A. Jayasimha, Y. Han, J. Kim, J. Park, K. Kim, S. Kim, Y. Lee, K. Min, and C. Kim, “Streaming on-device end-to-end ASR system for privacy-sensitive voicetyping,” in Interspeech, 2020, pp. 3371–3375.

[7] N. Dawalatabad, T. Vatsal, A. Gupta, S. Kim, S. Singh, D. Gowda, and C. Kim, “Two-Pass End-to-End ASR Model Compression,” in IEEE Automatic Speech Recognition and Understanding Workshop (ASRU), 2021, pp. 403–410.

[8] S. I. Mirzadeh, M. Farajtabar, A. Li, N. Levine, A. Matsukawa, and H. Ghasemzadeh, “Improved knowledge distillation via teacher assistant,” in Proceedings of the AAAI Conference on Artificial Intelligence, vol. 34, no. 04, 2020, pp. 5191–5198.