
[INTERSPEECH 2022 Series #3] Bunched LPCNet2: Efficient Neural Vocoders Covering Devices from Cloud to Edge


The 23rd Annual Conference of the International Speech Communication Association (INTERSPEECH 2022) was held in Incheon, South Korea. Hosted by the International Speech Communication Association (ISCA), INTERSPEECH is the world's largest conference on research and technology of spoken language understanding and processing.

This year, a total of 13 papers from Samsung Research’s R&D centers around the world were accepted at INTERSPEECH 2022. In this relay series, we introduce summaries of 6 of these research papers.

- Part 1: Human Sound Classification based on Feature Fusion Method with Air and Bone Conducted Signal
- Part 3: Bunched LPCNet2: Efficient Neural Vocoders Covering Devices from Cloud to Edge (by Samsung Research)
- Part 5: Prototypical Speaker-Interference Loss for Target Voice Separation Using Non-Parallel Audio Samples

Background

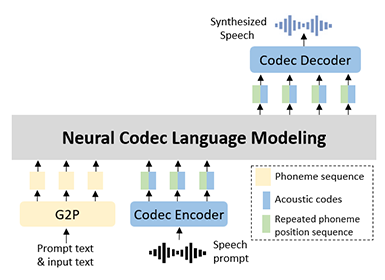

Nowadays, as many types of edge devices emerge, people use text-to-speech (TTS) services in their daily lives without device constraints. Although most TTS systems have so far been launched on cloud servers, running them on edge devices resolves significant concerns such as latency, privacy, and internet connectivity. To deploy TTS services on these devices, optimizing performance within the given resources is crucial. Additionally, it is worthwhile to expand the service capability while maintaining one architecture, instead of developing new architectures for specific devices. For this purpose, FBWave [1] proposed a flow-based scalable vocoder architecture whose computational cost is easy to control. However, it demonstrated insufficient speech quality for cloud servers, even in high-quality mode.

We address this issue with an efficient auto-regressive (AR) vocoder. Non-AR approaches that run in parallel on GPUs have also been attempted [2-4], but few edge devices have a GPU. In addition, an AR vocoder has the advantage of providing streaming functionality with low latency. From this point of view, LPCNet [5] is a lightweight AR vocoder that is suitable for on-device inference. To the best of our knowledge, LPCNet is still one of the best candidates for efficiently generating high-quality speech on a CPU. Many LPCNet variants have been introduced [6-9] to reduce complexity without sacrificing speech quality. However, these variants are unsatisfactory for low-resource edge devices because they target high-end devices, such as smartphones. A quality drop is also observed when the network capacity is shrunk for low-resource devices.

In this blog post, we introduce our works Bunched LPCNet1 and Bunched LPCNet2, which were published at INTERSPEECH 2020 and INTERSPEECH 2022, respectively. We propose an improved LPCNet with highly efficient performance and a small model footprint to cover a wide range of devices, from cloud servers to low-resource edge devices.

Bunched LPCNet1

Figure 1. Original LPCNet architecture

The original LPCNet [5], as depicted in Figure 1, keeps the computational complexity low by using an all-pole LPC filter [10] for modeling the vocal tract response and a small neural network for predicting the excitation signals. It comprises a frame rate network (FRN) that runs once per input frame, and a sample rate network (SRN) that runs N (frame size) times per frame generating one audio sample per inference. As a result, most of the computational burden resides in the SRN.
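As a rough illustration of this split between the LPC filter and the network, the sketch below rebuilds samples as s_t = p_t + e_t, where p_t is the linear prediction from past samples and e_t is the excitation. The function names, the sign convention of the coefficients, and the list-based interface are assumptions made for illustration; in LPCNet the excitations would be sampled from the SRN output one at a time rather than passed in as a list.

```python
def lpc_predict(history, lpc_coeffs):
    """Linear prediction p_t = sum_k a_k * s_{t-k}.

    history[-1] is s_{t-1}, history[-2] is s_{t-2}, and so on.
    """
    return sum(a * s for a, s in zip(lpc_coeffs, reversed(history)))


def synthesize(excitations, lpc_coeffs, history):
    """Rebuild samples from excitations: s_t = p_t + e_t, one sample per step."""
    history = list(history)  # copy so the caller's list is not modified
    order = len(lpc_coeffs)
    samples = []
    for e in excitations:
        recent = history[-order:] if order else []
        s = lpc_predict(recent, lpc_coeffs) + e
        samples.append(s)
        history.append(s)  # auto-regressive: the new sample feeds the next prediction
    return samples


# Toy example with a 2nd-order predictor (illustrative values only).
print(synthesize([0.0, 0.5], lpc_coeffs=[1.0, -0.5], history=[1.0, 2.0]))
```

Because the filter already captures the coarse spectral shape, the network only has to model the residual excitation, which is why a small SRN can suffice.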

Figure 2. Bunched LPCNet architecture

The Bunched LPCNet1 architecture introduced the Sample Bunching and Bit Bunching techniques to further reduce the complexity of the original LPCNet; in this blog post we briefly present only the Sample Bunching concept. The key idea of sample bunching is to have the SRN generate more than one sample (hereafter called a bunch) per inference, so that it runs fewer times and the computational cost is reduced. We maintain the auto-regressive nature of LPCNet by conditioning the current output on past outputs, which enables audio quality to be maintained beyond a bunch size of 2. The bunching approach allows multi-sample generation on any hardware, including low-end CPUs.
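The effect on the number of SRN inferences can be sketched with a small loop. Everything here is a simplified stand-in: `dummy_srn_step` is a hypothetical placeholder for the real network, and the frame size of 160 samples is only an illustrative value, not one stated in this post.

```python
import math


def srn_calls_per_frame(frame_size, bunch_size):
    """Number of SRN inferences needed to produce one frame of samples."""
    return math.ceil(frame_size / bunch_size)


def generate_frame(frame_size, bunch_size, srn_step):
    """Generate one frame, asking the SRN for up to bunch_size samples per call.

    srn_step(past_samples, n) must return n new samples; it sees everything
    generated so far, which keeps the loop auto-regressive across bunches.
    """
    samples, calls = [], 0
    while len(samples) < frame_size:
        n = min(bunch_size, frame_size - len(samples))
        samples.extend(srn_step(samples, n))
        calls += 1
    return samples, calls


def dummy_srn_step(past_samples, n):
    # Hypothetical placeholder for the network: emits silence.
    return [0.0] * n


for s in (1, 2, 4):
    _, calls = generate_frame(160, s, dummy_srn_step)
    print(f"bunch size {s}: {calls} SRN calls per frame")
```

With these illustrative numbers, a bunch size of 4 cuts the SRN calls per frame from 160 to 40.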

Our proposition is that the GRUs in the SRN have sufficient model capacity to generate a bunch of samples. As seen in Figure 2, the SRN shares the GRU layers across all the samples in the bunch and has an individual dual FC layer for each excitation prediction in the bunch. The input to the dual FC layer for the first excitation is conditioned only on the output from GRUB, while for the rest it is also conditioned on the previous excitations within the bunch via embedding feeds.

To verify the efficacy of the bunching approach in complexity reduction, we compare the validation loss of the proposed system with that of baseline systems with fewer GRUA units (M_A) in Figure 3. Sample bunching shows lower complexity for the same validation loss. This suggests that the proposed approach is more efficient than reducing the number of units in the RNN layers.

Figure 3. Validation loss versus RTF (baseline systems with varying M_A and sample bunching systems)

Bunched LPCNet2

Sample Bunching is sufficiently efficient for mobile devices such as smartphones. However, several limitations remain when further reducing computation for low-resource devices. (1) Sample Bunching reduces the computations of the GRUs to 1/S of the original. Hence, as the bunch size S increases, the additional reduction saturates, especially for S>4. (2) Reducing the number of GRUA units together with the Bunching techniques significantly degrades the speech quality. Note that GRUA is the most computationally expensive layer in LPCNet. (3) The model footprint becomes large because of the embedding tables corresponding to the feedback samples. To overcome these problems, we improved the Bunched LPCNet architecture with three contributions.
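The saturation in limitation (1) follows directly from the 1/S relation, as the short calculation below shows. The helper names are ours, and the sketch deliberately ignores every other cost (output layers, embeddings, the FRN), so it only illustrates the trend.

```python
def gru_cost_fraction(bunch_size):
    """GRU computation relative to the unbunched model, assuming cost scales as 1/S."""
    return 1.0 / bunch_size


def marginal_saving(bunch_size):
    """Extra share of the original GRU cost saved by going from S-1 to S."""
    return gru_cost_fraction(bunch_size - 1) - gru_cost_fraction(bunch_size)


for s in range(2, 7):
    print(f"S={s}: remaining cost {gru_cost_fraction(s):.3f}, "
          f"extra saving {marginal_saving(s):.3f}")
```

Going from S=1 to S=2 removes half of the GRU cost, whereas going from S=4 to S=5 removes only a further 5% of the original cost.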

1. Single Logistic Output Layer that Extends the Coverage of Bunched LPCNet to Low-Resource Constraints.

Auto-regressive vocoders generate waveforms by sampling from a probability distribution that is modeled either non-parametrically or parametrically. Non-parametric methods, e.g., a softmax output layer, can model complex distributions and perform well in modeling waveforms [5,11], but the computations in the output layer and the sampling process from a categorical distribution are expensive because of the large output dimension; for example, 256 for 8-bit μ-law quantization [12]. Additionally, the speech quality is significantly degraded when the model has insufficient capacity. Accordingly, we attempted to employ parametric distributions and found that a logistic output layer, particularly with a single mixture, is a good alternative in terms of efficiency.
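To make the 256-way output dimension concrete, here is a minimal sketch of μ-law companding with μ = 255, which maps each sample to one of 256 classes. It uses the continuous companding formula rather than the bit-exact G.711 segment encoding, and the function names are ours.

```python
import math

MU = 255  # 8-bit mu-law: classes 0..255, i.e. 256 softmax outputs


def mulaw_encode(x, mu=MU):
    """Map a sample x in [-1, 1] to an integer class in [0, mu]."""
    x = max(-1.0, min(1.0, x))
    y = math.copysign(math.log1p(mu * abs(x)) / math.log1p(mu), x)
    return int(round((y + 1.0) / 2.0 * mu))


def mulaw_decode(index, mu=MU):
    """Map an integer class in [0, mu] back to a sample in [-1, 1]."""
    y = 2.0 * index / mu - 1.0
    return math.copysign(math.expm1(abs(y) * math.log1p(mu)) / mu, y)


for x in (-1.0, -0.5, 0.01, 0.5, 1.0):
    k = mulaw_encode(x)
    print(f"{x:+.2f} -> class {k:3d} -> {mulaw_decode(k):+.4f}")
```

A softmax output layer has to produce a probability for every one of these classes at each step, which is where the cost mentioned above comes from.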

We compare the mel-cepstral distances (MCD) [13] of the single logistic (SL) and softmax output layers for models with a smaller bunch size S and fewer GRUA units (M_A) in Figure 4. As the model capacity decreases, the SL layers work more efficiently than the softmax layers. We believe that a non-parametric loss could lead the network to predict complex distributions beyond the model capacity. That is, it could cause an under-fitting problem when the model capacity is too small. In contrast, a unimodal parametric distribution simplifies the objective and lowers the training difficulty by reducing the burden on the network. It can therefore mitigate the quality reduction despite insufficient capacity.

Figure 4. MCD plots of softmax and single logistic output layer
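For readers unfamiliar with the metric, the commonly used per-frame form of MCD can be sketched as follows. This is the textbook formula, not necessarily the exact configuration behind Figure 4: the cepstral order, whether the 0th coefficient is excluded, and how frames are aligned and averaged are all assumptions left to the caller.

```python
import math


def mel_cepstral_distance(ref, syn):
    """Per-frame MCD in dB between two mel-cepstral vectors of equal length."""
    if len(ref) != len(syn):
        raise ValueError("cepstral vectors must have the same length")
    squared_error = sum((r - s) ** 2 for r, s in zip(ref, syn))
    return (10.0 / math.log(10.0)) * math.sqrt(2.0 * squared_error)


def mean_mcd(ref_frames, syn_frames):
    """Average the per-frame MCD over already time-aligned frames."""
    distances = [mel_cepstral_distance(r, s) for r, s in zip(ref_frames, syn_frames)]
    return sum(distances) / len(distances)


print(mel_cepstral_distance([1.0, 0.2], [0.9, 0.25]))
```

Lower values mean the synthesized spectrum is closer to the reference.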

Figure 5 presents our network architecture for the SL layer; the details are omitted in this post. By employing deep and narrow layers, the proposed architecture demonstrates better performance at a lower complexity than the softmax layer. Moreover, the sampling process of the SL layer is simple and computationally cheap because it uses only scalar operations.

Figure 5. Network architecture for single logistic output layer
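To show why sampling from a single logistic distribution needs only scalar operations, here is a minimal sketch based on the standard inverse-CDF method. The post does not spell out the exact sampling procedure, so treat this as an assumption; `mu` and `scale` stand for the two values the output layer would predict, and the names are ours.

```python
import math
import random


def sample_logistic(mu, scale, rng=random):
    """Draw one sample from Logistic(mu, scale) by inverting its CDF.

    If u ~ Uniform(0, 1), then mu + scale * log(u / (1 - u)) is logistic.
    """
    u = rng.random()
    u = min(max(u, 1e-7), 1.0 - 1e-7)  # keep both logs finite
    return mu + scale * (math.log(u) - math.log1p(-u))


rng = random.Random(0)  # fixed seed so the sketch is repeatable
draws = [sample_logistic(0.2, 0.05, rng) for _ in range(5)]
print(draws)
```

Compared with sampling from a 256-way categorical distribution, this takes one uniform draw and a couple of logarithms per sample.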

2. Dual-rate LPCNet for Computational and Maintenance Cost Reduction.

For low-resource devices, a more compact model is required. However, a model with low capacity inevitably generates annoying artifacts, e.g., noisy or muffled sounds. Reducing the sampling rate could be a solution to this problem.

Meanwhile, the prosody model of a TTS system requires continuous maintenance, even after deployment, so that pronunciation errors or unnatural prosody for given texts can be fixed. In other words, employing an additional prosody model for a low-sampling-rate vocoder increases the maintenance cost. For this reason, we propose DualRate LPCNet, an architecture that provides two sampling rates (24 kHz and 16 kHz) from one prosody model, as depicted in Figure 6.

Figure 6. Training / inference procedure of DualRate LPCNet

The feature conversion block computes F'16, the input of the 16 kHz LPCNet, by removing unnecessary information from F24 so that it has higher mutual information with the target x16. Using the 16 kHz LPCNet trained with the converted F'16, we can save on both computational and maintenance costs. Similarly, TTS systems for given devices can be easily deployed by choosing suitable LPCNet models with various complexities.

3. Insightful Tricks that Significantly Reduce the Model Footprint.

To optimize the model footprint, we attempted to feed scalar values back into GRUA. The first method worth considering is to feed back the scalar values directly, without the embedding table. However, this wastes model capacity, because the network itself must transform the scalar values into the desired representation. To feed the desired values back into the network without wasting model capacity, a nonlinear mapping function from a quantized value to a continuous value is useful; this is an embedding table whose embedding dimension for the feedback samples is 1. This could degrade the speech quality compared to an embedding dimension of 128. However, we determined that it has only a small effect on the performance, whereas the size of the embedding table is significantly reduced.
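A back-of-the-envelope calculation shows how much a single embedding table shrinks. The 256 entries correspond to the 8-bit μ-law classes mentioned earlier; the 4 bytes per parameter and the helper names are assumptions for illustration, and the real saving also depends on how many feedback signals have their own table.

```python
def embedding_table_params(num_levels, embedding_dim):
    """Number of parameters in one embedding table."""
    return num_levels * embedding_dim


def embedding_table_bytes(num_levels, embedding_dim, bytes_per_param=4):
    """Storage for one table, assuming 4-byte (float32) parameters."""
    return embedding_table_params(num_levels, embedding_dim) * bytes_per_param


for dim in (128, 1):
    params = embedding_table_params(256, dim)
    size = embedding_table_bytes(256, dim)
    print(f"embedding dimension {dim:3d}: {params:6d} parameters, {size:7d} bytes")
```

Under these assumptions each table drops from 32,768 parameters to 256, a 128-fold reduction. A narrower embedding also shrinks the input of the layer that consumes it.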

Experiments

Several presets of hyperparameters were chosen empirically to satisfy various device specifications, as summarized in Table 1 and compared with Bunched LPCNet1. We controlled the complexity of the models using the bunch size S and the number of GRUA units, and selected the output layer that works efficiently for the given model capacity.

Table 1. Evaluation system configurations

For the quality evaluation, we conducted mean opinion score (MOS) tests [14] with a Tacotron variant prosody model. For the complexity evaluation, we measured the RTF (Real Time Factor) on two devices: 1) an AWS c5.4xlarge instance (Intel Xeon Platinum 8124M CPU @ 3.00GHz) with Ubuntu 18.04 - representing a cloud device and 2) a Raspberry Pi (RPi) 3B v1.2 (BCM2837 @ 1.20 GHz) with Tizen 6.5 - representing an edge device. Table 2 presents the evaluation results.

Table 2. MOS with 95% confidence intervals and real time factor on each CPU architecture
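For reference, RTF is usually defined as processing time divided by the duration of the generated audio, so values below 1 mean faster than real time. The sketch below follows that usual definition; `measure_rtf` and its `synthesize` callback are hypothetical helpers, not the measurement harness used for Table 2.

```python
import time


def real_time_factor(processing_seconds, audio_seconds):
    """RTF = time spent synthesizing / duration of the synthesized audio."""
    return processing_seconds / audio_seconds


def speedup(rtf_baseline, rtf_candidate):
    """How many times faster the candidate is than the baseline."""
    return rtf_baseline / rtf_candidate


def measure_rtf(synthesize, num_samples, sample_rate):
    """Time a callable that generates num_samples samples and return its RTF."""
    start = time.perf_counter()
    synthesize(num_samples)
    elapsed = time.perf_counter() - start
    return real_time_factor(elapsed, num_samples / sample_rate)


# Placeholder "vocoder" that just allocates silence, timed for 1 s of 24 kHz audio.
print(measure_rtf(lambda n: [0.0] * n, num_samples=24000, sample_rate=24000))
```

A speed ratio between two systems on the same device is then simply the ratio of their RTFs.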

The B-LPCNet2 systems with an SL layer demonstrated highly efficient performance. B-LPCNet2-L generated sufficiently high-fidelity speech for cloud TTS. B-LPCNet2-R achieved a lower complexity than B-LPCNet. B-LPCNet2-S and B-LPCNet2-S16 work 7.7x and 10.9x faster than B-LPCNet2-L, respectively, on the RPi while maintaining a satisfactory speech quality. By employing them with a lightweight prosody model, a real-time TTS system can be deployed on low-resource edge devices.

The systems with an embedding dimension of 128, including B-LPCNet, have a relatively large number of parameters. This is because the model size of Bunched LPCNet depends heavily on the bunch size, the embedding dimension, and the number of GRUA units. In contrast, the B-LPCNet2 architectures with an SL layer show significantly smaller footprints of approximately 1.1 MB.

Conclusion

In this blog post, we introduced Bunched LPCNet2, which is a highly efficient neural vocoder architecture, and some presets to provide high-fidelity real-time TTS service to satisfy various device specifications, as confirmed by the MOS tests and RTF evaluations. We also investigated a simple technique that can significantly reduce the model size without degrading the speech quality. Compared with our previous study, B-LPCNet2-R achieved better speech quality and lower complexity with a model size of only 1.1 MB. In terms of quality, complexity, and model footprint, Bunched LPCNet2 would be the best candidate for low-resource edge devices, such as smart watches, wireless earphones, and home assistant devices.

Link to the audio samples

https://srtts.github.io/

References

[1]. B. Wu, Q. He, P. Zhang, T. Koehler, K. Keutzer, and P. Vajda, “Fbwave: Efficient and scalable neural vocoders for streaming text-to-speech on the edge,” arXiv preprint arXiv:2011.12985, 2020.

[2]. R. Prenger, R. Valle, and B. Catanzaro, “Waveglow: A flow-based generative network for speech synthesis,” in Proc. of the IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 3617–3621, 2019.

[3]. K. Kumar, R. Kumar, T. de Boissiere, L. Gestin, W. Z. Teoh, J. Sotelo, A. de Brebisson, Y. Bengio, and A. C. Courville, “Mel-gan: Generative adversarial networks for conditional waveform synthesis,” in Proc. of Advances in Neural Information Processing Systems, 2019.

[4]. J. Kong, J. Kim, and J. Bae, “Hifi-gan: Generative adversarial networks for efficient and high fidelity speech synthesis,” in Proc. of Advances in Neural Information Processing Systems, 2020.

[5]. J.-M. Valin and J. Skoglund, “LPCNet: Improving Neural Speech Synthesis through Linear Prediction,” in Proc. of the IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 5891–5895, 2019.

[6]. M.-J. Hwang, E. Song, R. Yamamoto, F. Soong, and H.-G. Kang, “Improving lpcnet-based text-to-speech with linear prediction-structured mixture density network,” in Proc. of the IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 7219–7223, 2020.

[7]. K. Matsubara, T. Okamoto, R. Takashima, T. Takiguchi, T. Toda, Y. Shiga, and H. Kawai, “Full-band lpcnet: A real-time neural vocoder for 48 khz audio with a cpu,” IEEE Access, vol. 9, pp. 94923–94933, 2021.

[8]. V. Popov, M. Kudinov, and T. Sadekova, “Gaussian lpcnet for multisample speech synthesis,” in Proc. of the IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 6204–6208, 2020.

[9]. Y. Cui, X. Wang, L. He, and F. K. Soong, “An efficient subband linear prediction for lpcnet-based neural synthesis,” in Proc. of INTERSPEECH, pp. 3555–3559, 2020.

[10]. J. Makhoul, “Linear prediction: A tutorial review,” Proceedings of the IEEE, vol. 63, no. 4, pp. 561–580, 1975.

[11]. A. v. d. Oord, S. Dieleman, H. Zen, K. Simonyan, O. Vinyals, A. Graves, N. Kalchbrenner, A. Senior, and K. Kavukcuoglu, “Wavenet: A generative model for raw audio,” arXiv preprint arXiv:1609.03499, 2016.

[12]. ITU-T Recommendation G.711, Pulse Code Modulation (PCM) of voice frequencies, 1988.

[13]. R. Kubichek, “Mel-cepstral distance measure for objective speech quality assessment,” in Proc. of IEEE Pacific Rim Conference on Communications Computers and Signal Processing, vol. 1, pp. 125–128, 1993.

[14]. ITU-T Recommendation P.800, Methods for subjective determination of transmission quality, 1996.