
[INTERSPEECH 2022 Series #4] Cross-Modal Decision Regularization for Simultaneous Speech Translation


The 23rd Annual Conference of the International Speech Communication Association (INTERSPEECH 2022) was held in Incheon, South Korea. Hosted by the International Speech Communication Association (ISCA), INTERSPEECH is the world's largest conference on research and technology of spoken language understanding and processing. This year, a total of 13 papers from Samsung Research’s R&D centers around the world were accepted at INTERSPEECH 2022. In this relay series, we introduce summaries of 6 of these research papers.

- Part 1: Human Sound Classification based on Feature Fusion Method with Air and Bone Conducted Signal
- Part 4: Cross-Modal Decision Regularization for Simultaneous Speech Translation (by Samsung Research)
- Part 5: Prototypical Speaker-Interference Loss for Target Voice Separation Using Non-Parallel Audio Samples

Background

In today’s world of virtual meetings, conferences, and multimedia, automatic speech translation has a wide variety of applications. Traditional offline speech translation models used a cascade of speech recognition and text translation. In our prior work [1], we developed efficient techniques for end-to-end speech translation that outperform traditional cascaded approaches.

From the customer's perspective, simultaneous speech translation offers much more exciting use cases than offline translation. It has the potential to enable real-time communication between speakers of different languages. However, this task still remains largely unresolved. On top of translating from one language to another, these systems also need to make read/write decisions, i.e., they need to decide when to read and when to produce the output based on the information contained in the input. This poses modeling issues that cannot be tackled by existing translation models such as the Transformer.
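The read/write loop described above can be illustrated with a small simulation. This is only a sketch: it uses a fixed wait-k rule (read k source tokens ahead, then alternate) as a stand-in policy, which is not the learned policy discussed in this post, and the names `wait_k_policy` and `simulate_actions` are hypothetical.

```python
from typing import Callable, List

Policy = Callable[[int, int], str]


def wait_k_policy(k: int) -> Policy:
    """Illustrative fixed policy: stay k source tokens ahead of the output."""
    def policy(num_read: int, num_written: int) -> str:
        return "READ" if num_read - num_written < k else "WRITE"
    return policy


def simulate_actions(source_len: int, target_len: int, policy: Policy) -> List[str]:
    """Return the sequence of R (read) / W (write) actions taken by the policy."""
    actions: List[str] = []
    num_read = 0
    num_written = 0
    while num_written < target_len:
        # Once the source is exhausted, the system can only write.
        if num_read < source_len and policy(num_read, num_written) == "READ":
            num_read += 1
            actions.append("R")
        else:
            num_written += 1
            actions.append("W")
    return actions


print("".join(simulate_actions(4, 4, wait_k_policy(2))))  # RRWRWRWW
```

A learned policy such as MMA replaces the fixed rule with a decision that depends on the content read so far, which is what makes the task harder than offline translation.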

Recent approaches [2] use the Monotonic Multi-head Attention (MMA) model for the simultaneous speech translation task. We have been working to improve various aspects of MMA-based simultaneous translation. In one of our works [3], we integrated external knowledge into MMA using a language model.

While analyzing these in-house systems, we observe differences in the performance and decision policy of simultaneous speech translation models and simultaneous text translation models. In this blog post, we introduce our work (INTERSPEECH 2022) which aims to utilize simultaneous text translation models to improve simultaneous speech translation performance.

Cross-Modal Decision Regularization

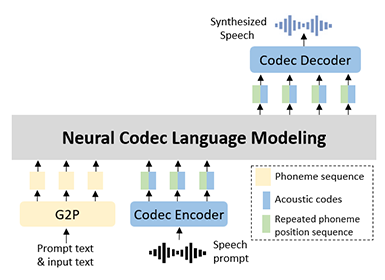

Figure 1. An example of using simultaneous text translation decisions to improve simultaneous speech translation for English-German translation

For an MMA-based simultaneous speech translation model, read/write decisions are guided by the monotonic attention energies learned during training. In the absence of direct supervision for the decision policy, the MMA model learns these decisions by balancing the trade-off between output accuracy and latency. These read/write decisions depend on the amount of information contained in the source sequence and on the word orders of the source and target languages. Since neither factor depends on the input modality, the decision policy for speech and text inputs remains the same. Moreover, because speech inputs are relatively more complex, it is easier to learn the read/write decisions from text data. Hence, simultaneous text translation decisions can potentially serve as a reference to improve the simultaneous speech translation decision policy, as shown in Figure 1.
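To make the link between attention energies and read/write decisions concrete, here is a minimal single-head sketch. It assumes the usual hard monotonic attention rule at inference time (the selection probability is the sigmoid of the energy, thresholded at 0.5). The function name and the example energies are hypothetical, and the way MMA combines several heads is left out.

```python
import math
from typing import List


def next_write_position(energies: List[float], start: int, threshold: float = 0.5) -> int:
    """Scan encoder positions from `start` and return the first one selected.

    `energies[j]` is the monotonic attention energy for encoder position j
    at the current decoder step. Returning len(energies) means no position
    read so far was selected, i.e. the model needs to read more input.
    """
    for j in range(start, len(energies)):
        p_select = 1.0 / (1.0 + math.exp(-energies[j]))  # sigmoid of the energy
        if p_select >= threshold:
            return j  # stop reading and write the next target token
    return len(energies)


energies = [-2.0, -1.0, 0.5, 3.0]          # made-up values for illustration
print(next_write_position(energies, 0))    # 2
```

Because the scan resumes from the previously selected position at the next decoder step, the attention can only move forward over the source, which is what makes it monotonic.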

We propose Cross-Modal Decision Regularization (CMDR), which uses the monotonic attention energies of the simultaneous text translation model to implicitly guide the decision policy of the simultaneous speech translation model. We also extend several techniques from the offline speech translation domain, such as multitask learning, online knowledge distillation, and cross-attentive regularization, to the simultaneous speech translation task. Experiments on the MuST-C En-De dataset show that the proposed CMDR, together with these approaches, significantly improves the performance of MMA-based simultaneous speech translation systems.

For MMA, the read/write decision policy of the simultaneous translation model is controlled by the monotonic attention. CMDR aims to improve the simultaneous speech translation decision policy using the monotonic energy activations of the simultaneous text translation model. It computes the similarity between the monotonic energies of the speech and text inputs for each training example. However, this similarity cannot be computed directly, since the attention energies corresponding to speech (As) and text (At) have different sizes due to the different input lengths. Similar to [4], we use self-attention and cross-attention operations with respect to At to obtain attention representations At→t and As→t, which have the same size. The distance between these reconstructed representations provides the required cross-modal similarity metric for each example.

During joint training of simultaneous speech translation and simultaneous text translation, each training example consists of the input speech, the corresponding transcript in the source language, and the output text in the target language. Let K and L denote the lengths of the speech and text representations at the output of the encoder. For each attention head, the monotonic attention energies for speech and text contain one entry per encoder output token (K entries for speech, L for text), where each entry is the monotonic attention corresponding to that token, aggregated across all decoder indices. The attention energies from the H different heads are then stacked to form As and At.

Next, similarity matrix S is used to obtain As→t via cross-attention between As and At. Similarly, At→t is obtained from At by using self-attention.

For each decoder layer, the CMDR loss is computed from the distance between As→t and At→t, with a stop-gradient operator (sg) applied to the text side so that the model uses the text attention as a reference for the speech attention. Finally, the CMDR loss is obtained by averaging across the M decoder layers.
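The reconstruction and distance steps for one decoder layer can be sketched in plain Python. This is an illustration under several assumptions that are not stated in this post: rows index encoder tokens and columns index heads, the attention weights are a row-wise softmax over unscaled dot products, and the distance is an L2 norm divided by the text length L. All function names are hypothetical.

```python
import math
from typing import List

Matrix = List[List[float]]


def softmax_rows(m: Matrix) -> Matrix:
    """Numerically stable softmax applied to each row."""
    out = []
    for row in m:
        peak = max(row)
        exps = [math.exp(v - peak) for v in row]
        total = sum(exps)
        out.append([e / total for e in exps])
    return out


def attend(query: Matrix, memory: Matrix) -> Matrix:
    """Reconstruct `memory` at the positions of `query`: softmax(Q M^T) M."""
    scores = [[sum(q * m for q, m in zip(q_row, m_row)) for m_row in memory]
              for q_row in query]
    weights = softmax_rows(scores)  # similarity matrix S after normalization
    width = len(memory[0])
    return [[sum(w * m_row[c] for w, m_row in zip(w_row, memory))
             for c in range(width)] for w_row in weights]


def cmdr_distance(a_speech: Matrix, a_text: Matrix) -> float:
    """Distance between As->t and At->t for one decoder layer.

    a_speech is K x H and a_text is L x H; both reconstructions are L x H.
    """
    a_s2t = attend(a_text, a_speech)  # cross-attention between text and speech
    a_t2t = attend(a_text, a_text)    # self-attention; in training this would sit
                                      # under a stop-gradient so text is the reference
    squared = sum((s - t) ** 2
                  for s_row, t_row in zip(a_s2t, a_t2t)
                  for s, t in zip(s_row, t_row))
    return math.sqrt(squared) / len(a_text)


speech = [[0.1, 0.7], [0.2, 0.6], [0.4, 0.3], [0.8, 0.1], [0.9, 0.2]]  # K=5, H=2
text = [[0.2, 0.6], [0.5, 0.3], [0.9, 0.1]]                             # L=3, H=2
print(cmdr_distance(speech, text))
```

Both reconstructions have L rows regardless of K, which is what allows speech and text energies of different lengths to be compared. In an automatic differentiation framework, the final loss would average this quantity across the M decoder layers.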

In addition to the proposed CMDR approach, this work also extends several existing techniques from the offline speech translation domain. Firstly, it employs multitask learning by training a simultaneous text translation model along with the simultaneous speech translation model. It also extends the online knowledge distillation (KD) and cross-attentive regularization (CAR) losses to simultaneous speech translation. Finally, similar to other MMA-based translation systems, it uses the differentiable average lagging (DAL) [5] loss to control the latency of simultaneous translation models.

The overall loss combines the negative log-likelihood losses for both speech translation (ST) and text translation (MT) with the KD, CAR, CMDR, and DAL losses, where the DAL term is weighted by λ. It is minimized over the speech and text model parameters.
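The DAL latency term can be sketched as follows, based on our reading of the definition in [5]. The sketch takes plain per-token delays measured in source tokens. In training, these would be expected delays derived from the monotonic attention so that the term stays differentiable. The function name is hypothetical.

```python
from typing import List


def differentiable_average_lagging(delays: List[float], source_len: int) -> float:
    """Compute DAL for one sentence.

    delays[i] is the number of source tokens read before writing target
    token i (0-indexed). Each delay is first adjusted so that consecutive
    writes are at least 1/gamma apart, where gamma = target_len / source_len.
    """
    target_len = len(delays)
    gamma = target_len / source_len
    min_step = 1.0 / gamma
    total = 0.0
    previous = 0.0
    for i, delay in enumerate(delays):
        adjusted = delay if i == 0 else max(delay, previous + min_step)
        total += adjusted - i / gamma  # lag relative to an ideal zero-latency system
        previous = adjusted
    return total / target_len


# Delays of the wait-2 schedule R R W R W R W W on a 4-token source.
print(differentiable_average_lagging([2, 3, 4, 4], source_len=4))  # 2.0
# Offline translation: everything is read before the first write.
print(differentiable_average_lagging([4, 4, 4, 4], source_len=4))  # 4.0
```

Adding this term to the training objective with a positive weight penalizes policies that read far ahead, while a weight of zero leaves latency unconstrained.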

Experiments

We use the MuST-C and WMT datasets for our experiments. Detailed statistics are provided in Table 1.

Table 1. Dataset statistics (# - Number of)

The model is first trained for 150 epochs (110 hours / 4.5 days) without the differentiable average lagging (DAL) latency loss by setting λ=0 in the loss equation. These models are referred to as λ0. Finally, the models are finetuned for a further 50 epochs (40 hours) after adding the DAL loss. As mentioned earlier, we use a number of existing approaches to build a better baseline. These include data augmentation, knowledge distillation, and cross-attentive regularization. The results corresponding to all the approaches for the λ0 models are given in Table 2.

Table 2. Performance of various approaches: λ0 models

Next, we look at the latency-quality curves corresponding to different models in Table 2.

As seen in Figure 2, the existing approaches we use improve the performance of MMA and build a stronger baseline. Further, Figure 3 shows that the proposed CMDR provides consistent improvements across different latency regimes.

Conclusion

In this work, we leverage the simultaneous text translation task to improve the performance of simultaneous speech translation systems. Various techniques from the offline speech translation domain, such as online knowledge distillation and cross-attentive regularization, are found to be beneficial for the simultaneous speech translation task. To improve the performance further, we also propose Cross-Modal Decision Regularization. It improves the read/write decision policy for simultaneous speech translation by using the monotonic attention energies of the simultaneous text translation model. This work improves the performance of MMA-based simultaneous speech translation by 35% or 4.5 BLEU points across different latency regimes.

Link to the paper

https://www.isca-speech.org/archive/pdfs/interspeech_2022/zaidi22_interspeech.pdf

References

[1]. S. Indurthi*, M. A. Zaidi*, N. Kumar Lakumarapu, B. Lee, H. Han, S. Ahn, S. Kim, C. Kim, and I. Hwang, “Task aware multi-task learning for speech to text tasks,” in ICASSP 2021 - 2021 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2021, pp. 7723–7727.

[2]. X. Ma, J. Pino, and P. Koehn, “Simulmt to simulst: Adapting simultaneous text translation to end-to-end simultaneous speech translation,” arXiv preprint arXiv:2011.02048, 2020.

[3]. S. R. Indurthi, M. A. Zaidi, B. Lee, N. K. Lakumarapu, and S. Kim, “Language model augmented monotonic attention for simultaneous translation,” in Proceedings of the 2022 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies. Seattle, United States: Association for Computational Linguistics, Jul. 2022, pp. 38–45. [Online]. Available: https://aclanthology.org/2022.naacl-main

[4]. Y. Tang, J. Pino, X. Li, C. Wang, and D. Genzel, “Improving speech translation by understanding and learning from the auxiliary text translation task,” arXiv preprint arXiv:2107.05782, 2021.

[5]. C. Cherry and G. Foster, “Thinking slow about latency evaluation for simultaneous machine translation,” arXiv preprint arXiv:1906.00048, 2019.