
Feature Kernel Distillation

We study the significance of Feature Learning in Neural Networks (NNs) for Knowledge Distillation (KD), a popular technique to improve an NN model’s generalisation performance using a teacher NN model. We propose a principled framework, Feature Kernel Distillation (FKD), which performs distillation directly in the feature space of NNs and is therefore able to transfer knowledge across different datasets. We provide theoretical justification for FKD in a multi-view data setting, and use insights from our theory to introduce implementation details that improve FKD in practice relative to other feature-based KD approaches across various experimental settings. FKD will be published at The Tenth International Conference on Learning Representations (ICLR 2022).

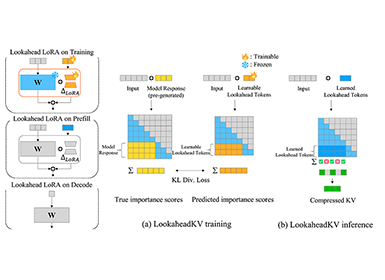

Figure 1. Feature Kernel Distillation (FKD) from the feature extractor of a teacher to that of a student.

Key idea: use the teacher feature kernel as the regularisation objective for distillation.

Motivation

There are many complicated properties of Neural Networks which are distinct from more classical techniques, like kernels or random features. A high-level reason for this distinction is that non-convex models like NNs are capable of feature learning, where the model flexibly adapts its internal representations of data by using the available training data. On the other hand, convex models like kernels or random features can be viewed as feature selection methods, where representations of data are fixed. Knowledge Distillation (Bucilua et al., 2006), where we improve a student’s performance using “dark knowledge” from a teacher model, is one example of perplexing NN behaviour which is not found in feature selection methods, and has been widely adopted in deep learning.

The original vanilla KD approach for NNs (Hinton et al., 2015) distils knowledge from the teacher by training the student on a loss, Eq. (1), that regularises the student towards the teacher in prediction space.

It’s been observed that KD can dramatically improve a student NN’s performance, and even allow a student to outperform its teacher (Furlanello et al., 2018; Zhang et al., 2019). To us, the success of KD in NNs is hugely surprising in the first place! Given that we are not changing the student model space at all, the student clearly has enough capacity to perform well. Thus, in order to make sense of KD, we must consider the optimisation procedure of a student model.

Allen-Zhu and Li (2020) provide the first theoretical exposition of the mechanisms by which KD improves generalisation in NNs. However, their analysis is restricted to the vanilla KD formulation in Eq. (1). We note that vanilla KD requires shared prediction spaces between student and teacher, such that the student’s and teacher’s predictions lie in the same space. For example, one cannot apply vanilla KD when the teacher and student are trained on related image datasets with different numbers of classes, e.g. CIFAR-100 and CIFAR-10. With this in mind, our goal with FKD was to design a theoretically principled distillation method that is agnostic to the teacher and student prediction spaces.
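To make the shared-prediction-space requirement concrete, here is a minimal sketch of a vanilla-KD-style penalty in the spirit of Hinton et al. (2015). It is not the exact form of Eq. (1): the function names, the temperature value and the use of a KL divergence between temperature-softened predictions are assumptions made for illustration.

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax over a list of logits."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def vanilla_kd_penalty(student_logits, teacher_logits, temperature=4.0):
    """KL(teacher || student) between temperature-softened predictions.

    Hypothetical helper: the two logit vectors must have the same length,
    i.e. student and teacher must share a prediction space.
    """
    if len(student_logits) != len(teacher_logits):
        raise ValueError("vanilla KD needs a shared prediction space")
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    return sum(pt * math.log(pt / ps) for pt, ps in zip(p_teacher, p_student))


# Same number of classes: the penalty is well defined.
same_space = vanilla_kd_penalty([2.0, 0.5, -1.0], [1.5, 1.0, -0.5])

# A 10-class student against a 100-class teacher cannot be compared directly.
try:
    vanilla_kd_penalty([0.0] * 10, [0.0] * 100)
    mismatch_rejected = False
except ValueError:
    mismatch_rejected = True
```

The length check is where a CIFAR-10 student and a CIFAR-100 teacher would fail: there is no class-by-class correspondence over which to compare predictions.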

Our solution: Feature Kernel Distillation

To achieve our aim, we turn to NN feature space and take the perspective of NNs as data-dependent kernel machines using the Feature Kernel, which is the kernel induced by the inner product of feature representations:

Feature Kernel. Suppose we have a Neural Network with an NN feature extractor that is fed into a linear layer. For two inputs, the feature kernel is the kernel defined by the inner product of their feature representations, that is, of the feature extractor’s outputs on the two inputs.
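As a small illustration of the definition, the sketch below computes feature kernel values for a batch of inputs. The feature vectors are made-up numbers standing in for the output of a feature extractor, and the helper names are hypothetical.

```python
def feature_kernel(h_x, h_y):
    """Feature kernel value: inner product of two feature vectors."""
    return sum(a * b for a, b in zip(h_x, h_y))


def kernel_matrix(features):
    """Gram matrix K[i][j] = <h(x_i), h(x_j)> for a batch of feature vectors."""
    return [[feature_kernel(hi, hj) for hj in features] for hi in features]


# Hypothetical feature-extractor outputs for three inputs (made-up numbers).
features = [
    [1.0, 0.0, 2.0],
    [0.5, 1.0, 0.0],
    [1.0, 0.0, 2.0],  # same features as the first input
]
K = kernel_matrix(features)
```

Inputs with similar features get a large kernel value (here the first and third inputs), which is why the kernel can be read as a similarity measure between inputs.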

Our main idea in FKD is to treat the teacher’s feature kernel as the key distillation target towards which we regularise the student’s feature kernel, as shown in Fig. 1. This yields our FKD loss function, Eq. (2).
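One way to picture this kind of regulariser is as a penalty on the gap between the student and teacher kernel matrices over a batch, added to the usual supervised loss. The sketch below is an assumption-laden illustration and not the exact form of Eq. (2): the squared-difference penalty, the `reg_strength` parameter and all helper names are hypothetical.

```python
def kernel_matrix(features):
    """Gram matrix of inner products between all pairs of feature vectors."""
    return [[sum(a * b for a, b in zip(hi, hj)) for hj in features]
            for hi in features]


def fkd_penalty(student_features, teacher_features):
    """Mean squared difference between student and teacher kernel matrices."""
    k_s = kernel_matrix(student_features)
    k_t = kernel_matrix(teacher_features)
    n = len(k_s)
    return sum((k_s[i][j] - k_t[i][j]) ** 2
               for i in range(n) for j in range(n)) / (n * n)


def total_loss(supervised_loss, student_features, teacher_features,
               reg_strength=1.0):
    """Supervised loss plus the kernel-matching regulariser (assumed form)."""
    return supervised_loss + reg_strength * fkd_penalty(student_features,
                                                        teacher_features)


# A batch of 3 inputs; student features are 2-d, teacher features are 4-d.
student = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
teacher_same = [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0], [1.0, 1.0, 0.0, 0.0]]
teacher_other = [[1.0, 0.0, 0.0, 0.0], [1.0, 0.0, 0.0, 0.0], [0.0, 0.0, 1.0, 0.0]]

matched = fkd_penalty(student, teacher_same)      # kernels agree
mismatched = fkd_penalty(student, teacher_other)  # kernels disagree
```

Both kernel matrices are batch-size by batch-size, so the comparison never involves the feature dimension or the number of classes of either model.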

We will now try to understand why the feature kernel is a sensible target for distillation. First, note that feature learning in NNs can be thought of as equivalent to NN feature kernel learning (Yang and Hu, 2020). More concretely, to see the importance of the feature kernel, consider a simple thought experiment: retraining only the last layer on squared error loss is exactly kernel regression with the feature kernel. That is to say, the feature kernel completely determines the retrained NN’s predictions!
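The thought experiment can be written out in a few lines. The notation is assumed for illustration and is not taken from the paper: $H \in \mathbb{R}^{n \times m}$ stacks the frozen features $h(x_i)$ of the $n$ training inputs, $y$ holds scalar targets, $K = H H^\top$ is the feature kernel matrix, and a small ridge term $\lambda > 0$ is added so that the inverses exist.

```latex
\begin{aligned}
\hat{w} &= \arg\min_{w}\; \lVert H w - y \rVert_2^2 + \lambda \lVert w \rVert_2^2
         = (H^\top H + \lambda I_m)^{-1} H^\top y \\
        &= H^\top (H H^\top + \lambda I_n)^{-1} y
         = H^\top (K + \lambda I_n)^{-1} y, \\
\hat{f}(x) &= h(x)^\top \hat{w}
         = k(x, X)\,(K + \lambda I_n)^{-1} y,
\qquad
k(x, X) = \big[\langle h(x), h(x_1) \rangle, \dots, \langle h(x), h(x_n) \rangle\big].
\end{aligned}
```

The retrained predictor depends on the features only through kernel evaluations, so two networks with the same feature kernel yield the same predictor after last-layer retraining. With vector-valued targets the same argument applies to each output column.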

We further lend credence to the importance of the feature kernel in Fig. 2, where the CIFAR-10 test predictions of a ResNet20 (He et al., 2016) trained with cross-entropy are compared to those of a model with: 1) a retrained last layer (hence sharing the feature kernel), and 2) an independent parameter initialisation.

We see that there is significantly more predictive agreement in the two models that share a feature kernel (left), compared to two models that were trained from independent initialisations (right). This finding indicates that: a) different initialisations bias the same architecture to learn different features, and b) the feature kernel (largely) determines a model’s test predictions.
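A comparison of this kind can be computed from two lists of predicted labels. The sketch below uses made-up predictions for eight test points and three classes; it only shows how a prediction confusion matrix and an agreement rate between two models are formed, and does not reproduce any numbers from Fig. 2.

```python
def prediction_confusion(preds_a, preds_b, num_classes):
    """counts[i][j] = number of inputs where model A predicts i and model B predicts j."""
    counts = [[0] * num_classes for _ in range(num_classes)]
    for a, b in zip(preds_a, preds_b):
        counts[a][b] += 1
    return counts


def agreement_rate(preds_a, preds_b):
    """Fraction of inputs on which the two models predict the same class."""
    return sum(a == b for a, b in zip(preds_a, preds_b)) / len(preds_a)


# Made-up predicted labels, for illustration only.
reference = [0, 1, 2, 2, 1, 0, 2, 1]
retrained_head = [0, 1, 2, 2, 1, 0, 2, 0]  # shares the feature kernel
independent = [0, 2, 2, 1, 1, 0, 0, 1]     # independent initialisation

shared_kernel_agreement = agreement_rate(reference, retrained_head)
independent_agreement = agreement_rate(reference, independent)
confusion = prediction_confusion(reference, independent, num_classes=3)
```

Mass on the diagonal of the confusion matrix corresponds to agreement, so a more diagonal matrix means the two models make more similar test predictions.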

Thus, our intuition in FKD is that different models with independent parameter initialisations will have learnt different features, and that the student can learn previously unlearnt features by comparing feature kernels to the teacher via our regularised loss in Eq. (2). Now, let’s formalise our intuitions.

Figure 2. CIFAR-10 test prediction confusion matrices between a fixed reference model and a model with: (left) retrained last layer, and (right) independent parameter initialisation.

Theoretical Analysis for FKD

To provide theoretical justification for FKD, we extend the framework of Allen-Zhu and Li (2020), which only considers vanilla KD. Our main theoretical result is outlined informally in the following theorem:

Theorem (Informal). There exists a classification setting and a single hidden-layer student NN architecture where standard gradient-based supervised training fails to generalise, but FKD with:

I. a same-architecture teacher improves student generalisation (self-distillation),

II. an ensemble of teachers, the more teachers the better the student generalises.

As FKD distils knowledge by comparing pairs of data, whereas vanilla KD compares a single data point across classes, this core difference is reflected throughout our theoretical analysis relative to Allen-Zhu and Li (2020). Below, we give a brief outline of how our proof works intuitively.

Proof intuition: We start off by considering how an NN’s feature kernel depends on its random parameter initialisation. Specifically, in a multi-view classification setting where each class has multiple identifying views or features, we prove that a trained model’s feature kernel is highly dependent on its random parameter initialisation. We show that the NN’s initialisation biases it to only learn a subset of all available features, in that the NN’s feature kernel recognises that similar input pairs share a feature (by taking a large feature kernel value, which acts as a similarity metric) only if said feature lies in the subset determined by the NN’s initialisation.

Next, we show that an ensemble model’s feature kernel learns the union of all features that the ensemble members have individually learnt. If the ensemble members were initialised independently, then this means that the ensemble teacher becomes more powerful as it grows in size. Finally, for any feature v that the teacher model has learnt but the student hasn’t, FKD’s regularisation allows the student to learn v by realising that input pairs sharing feature v are indeed similar and should have a large feature kernel value. Thus, the more features the teacher has learnt (e.g. if we have an ensemble teacher), the more features the student will learn with FKD.

Toy Example: Fig. 3 allows us to visualise the intuition for our theory better. Suppose we have a car class which may be identified by window or headlight features (left), but that the student model has only learnt to recognise the headlight feature to classify cars. Without distillation, the student won’t know that the two cars in Fig. 3 are similar, and moreover won’t generalise to the side-on car image with hidden headlights (right). However, if the teacher model has learnt the window feature, then FKD regularisation encourages the student to realise that the two car images in Fig. 3 are related, thereby learning the window feature and improving the student’s generalisation.

Figure 3. Toy visualisation of the multi-view data setting.
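The toy example can be mimicked with a deliberately crude model in which an image is a set of visible views and a model's features are indicators of the views it has learnt. This is a cartoon of the intuition and not the construction used in the proof; the indicator features, the averaging in `ensemble_kernel` and all names are assumptions.

```python
VIEWS = ["headlight", "window"]


def features(image_views, learnt_views):
    """Indicator features: 1.0 for each learnt view that is visible in the image."""
    return [1.0 if (v in learnt_views and v in image_views) else 0.0
            for v in VIEWS]


def kernel(img_a, img_b, learnt_views):
    """Feature kernel value between two images for a model with the given learnt views."""
    fa = features(img_a, learnt_views)
    fb = features(img_b, learnt_views)
    return sum(a * b for a, b in zip(fa, fb))


def ensemble_kernel(img_a, img_b, members):
    """Average of the member kernels (concatenated member features, rescaled)."""
    return sum(kernel(img_a, img_b, m) for m in members) / len(members)


front_car = {"headlight", "window"}
side_car = {"window"}  # headlights hidden

student = {"headlight"}  # has only learnt the headlight view
teacher = {"window"}     # has learnt the window view

k_student = kernel(front_car, side_car, student)
k_teacher = kernel(front_car, side_car, teacher)
k_ensemble = ensemble_kernel(front_car, side_car, [student, teacher])
```

The student's kernel sees no similarity between the two car images while the teacher's does, so matching the teacher's kernel pushes the student towards features that respond to the window view. The ensemble kernel is non-zero whenever any member has learnt a shared view, which mirrors the union-of-features statement above.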

FKD in Practice

Like FKD, previous works have considered notions of “similarity” or “relation” between data-points to distil knowledge from a teacher NN model (Park et al., 2019; Tung and Mori, 2019). We use our theory to motivate practical implementation details that allow FKD to outperform these previous approaches. Namely, we propose to use: i) feature correlation kernels, as we only want to learn features that are shared between datapoints, and ii) feature regularisation, in order to control the size of our feature extractor and kernel. We conduct ablations in our experiments to verify that our implementation details do help to improve FKD in practice. In Alg. 1, we provide PyTorch-style (Paszke et al., 2019) pseudocode for our FKD implementation.

Algorithm 1. PyTorch-style pseudocode for FKD.
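The following is a rough sketch of how the two implementation details could fit together, written in plain Python so that it runs without dependencies. It is not a reproduction of Alg. 1: reading "correlation kernel" as the kernel of norm-normalised features, penalising the mean squared feature norm, and the weights `kernel_weight` and `feature_weight` are all assumptions.

```python
import math


def normalise(feature, eps=1e-12):
    """Scale a feature vector to unit Euclidean norm (eps guards against zero vectors)."""
    norm = math.sqrt(sum(a * a for a in feature))
    return [a / max(norm, eps) for a in feature]


def correlation_kernel_matrix(features):
    """Kernel matrix of unit-normalised features, so every diagonal entry is 1."""
    unit = [normalise(f) for f in features]
    return [[sum(a * b for a, b in zip(u, v)) for v in unit] for u in unit]


def feature_norm_penalty(features):
    """Mean squared norm of the raw features, used to control their size."""
    return sum(sum(a * a for a in f) for f in features) / len(features)


def fkd_regulariser(student_features, teacher_features,
                    kernel_weight=1.0, feature_weight=0.01):
    """Kernel-matching term on correlation kernels plus a feature-size term."""
    k_s = correlation_kernel_matrix(student_features)
    k_t = correlation_kernel_matrix(teacher_features)
    n = len(k_s)
    kernel_term = sum((k_s[i][j] - k_t[i][j]) ** 2
                      for i in range(n) for j in range(n)) / (n * n)
    return (kernel_weight * kernel_term
            + feature_weight * feature_norm_penalty(student_features))


# Student and teacher features differ in scale and dimension but point the same way.
student = [[3.0, 4.0], [6.0, 8.0]]
teacher = [[1.0, 0.0, 0.0], [2.0, 0.0, 0.0]]
value = fkd_regulariser(student, teacher)
```

In this example the correlation kernels coincide, so the kernel term is negligible and only the feature-size term contributes. That separation is the point of the design: the normalised kernel compares which inputs share features, while the norm penalty is left to control the scale.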

Experiments

In our paper, we show that FKD outperforms related methods and matches more sophisticated approaches (Tian et al., 2020) across a variety of distillation settings and applications. To keep things concise, we will only present a few highlights here:

Ensemble Distillation

Fig. 4 verifies our theory, which suggests that larger ensemble teacher size, E, further improves FKD student performance. In this setting, we use VGG8 (Simonyan and Zisserman, 2014) for all student & teacher networks on the CIFAR-100 dataset. In Fig. 4, we also plot the test accuracy of the ensemble teacher of size E, whose predictive probabilities are averaged over individual teachers, as well as the test accuracy of an undistilled student model. We see that FKD (purple) consistently outperforms vanilla KD (green), and both distillation methods outperform the teacher in the ‘self-distillation’ setting of E=1 (Furlanello et al., 2018; Zhang et al., 2019). Moreover, FKD allows a single student to match the teacher when E=2, before positive but diminishing returns with larger E relative to the teacher ensemble.

Figure 4. FKD as teacher ensemble size changes. Error bars denote 95% confidence for mean of 10 runs.
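For reference, averaging predictive probabilities over ensemble members, as done for the ensemble teacher baseline, can be sketched as follows. The probabilities are made-up numbers for one input and three classes, and the helper names are hypothetical.

```python
def ensemble_probabilities(member_probs):
    """Average per-class probabilities over ensemble members for a single input."""
    num_members = len(member_probs)
    num_classes = len(member_probs[0])
    return [sum(p[c] for p in member_probs) / num_members
            for c in range(num_classes)]


def predict(probs):
    """Index of the most probable class."""
    return max(range(len(probs)), key=lambda c: probs[c])


# Made-up predictive probabilities from E=3 teachers for one input.
member_probs = [
    [0.6, 0.3, 0.1],
    [0.2, 0.5, 0.3],
    [0.1, 0.6, 0.3],
]
averaged = ensemble_probabilities(member_probs)
prediction = predict(averaged)
```

Here the first teacher alone would predict class 0, while the averaged ensemble predicts class 1.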

Dataset Transfer

Table 1. Transfer distillation test accuracies (%) where teacher and student models are trained on different datasets. RKD (Park et al. 2019) and SP (Tung and Mori 2019) are previous feature-based distillation approaches. C-10, C-100 and Tiny-I are shorthand for CIFAR-10, CIFAR-100 and Tiny-ImageNet respectively. Error bars denote 95% confidence for mean of 10 runs.

Recall that one motivation for FKD was to provide a principled distillation method that is agnostic to the teacher and student prediction spaces, unlike vanilla KD (Hinton et al. 2015). This is especially pertinent when we wish to transfer knowledge across datasets, which is the setting of Table 1. From a fixed VGG13 teacher network trained on CIFAR-100, we distil to student VGG8 NNs on CIFAR-10, STL-10 & Tiny-ImageNet. As no student dataset has 100 classes, unlike CIFAR-100, it is not clear how one can use vanilla KD (Hinton et al., 2015) in this case. We thus compare FKD to other feature kernel based KD methods: Relational KD (RKD) (Park et al., 2019) & Similarity-Preserving (SP) KD (Tung and Mori, 2019). In Table 1, we see that across transferred datasets, FKD significantly outperforms an undistilled student as well as related methods.

Comparison on CIFAR-100 and ImageNet

Finally, we compare FKD to various knowledge distillation baselines on larger-scale settings of CIFAR-100 and ImageNet, across a selection of teacher/student architectures. Table 2 shows us that FKD consistently outperforms vanilla KD (Hinton et al., 2015), RKD (Park et al., 2019), and SP (Tung and Mori, 2019). Moreover, FKD either matches or outperforms the high-performing Contrastive Representation Distillation (Tian et al., 2020).

Table 2. CIFAR-100 and ImageNet-1K accuracies (%) comparing FKD with KD baselines. Error bars denote 95% confidence for the mean over 5 students.

Conclusion

Although deep learning has greatly advanced our ability to process large-scale complex data, there are still many poorly understood phenomena regarding Neural Networks (NNs) and hence a dearth of principled approaches for maximising NN performance. Our proposed Feature Kernel Distillation (FKD) provides a theoretically justified distillation method that is agnostic to prediction space, motivated by the importance of feature learning in NNs. As suggested by our experimental analysis, we hope that FKD can be utilised in practice to enhance the capabilities of deep learning applied across different walks of life.

Publication

Our paper “Feature Kernel Distillation” will appear at The 10th International Conference on Learning Representations (ICLR), 2022.

Link to the paper

https://openreview.net/pdf?id=tBIQEvApZK5

References

Zeyuan Allen-Zhu and Yuanzhi Li. Towards understanding ensemble, knowledge distillation and self-distillation in deep learning. arXiv preprint arXiv:2012.09816, 2020.

Cristian Bucilua, Rich Caruana, and Alexandru Niculescu-Mizil. Model compression. In Proceedings of the 12th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, pp. 535–541, 2006.

Defang Chen, Jian-Ping Mei, Yuan Zhang, Can Wang, Zhe Wang, Yan Feng, and Chun Chen. Cross-layer distillation with semantic calibration. In Proceedings of the AAAI Conference on Artificial Intelligence, pp. 7028–7036, 2021.

Tommaso Furlanello, Zachary Lipton, Michael Tschannen, Laurent Itti, and Anima Anandkumar. Born again neural networks. In International Conference on Machine Learning, pp. 1607–1616. PMLR, 2018.

Kaiming He, Xiangyu Zhang, Shaoqing Ren, and Jian Sun. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 770–778, 2016.

Geoffrey Hinton, Oriol Vinyals, and Jeff Dean. Distilling the knowledge in a neural network. arXiv preprint arXiv:1503.02531, 2015.

Wonpyo Park, Dongju Kim, Yan Lu, and Minsu Cho. Relational knowledge distillation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 3967–3976, 2019.

Adam Paszke et al. PyTorch: An imperative style, high-performance deep learning library. In Advances in Neural Information Processing Systems 32, 2019.

Karen Simonyan and Andrew Zisserman. Very deep convolutional networks for large-scale image recognition. arXiv preprint arXiv:1409.1556, 2014.

Yonglong Tian, Dilip Krishnan, and Phillip Isola. Contrastive representation distillation. In International Conference on Learning Representations, 2020.

Frederick Tung and Greg Mori. Similarity-preserving knowledge distillation. In Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 1365–1374, 2019.

Greg Yang and Edward J Hu. Feature learning in infinite-width neural networks. arXiv preprint arXiv:2011.14522, 2020.

Linfeng Zhang, Jiebo Song, Anni Gao, Jingwei Chen, Chenglong Bao, and Kaisheng Ma. Be your own teacher: Improve the performance of convolutional neural networks via self distillation. In Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 3713–3722, 2019.