
Role of Standards in AI for Wireless

Introduction

We are living in the era of voice assistants that understand what we say and chatbots that engage us in text conversations. In areas like natural language processing and computer vision, AI has made great strides, and the progress is visible in our daily lives.

It was perhaps intuitive to imagine a role for AI in mimicking intelligent human functions like vision, speech and reading. A pertinent question being asked of late is whether AI has a role in communication networks like 4G and 5G. Extending the logic that AI can mimic an equivalent human function, we can easily foresee that manual tasks in communication networks, such as network maintenance, network health monitoring, and fault detection and recovery, are all straightforward candidates for AI.

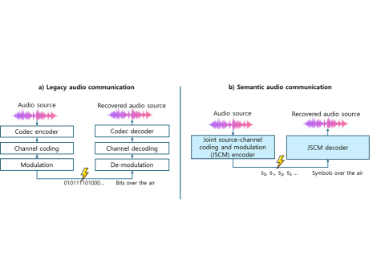

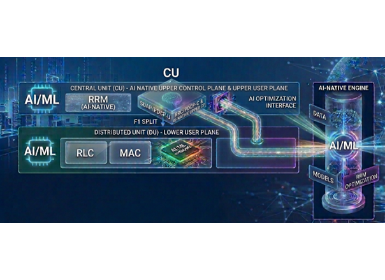

However, it is harder to imagine a role for AI in the wireless communication domain, because that domain is far removed from any routine human function. Wireless radio transmitters and receivers (those found in mobile handsets, cellular towers, fixed wireless terminals, etc.) are in fact a set of elegant mathematical functions built into hardware and firmware. These functions make information exchange over the airwaves possible between terminals and the network in diverse environments (Fig. 1). They go by names like channel coding, source coding, channel estimation, channel equalization and power control, and they are bound by rigid protocols pre-defined and agreed upon by all industry stakeholders. These protocols go by names like MAC (Medium Access Control), RRC (Radio Resource Control), MM (Mobility Management) and SM (Session Management) (Fig. 2).

Figure 1. Example Transmit and Receive functions that enable Wireless Communication

Wireless systems from 1G to 5G have been built with the help of these functions and protocols and have been widely successful. Standards bodies like 3GPP and IEEE have succeeded in bringing industry stakeholders together and in specifying the functions and protocols such that all networks and terminals implementing those standards are interoperable. (In this article, “specifying” and “standardizing” are used interchangeably.)

Standards capture the mutually agreed “who, what, when, how” (let us refer to it as W3H standardization hereafter) of every function in the communication system, i.e. which entity should do what, at what time, and in what manner. The exact implementation of a specific function is usually left to vendors as long as they adhere to the agreed W3H. The minimum performance expected from each vendor implementation can optionally be “specified” by the standards bodies, and it must be met where specified. This may sound quite restrictive, but it is what has ensured truly interoperable deployments across the world that comply with global and local regulations. Traditionally, companies develop proprietary IP so that their implementations beat the minimum performance requirements imposed by the standards.

Figure 2. Example Protocols in UE and Network that enable Wireless Communication

With this background, the questions this article tries to address are whether we need AI in wireless communication and to what extent its usage should be standardized.

Let us first establish the purpose of AI in Wireless communication. What are we trying to optimize/fix/solve with AI?

Motivation for AI-in-Wireless

The two dimensions which are in need of constant innovation are:

(1) Spectral efficiency: All wireless communication is built on the shared and moderated/contested use of a limited resource called spectrum. The licensable spectrum used to build reliable communication systems is always in short supply and hence very precious. So any technique that can further optimize the utilization of this shared resource will be welcome.

(2) Energy efficiency: Terminals and networks consume enormous amounts of energy in negotiating the use of the limited resources in the system. Here energy is understood broadly to include the computational power and storage space needed to run the system. Any technology that can minimize the memory or processing required to operate the system will be of great benefit to all.

Existing communication systems, based on mathematical models, have ensured reasonably good spectral and energy efficiency, which is why we have had a successful evolution from 1G to 5G. However, as communication systems become more complex and distributed, it will become increasingly difficult to fit all the system variables and their nonlinearities into a mathematical function and expect it to behave optimally in all deployment conditions. Thus there are reasonable grounds to believe that applying AI will absorb this complexity better and help improve spectral efficiency, energy efficiency, or both.

Which specific functional blocks and protocols in the system can benefit from AI is a topic of active research worldwide and is far from settled. It is possible that some traditional functions and protocols will retain an edge over their AI equivalents, whereas others may be considerably simplified with AI assistance. It will take a few years of research to identify the appropriate uses of AI in wireless terminals and networks.

So for the moment let us assume AI will indeed improve the spectral efficiency or energy efficiency of at least some functions and protocols and hence is a welcome addition.

Role of Standards

Now let us come back to the question of whether each specific application of AI in wireless communication needs to be standardized and, if so, how much of it has to be specified.

Ground Rules:

Given the motivations for AI-in-Wireless, let me define some ground rules that help in formulating the role and extent of standards while incorporating AI.

1. Application of AI can be agreed for one of the following purposes:

- Improve the spectral efficiency of a function or a protocol in the existing system over the conventional mechanism, optionally trading off some energy efficiency.

- Improve the energy efficiency of a function or process whilst ensuring at least similar spectral efficiency as that of the conventional mechanism.

2. In general, it should be ensured that an improvement in one function does not come at the expense of another function. For example, a mobile handset can employ AI to secure a better network experience as long as it does not harm another handset’s fair prospects.

3. It should be possible to trade off the performance of a lower-impact function to improve the performance of a higher-impact function. For example, a low-power sensor might be allowed to reduce its energy consumption even if that increases the energy drain on another entity that is not power limited.
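The ground rules above can be read as an acceptance check on a proposed AI application. The following is only a minimal sketch of that reading, not a standardized procedure: the `Metrics` type, the `tolerance` parameter (one way to interpret "at least similar" spectral efficiency), the units, and the idea of a pre-agreed `tradeable` set of lower-impact entities are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Set


@dataclass(frozen=True)
class Metrics:
    """Efficiency of one function or protocol; higher is better for both."""
    spectral: float  # assumed unit: bit/s/Hz
    energy: float    # assumed unit: bits per joule


def agreed_purpose(baseline: Metrics, candidate: Metrics,
                   tolerance: float = 0.0) -> Optional[str]:
    """Rule 1: name the purpose the AI candidate serves, or return None."""
    if candidate.spectral > baseline.spectral:
        # A spectral gain qualifies even if some energy efficiency is traded off.
        return "spectral"
    # "At least similar" spectral efficiency, within a hypothetical tolerance.
    similar_spectral = candidate.spectral >= baseline.spectral * (1.0 - tolerance)
    if candidate.energy > baseline.energy and similar_spectral:
        return "energy"
    return None


def side_effects_acceptable(impact_on_others: Dict[str, float],
                            tradeable: Set[str]) -> bool:
    """Rules 2 and 3: a negative impact on another entity is acceptable
    only if that entity was agreed to be lower impact (tradeable)."""
    return all(delta >= 0 or name in tradeable
               for name, delta in impact_on_others.items())
```

With a conventional baseline of `Metrics(4.0, 1.0)`, a candidate of `Metrics(4.0, 1.3)` would qualify under the energy purpose, while a candidate that harms a neighbouring handset would fail the second check unless that handset had been listed as tradeable.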

Following the above ground rules, once an AI application has been identified, the next consideration is to what extent it should be standardized.

Case: Allow Proprietary Implementations:

If an AI application at an entity (say a handset or a network node) neither harms the fair prospects of other entities nor imposes additional power or performance costs on them, it should be left as vendor implementation specific. As long as the minimum performance requirements set in the standards are met, vendors need not even disclose the nature of their implementation, AI or otherwise. Businesses would like to use AI to differentiate their offerings from others’, and hence too much rigidity in the standards around AI will stifle their innovation.

Case: Mandate W3H standardization:

On the other hand, there will be AI applications running in tandem across diverse entities. In such cases, the entities involved need to exchange relevant information with each other. For example, if a network is using ML to optimize the neighbour cell measurements of terminals and a terminal is aware of this, the terminal can notify the network when it detects any anomalies. Hence, for such cases, it is important to standardize the signalling exchanges between the parties. The signalling should enable each party to convey its usage or non-usage of AI for a specific function, along with any “meta-data” that can be shared to ensure mutual benefit.

In case of anomalies, an entity should be able to request the other party to disable its AI mechanism and fall back to the conventional mechanism. This may imply that, until a desired level of performance and reliability is reached, a device must support both the legacy mechanism and the ML-based one. That can be an unreasonable expectation, especially for memory- and power-constrained devices. Hence there should be a means for those devices to download new models from remote servers. In such cases, each party should be able to reset the AI/ML model state on the other party so that it falls back to a known state.
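The signalling described in this section can be sketched in a few lines. This is only an illustration under stated assumptions: the message names (`AI_USAGE_INDICATION`, `FALLBACK_REQUEST`, `MODEL_RESET_REQUEST`), the field names, and the `Entity` class are hypothetical and do not correspond to any existing 3GPP or IEEE message.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, Optional

# Hypothetical message types, invented for this sketch.
FALLBACK_REQUEST = "FALLBACK_REQUEST"
MODEL_RESET_REQUEST = "MODEL_RESET_REQUEST"


@dataclass
class FunctionState:
    """Per-function AI state held by one entity."""
    ai_enabled: bool = False
    model_version: Optional[str] = None
    metadata: Dict[str, Any] = field(default_factory=dict)


class Entity:
    """A handset or network node that can signal its AI usage to a peer."""

    def __init__(self, name: str, known_good_model: str) -> None:
        self.name = name
        self.known_good_model = known_good_model  # the agreed "known state"
        self.functions: Dict[str, FunctionState] = {}

    def enable_ai(self, function: str, model_version: str,
                  metadata: Optional[Dict[str, Any]] = None) -> None:
        self.functions[function] = FunctionState(
            True, model_version, dict(metadata or {}))

    def usage_indication(self, function: str) -> Dict[str, Any]:
        """Convey usage or non-usage of AI for a function, plus metadata."""
        state = self.functions.get(function, FunctionState())
        return {"type": "AI_USAGE_INDICATION", "sender": self.name,
                "function": function, "ai_enabled": state.ai_enabled,
                "model_version": state.model_version,
                "metadata": dict(state.metadata)}

    def handle(self, message: Dict[str, Any]) -> Dict[str, Any]:
        """Apply a peer's request and answer with the updated indication."""
        function = message["function"]
        state = self.functions.setdefault(function, FunctionState())
        if message["type"] == FALLBACK_REQUEST:
            state.ai_enabled = False  # fall back to the conventional mechanism
        elif message["type"] == MODEL_RESET_REQUEST:
            state.model_version = self.known_good_model
            state.metadata.clear()
        else:
            raise ValueError("unsupported message type: %r" % message["type"])
        return self.usage_indication(function)
```

In this sketch a terminal that detects an anomaly would send a `FALLBACK_REQUEST` for the affected function, and the reply tells it that the network has switched back to the conventional mechanism. Keeping the reset as a separate request reflects the text's distinction between disabling AI and returning a model to a known state.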

Need for Standardized Data Sets:

Deep learning models need to be trained off-line to high accuracy before they can be enabled in a live system. There should be standardized training vectors and test vectors (made available either globally through 3GPP or by each telecom operator) that can be used to ensure that a minimum common performance benchmark is met before a model is allowed to be enabled in a live system. Without such common, verifiable performance benchmarks, inferior ML models may flood the network and harm the entire system.
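A benchmark gate of this kind might look like the sketch below. It assumes, purely for illustration, that a model is any callable mapping an input vector to a label, that the benchmark metric is plain accuracy, and that the threshold `min_accuracy` is set by whoever publishes the test vectors; the function names and the toy data are hypothetical.

```python
from typing import Callable, Sequence, Tuple

# One standardized test vector: (input samples, expected label).
TestVector = Tuple[Sequence[float], int]
Model = Callable[[Sequence[float]], int]


def accuracy(model: Model, vectors: Sequence[TestVector]) -> float:
    """Fraction of test vectors for which the model returns the expected label."""
    if not vectors:
        raise ValueError("at least one test vector is required")
    correct = sum(1 for inputs, expected in vectors if model(inputs) == expected)
    return correct / len(vectors)


def may_be_enabled(model: Model, vectors: Sequence[TestVector],
                   min_accuracy: float) -> bool:
    """Gate: allow the model into the live system only if it meets the benchmark."""
    return accuracy(model, vectors) >= min_accuracy


# Toy test vectors, invented for this example.
TOY_VECTORS = [([0.9], 1), ([0.2], 0), ([0.7], 1), ([0.4], 0)]


def threshold_model(inputs: Sequence[float]) -> int:
    """A stand-in for a trained model: labels 1 when the sample exceeds 0.5."""
    return int(inputs[0] > 0.5)


def constant_model(inputs: Sequence[float]) -> int:
    """A deliberately poor model that always answers 1."""
    return 1
```

On the toy vectors, `threshold_model` gets every case right and passes a 0.9 threshold, whereas `constant_model` scores 0.5 and is rejected. A real benchmark would likely use task-specific metrics rather than plain accuracy.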

Conclusion:

AI/ML enthusiasts may wonder whether AI can survive in the “standardized world” of wireless communication. At the end of the day, spectrum and energy are two precious resources, so AI-based innovation should be allowed to help optimize their usage. However, its success will depend on making it attractive enough for proprietary innovation while regulating it to ensure interoperability and the service guarantees that users subscribe to. It is a long journey ahead, and by starting on a strong foundation with a concrete framework, AI adoption can grow robustly in the wireless world.

Disclaimer:

The views and opinions expressed in this article are solely those of the author. These views and opinions do not necessarily represent those of Samsung Research and its affiliates.