
From Digital Twin to Live Network: Sim2Real for Network Optimization

1. Introduction

AI (Artificial Intelligence)-based network optimization depends fundamentally on data. In many AI problems, good performance can be achieved by collecting enough samples over the operating range of the target system. In commercial mobile networks, however, this is difficult. Because a live network continuously serves users, its network configuration, namely CM (Configuration Management) parameters, cannot be explored broadly for the sole purpose of data collection. As a result, most commercial data is gathered within a narrow range of safe operating conditions.

This creates a fundamental challenge for AI-based parameter optimization. A model trained only on commercial data may perform well within the region it has already observed, yet remain unreliable when asked to evaluate new CM candidates outside that region. This is especially problematic in network optimization, where the goal is not only to predict KPI (Key Performance Indicator) under known conditions, but also to compare candidate control actions and identify better configurations.

A Digital Twin offers a practical way to overcome this limitation. By providing a controllable network environment, it enables broader exploration of CM-response patterns than is possible in live operation. This makes it possible to generate synthetic data that complements the narrow coverage of commercial observations. At the same time, the Digital Twin alone is not sufficient, because it cannot exactly reproduce every aspect of a commercial network.

For this reason, our framework combines Digital Twin-based data generation with Sim2Real transfer. The Digital Twin is used to learn broad control-response behavior, while commercial data is used to adapt that knowledge to the characteristics of the real network.

This blog post presents the framework in five parts. It begins by explaining why commercial data alone is insufficient for AI-based parameter optimization, then describes how a Digital Twin can generate controllable synthetic data over a broader operating space and how Sim2Real transfer can make that knowledge useful in the commercial environment. Finally, it summarizes field-trial results from deployment in a live network and discusses their implications for future AI-based network operation.

2. Why Sim2Real?

For AI-based network optimization, the learning target is not simply a static mapping from input to output. What the model must learn is a response rule: how KPI changes when CM changes under a given network environment. This makes the problem fundamentally different from ordinary prediction tasks, where future inputs are often assumed to stay within the same distribution as the training dataset. In network optimization, by contrast, the model is frequently asked to evaluate CM candidates that are sparsely represented, or not represented at all, in commercial data. As a result, model quality depends not only on interpolation within the observed region, but also on learning how KPI responds to CM changes across a sufficiently broad range of conditions.

Commercial-only AI modeling is often insufficient for this purpose. In a live network, CM exploration is inherently restricted: operators cannot freely change network parameters solely for training purposes, as even small changes may affect service quality or operational stability. Most commercial data is therefore collected around a default policy or a narrow set of validated configurations. In addition, KPI in commercial networks is influenced by many external factors, such as radio conditions, traffic load, mobility, and interference. When CM variation is narrow but environmental variation is large, it becomes difficult to isolate the true effect of CM on KPI.

Because of this, commercial-only learning faces a structural limitation. A model trained only on commercial observations may capture correlations within the recorded operating region, but it cannot reliably predict the effect of unseen CM values under a given network environment. If such a model is used directly for optimization, the CM recommendation step becomes weak, because the model cannot confidently judge whether a new CM candidate will improve or degrade KPI. This is why learning from commercial data alone is often insufficient for practical network control.
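To make this limitation concrete, the toy sketch below fits the same quadratic KPI model to two datasets: one sampled from a narrow, "safe" CM range, as in commercial operation, and one from a broad sweep, as a Digital Twin allows. The peaked KPI curve, the CM range, and the function names (`true_kpi`, `fit_and_recommend`) are hypothetical illustrations, not network data or the authors' model.

```python
import numpy as np

def true_kpi(cm):
    """Hypothetical KPI-vs-CM response with a single peak at cm = 6 (toy, not real data)."""
    return np.exp(-((cm - 6.0) ** 2) / 8.0)

def fit_and_recommend(cm_train, candidates, degree=2):
    """Fit a polynomial KPI model on the given CM samples, then pick the
    candidate with the highest predicted KPI."""
    coeffs = np.polyfit(cm_train, true_kpi(cm_train), degree)
    predicted = np.polyval(coeffs, candidates)
    best = int(np.argmax(predicted))
    return candidates[best], predicted[best]

candidates = np.linspace(0.0, 10.0, 101)  # CM values we might want to apply
narrow_cm = np.linspace(2.0, 3.0, 50)     # "commercial": narrow, safe operating range
broad_cm = np.linspace(0.0, 10.0, 50)     # "Digital Twin": broad, controlled sweep

for name, cm_train in [("narrow", narrow_cm), ("broad", broad_cm)]:
    best, pred = fit_and_recommend(cm_train, candidates)
    print(f"{name:>6}: recommend cm={best:.1f}  "
          f"predicted KPI={pred:.2f}  true KPI={true_kpi(best):.2f}")
```

In this toy, the narrow-range fit only sees the convex flank of the curve, so it extrapolates upward, recommends the edge of the candidate range, and predicts a KPI far above the true value there. The broad fit places its optimum near the true peak.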

A Digital Twin addresses this limitation by expanding the range of observable CM-response pairs. Within a simulator, CM can be varied systematically under controlled conditions, and the resulting network performance and KPI responses can be collected at scale. This allows the training set to include combinations that are absent from commercial data and provides controlled diversity over the action space. In that sense, the Digital Twin helps the model learn how KPI responds when CM changes, rather than merely fitting the narrow operating region recorded in the live network.

Still, broader synthetic coverage is not the final goal. The final goal is deployment in the commercial network, and this introduces a second challenge: domain gap. Even when a Digital Twin is carefully designed and calibrated with commercial inputs, the generated data does not exactly match live-network observations. Differences in value distribution, calibration error, implementation details, and scenario composition remain unavoidable. A model trained only on synthetic data may therefore perform well in simulation while failing to generalize reliably in the field.

This is where Sim2Real becomes essential. The key question is not whether synthetic data can perfectly reproduce commercial data, but whether the simulator captures useful response behavior that remains valid after alignment. In our framework, the main assumption is that under similar state conditions, the direction and relative pattern of KPI change induced by CM still contain transferable information, even when the absolute values differ between the Digital Twin and the commercial network. Simulation is therefore used to learn how CM influences KPI, while commercial data is used to adapt that learned behavior to real operating characteristics.
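A minimal numerical illustration of this assumption, under a deliberately simple hypothetical domain gap: the "commercial" KPI curve is the simulated curve rescaled and shifted. Raw values then disagree by a large MAPE, yet the direction of KPI change between neighboring CM values and the best CM agree. Real domain gaps are not this benign; the sketch (with hypothetical `sim_kpi` and `real_kpi` curves) only shows why value mismatch and transferable response behavior are separate questions.

```python
import numpy as np

cm = np.linspace(0.0, 10.0, 21)  # candidate CM values (hypothetical)

def sim_kpi(c):
    """Hypothetical Digital Twin KPI response: a single peak at c = 6."""
    return 100.0 * np.exp(-((c - 6.0) ** 2) / 8.0)

def real_kpi(c):
    """Hypothetical commercial response: same shape, different scale and offset
    (standing in for calibration error, traffic mix, and similar effects)."""
    return 0.6 * sim_kpi(c) + 15.0

sim, real = sim_kpi(cm), real_kpi(cm)

# Absolute values disagree substantially ...
mape = 100.0 * np.mean(np.abs(sim - real) / np.abs(real))

# ... but the direction of KPI change between neighboring CM values agrees,
# and both domains rank the same CM value highest.
direction_agreement = np.mean(np.sign(np.diff(sim)) == np.sign(np.diff(real)))
same_best = cm[np.argmax(sim)] == cm[np.argmax(real)]

print(f"MAPE sim vs real: {mape:.1f}%")
print(f"direction agreement: {direction_agreement:.0%}, same best CM: {same_best}")
```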

Based on this idea, our framework combines two connected components. The first is a Digital Twin that generates broad CM-response coverage beyond what can be safely explored in commercial operation. The second is a Sim2Real transfer process that preserves the useful control behavior learned in simulation while updating the part needed for real deployment. Together, they address the two core limitations of commercial-only AI modeling: narrow CM coverage and the gap between training data and deployment conditions.

3. Network Sim2Real-Transfer

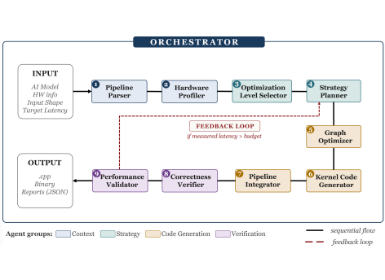

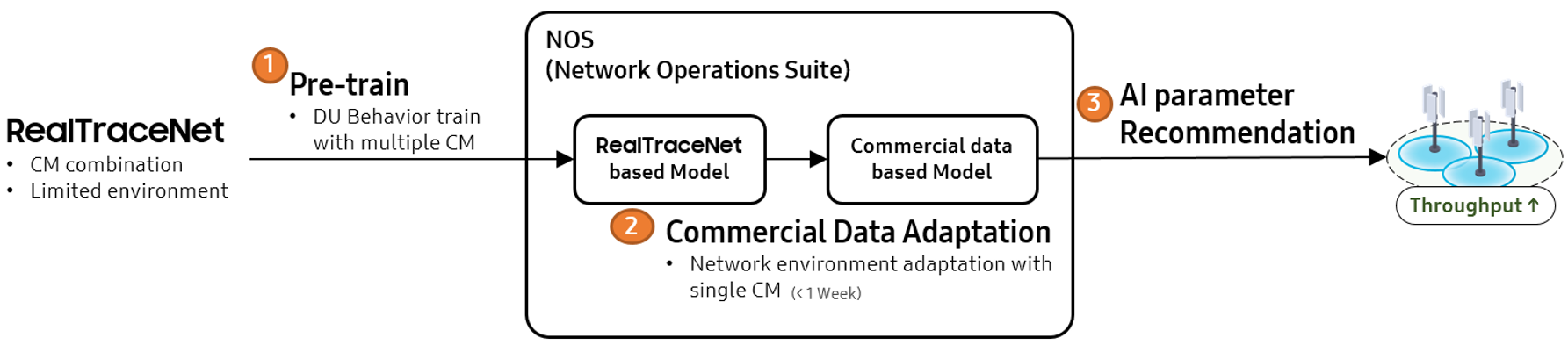

Figure 1. Network Sim2Real-Transfer flow

The Network Sim2Real-Transfer framework is built around two connected components: a Digital Twin and an AI model. As shown in Figure 1, the framework begins by selecting a target region and KPI, after which the Digital Twin generates CM-response data across diverse network environments. This stage addresses the first practical challenge of commercial-network learning: the lack of broad and controllable CM coverage in live networks. By systematically generating data across candidate CM settings, the Digital Twin provides response patterns that are difficult to observe directly in the field.

The second stage is handled by the AI model, which uses the simulated data together with commercial data to perform Sim2Real transfer and learn a CM selection policy that can be applied to the commercial network. This stage addresses the second core challenge: the gap between simulated and real network behavior. Rather than using simulation output as-is, the model aligns the transferable CM-response knowledge learned in the Digital Twin with the operating characteristics of the commercial network, and then applies the recommended CM to the commercial BS (Base Station).

3.1 Digital Twin: RealTraceNet

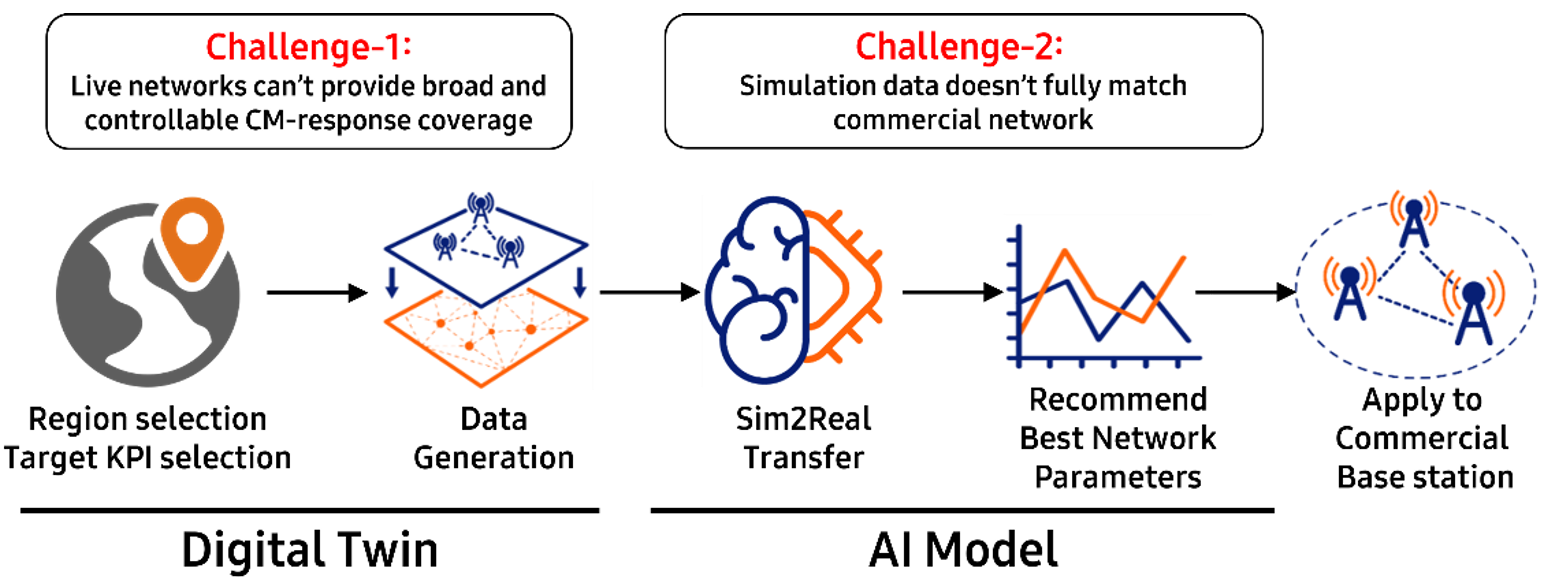

The performance of data-driven network optimization is fundamentally constrained by the diversity of training data. In commercial networks, however, collecting data for rare or extreme network environments, or through broad exploration of potentially risky CM settings, is often infeasible. To address this limitation, we develop RealTraceNet, a high-fidelity network simulator designed to generate realistic training data beyond what can be safely observed in the field. As illustrated in Figure 2, its fidelity comes from combining real BS equipment serving as the vDU (virtualized Distributed Unit), in-house virtualized UE (User Equipment) components designed to behave like commercial devices, and a Replicator module that injects site-specific real-world characteristics into the simulator.

Figure 2. The RealTraceNet overview

The Replicator exploits contextual features from various public data sources to configure two key components for site-specific realism: the channel and UE behavior. For channel generation, RealTraceNet adopts a stochastic model based on 3GPP TR 38.901 [1], which enables fast large-scale data generation while preserving the standardized channel structure. Because the standardized scenarios are too limited to fully represent a target site, we estimate site-specific channel parameters through virtual soundings. Parameters such as path gain ($g$), time delay ($\tau$), and angles of arrival and departure ($\phi$, $\eta$) are estimated as follows:

$$(g, \tau, \phi, \eta) = \mathcal{S}\big(\{\Psi_l\}\big)$$

where $\mathcal{S}$ represents a statistical aggregation process and $\Psi_l$ denotes the $l$-th individual wave radiation observed during a sounding, which is determined by nearby material properties (e.g., permittivity, permeability, conductivity, edge geometry, and roughness). As the virtual sounder, we adopt NVIDIA Sionna RT (Ray Tracing) [2], a differentiable ray-tracing simulator that reflects real-world geography. In addition, geographic reliability is improved not only with mobile-device logs such as SINR (Signal to Interference plus Noise Ratio), which capture the effect of real propagation and radiation patterns, but also with commercial BS operational data, so that the virtual environment can be calibrated more consistently to commercial-network observations. Material properties in the virtual sounder are then tuned until the simulated results closely approximate the target data.
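The text does not specify the statistical aggregation $\mathcal{S}$. As one plausible choice, the sketch below reduces the rays of a single virtual sounding to channel-level statistics: total path gain, power-weighted mean delay, RMS delay spread, and power-weighted circular means of the arrival and departure angles. The function name `aggregate_rays`, its inputs, and the statistics chosen are assumptions for illustration; Sionna RT itself is not called here.

```python
import numpy as np

def aggregate_rays(power, delay_s, aoa_rad, aod_rad):
    """Hypothetical statistical aggregation S over the rays of one virtual sounding.

    power   : linear received power of each ray
    delay_s : propagation delay of each ray, in seconds
    aoa_rad : azimuth angle of arrival of each ray, in radians
    aod_rad : azimuth angle of departure of each ray, in radians
    """
    power = np.asarray(power, dtype=float)
    delay_s = np.asarray(delay_s, dtype=float)
    w = power / power.sum()                                   # per-ray power weights
    g = power.sum()                                           # total path gain (linear)
    tau_mean = np.sum(w * delay_s)                            # power-weighted mean delay
    tau_rms = np.sqrt(np.sum(w * (delay_s - tau_mean) ** 2))  # RMS delay spread
    # Power-weighted circular means, so angles near +pi and -pi average correctly.
    phi = np.angle(np.sum(w * np.exp(1j * np.asarray(aoa_rad, dtype=float))))
    eta = np.angle(np.sum(w * np.exp(1j * np.asarray(aod_rad, dtype=float))))
    return {"g_dB": 10.0 * np.log10(g), "tau_mean_s": tau_mean,
            "tau_rms_s": tau_rms, "phi_rad": phi, "eta_rad": eta}

# Two equal-power rays arriving from either side of the +/-pi boundary.
stats = aggregate_rays(power=[1e-9, 1e-9], delay_s=[0.0, 2e-6],
                       aoa_rad=[np.pi - 0.1, -np.pi + 0.1], aod_rad=[0.3, 0.5])
print(stats)
```

A circular mean is used for the angles because a plain arithmetic mean of $\pi - 0.1$ and $-\pi + 0.1$ would be $0$, pointing in the opposite direction from both rays.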

The Replicator also generates diverse and realistic UE mobility and traffic behavior. For this purpose, it employs a Conditional VAE (Variational Autoencoder) [3] that takes contextual features such as geography, population, and area characteristics as input. The generator is trained in a closed loop: the error between simulated and commercial network data is backpropagated to refine the generated behavior until its network-level impact becomes realistic and verifiable. In parallel, site-specific data traffic patterns are predicted from publicly available open-source data, allowing the simulator to reflect not only where users move but also how traffic demand evolves across the target environment. As a result, RealTraceNet learns UE behavior patterns suited to each site and produces network data that is both diverse and operationally credible.
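The closed-loop refinement can be pictured with a much simpler stand-in than a Conditional VAE. In the hypothetical sketch below, a two-parameter generator maps a site's context (a normalized population density) to per-UE traffic demand, a toy simulator turns demand into cell load, and the log-error against "commercial" loads is propagated back to update the generator by gradient descent. All numbers, names (`generate_demand`, `simulate_load`, `loss_and_grad`), and the load model are illustrative assumptions, not RealTraceNet internals.

```python
import numpy as np

rng = np.random.default_rng(0)
context = np.array([0.2, 0.5, 0.9, 1.4])          # hypothetical normalized population density per site
target_load = np.array([0.35, 0.48, 0.70, 1.05])  # hypothetical "commercial" offered load per site
z = rng.standard_normal((context.size, 256))      # fixed noise draws (reparameterization trick)

def generate_demand(w, b, s=0.5):
    """Toy conditional generator: per-UE demand = exp(w * context + b + s * z)."""
    return np.exp(w * context[:, None] + b + s * z)

def simulate_load(demand, n_ue=50, capacity_mbps=100.0):
    """Toy 'simulator': mean per-UE demand times UE count, as a fraction of cell capacity."""
    return n_ue * demand.mean(axis=1) / capacity_mbps

def loss_and_grad(w, b):
    """Mean squared log-error between simulated and commercial load, plus its gradient.

    Because w * c + b factors out of the mean over z, d log(load) / dw = c and
    d log(load) / db = 1 exactly, so the chain rule gives the gradient in closed form.
    """
    residual = np.log(simulate_load(generate_demand(w, b))) - np.log(target_load)
    loss = np.mean(residual ** 2)
    grad_w = np.mean(2.0 * residual * context)
    grad_b = np.mean(2.0 * residual)
    return loss, grad_w, grad_b

w, b, lr = 0.0, 0.0, 0.2
initial_loss, _, _ = loss_and_grad(w, b)
for _ in range(500):  # closed loop: generate -> simulate -> compare -> update generator
    _, grad_w, grad_b = loss_and_grad(w, b)
    w, b = w - lr * grad_w, b - lr * grad_b
final_loss, _, _ = loss_and_grad(w, b)
print(f"loss {initial_loss:.4f} -> {final_loss:.6f}  (w={w:.3f}, b={b:.3f})")
```

A real simulator is rarely differentiable in closed form; in that case the same loop would need a differentiable surrogate or a gradient-free update, which this sketch does not attempt.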

In practice, RealTraceNet functions as an advanced offline data-generation and augmentation engine that improves model generalization to environments where collecting sufficient training data is difficult. By reproducing site-specific network conditions in advance, the simulator expands the range of CM-response patterns available for training and evaluation beyond what can be safely observed in commercial operation. This makes it possible to assess potentially risky CM candidates in a controlled and repeatable setting before applying them to the live network.

3.2 AI Model: Sim2Real Transfer

3.2.1 AI Model Design

The AI model in our framework is designed for two connected tasks: KPI prediction and CM optimization [4]. KPI prediction estimates the network outcome for a given combination of network state and CM, while CM optimization uses that predictive capability to search for a better CM candidate [5]. Although these tasks appear different, they share the same core requirement: the model must understand how KPI changes when CM changes under a given network state.
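A minimal sketch of how the two tasks connect: a KPI predictor scores (state, CM) pairs, and CM optimization searches the candidate set for the highest predicted KPI. Here `predict_kpi` is a hypothetical stand-in for the trained model, the CM parameters `a` and `b` are placeholders, and exhaustive search is simply the easiest strategy to show; the text does not specify which search method is used.

```python
from itertools import product

def predict_kpi(state, cm):
    """Hypothetical stand-in for a trained model f(state, CM) -> predicted KPI.
    Toy quadratic in which the best value of parameter 'a' shifts with traffic load."""
    best_a = 4.0 + 2.0 * state["load"]
    best_b = 1.0
    return 100.0 - (cm["a"] - best_a) ** 2 - 5.0 * (cm["b"] - best_b) ** 2

def recommend_cm(state, candidates, model=predict_kpi):
    """CM optimization by exhaustive search: score every candidate, keep the best."""
    return max(candidates, key=lambda cm: model(state, cm))

grid = [{"a": a, "b": b} for a, b in product(range(11), [0.0, 0.5, 1.0, 1.5])]
print(recommend_cm({"load": 0.5}, grid))  # -> {'a': 5, 'b': 1.0}
```

Exhaustive search is only practical for small candidate grids. Whatever the search strategy, the recommendation is only as good as `predict_kpi` is on candidates outside the observed region, which is exactly the requirement described above.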

This requirement cannot be fully satisfied by either commercial data or synthetic data alone. A model trained only on commercial data struggles because the observed CM space is narrow and uneven. A model trained only on synthetic data struggles for a different reason: the deployment domain is not identical to the simulation domain. The challenge, therefore, is not simply to combine two datasets, but to learn from both without losing the distinct knowledge that each one provides [6].

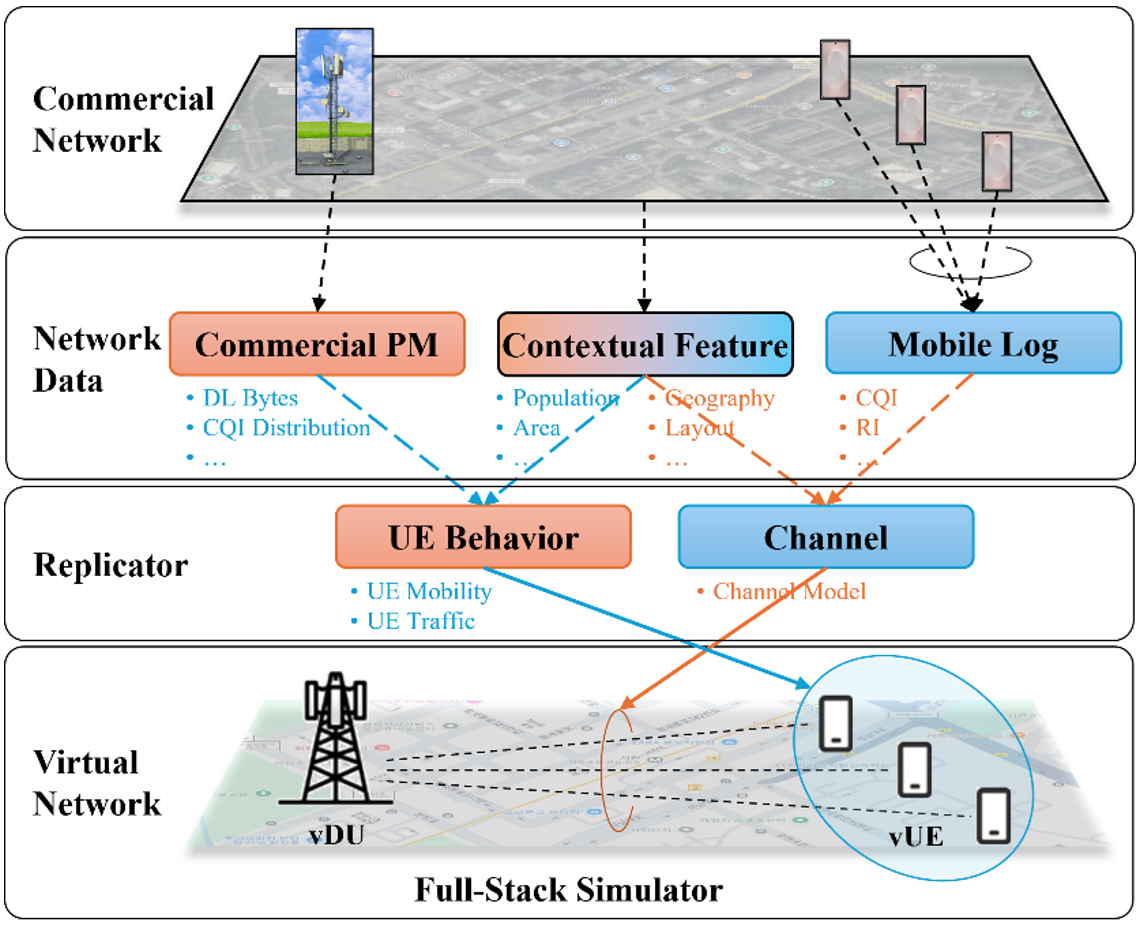

To address this, our framework separates the learning target into two complementary parts. The first part is CM-induced network behavior: the response rule that describes how KPI and PM (Performance Management) metrics change when CM is modified. The second part is the operating-environment characteristics of the real network: the state-dependent properties that cannot be fully reproduced in simulation and must be learned from real observations. As illustrated in Figure 3, the key idea is to learn broad control-response behavior from the Digital Twin while using commercial data to anchor that behavior to the real deployment domain.

Figure 3. AI Model workflow

The Digital Twin is used mainly for the first part, since its broad CM exploration gives the model access to CM-response cases that cannot be safely observed in a live network. In practice, RealTraceNet serves as an offline data-generation engine that allows potentially risky CM candidates to be evaluated before deployment. Commercial data, by contrast, is used primarily for the second part, since only the live network contains the true operating distribution faced at deployment. Its value lies not in broad CM coverage, but in capturing the environment-specific characteristics needed to make simulation-learned response rules valid in practice.

This training logic is fundamentally different from simple fine-tuning. In a standard fine-tuning pipeline, a model is first trained on synthetic data and then adjusted with real data. While this often improves prediction accuracy within the observed commercial region, it also tends to pull the model toward the narrow real-data distribution and weaken the broader control knowledge learned from simulation [7]. In other words, fine-tuning improves local fit but does not necessarily preserve the simulation-derived rule needed for unseen CM candidates.

Our Sim2Real approach is designed to avoid this failure mode. Rather than replacing simulation-learned knowledge with real-data fitting, it preserves the CM-response behavior learned from the Digital Twin and updates only the part needed to align the model with the commercial environment [8]. The point is not to memorize the region covered by commercial observations, but to adapt the simulation-learned control behavior so that it becomes usable in the real network domain [9,10].
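The exact architecture is not disclosed here (the text cites domain-adversarial training [8] among related approaches), so the following is only one simple way to make the idea concrete, under toy assumptions. A CM-response curve is learned from a broad, hypothetical Digital Twin sweep and then frozen; on narrow commercial data, only a scale `alpha` and an offset `beta` (standing in for the environment-specific part) are fitted. For comparison, refitting all coefficients on the same commercial data approximates where unconstrained fine-tuning ends up in this linear-in-parameters toy.

```python
import numpy as np

def sim_kpi(c):
    """Hypothetical Digital Twin KPI response (toy): single peak at c = 6."""
    return 100.0 * np.exp(-((c - 6.0) ** 2) / 8.0)

def real_kpi(c):
    """Hypothetical commercial response: same shape, different scale and offset."""
    return 0.6 * sim_kpi(c) + 15.0

candidates = np.linspace(0.0, 10.0, 101)
sim_cm = np.linspace(0.0, 10.0, 200)  # broad Digital Twin sweep
real_cm = np.linspace(2.0, 3.0, 50)   # narrow commercial operating region

# Stage 1: learn a CM-response curve from the Digital Twin (quadratic surrogate).
response = np.polyfit(sim_cm, sim_kpi(sim_cm), 2)

# Option A, preserve and adapt: keep the response frozen and fit only a scale
# (alpha) and an offset (beta) on commercial data.
r = np.polyval(response, real_cm)
design = np.column_stack([r, np.ones_like(r)])
alpha, beta = np.linalg.lstsq(design, real_kpi(real_cm), rcond=None)[0]
adapted_best = candidates[np.argmax(alpha * np.polyval(response, candidates) + beta)]

# Option B, fine-tune everything: refit all coefficients on the commercial data.
# In this linear-in-parameters toy, this least-squares solution is where
# unconstrained gradient fine-tuning converges, whatever the starting point.
finetuned = np.polyfit(real_cm, real_kpi(real_cm), 2)
finetuned_best = candidates[np.argmax(np.polyval(finetuned, candidates))]

for name, best in [("adapt", adapted_best), ("fine-tune", finetuned_best)]:
    print(f"{name:>9}: recommend cm={best:.1f}  true commercial KPI={real_kpi(best):.1f}")
```

In this toy, the adapted model keeps the simulation-learned optimum and recommends a CM near the true peak, while the refit model follows the local trend of the narrow region and recommends the edge of the candidate range.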

3.2.2 AI Model Evaluation

We evaluated the proposed Sim2Real framework through an offline prediction experiment, with the goal of verifying whether CM-response behavior learned from the Digital Twin could be transferred effectively to the commercial network. To do this, the model was first trained using 100,000 samples generated by the Digital Twin, so that it could learn broad response behavior over a range that could not be safely explored in live operation. It was then adapted using 100,000 samples collected from the commercial network, allowing the learned behavior to be aligned with the actual operating characteristics of the target deployment environment.

This Sim2Real framework becomes important when the synthetic and real domains are far apart. In our case, the similarity between Digital Twin data and commercial data was only about 39%, indicating a substantial domain gap. This means that a model trained on synthetic data alone cannot be trusted directly in the commercial network [11]. However, it does not mean that simulation is useless. The low similarity indicates that raw value alignment is weak, but the transfer result shows that useful control behavior still exists inside the synthetic data.

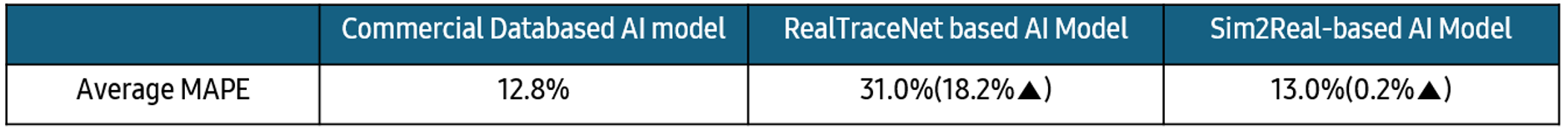

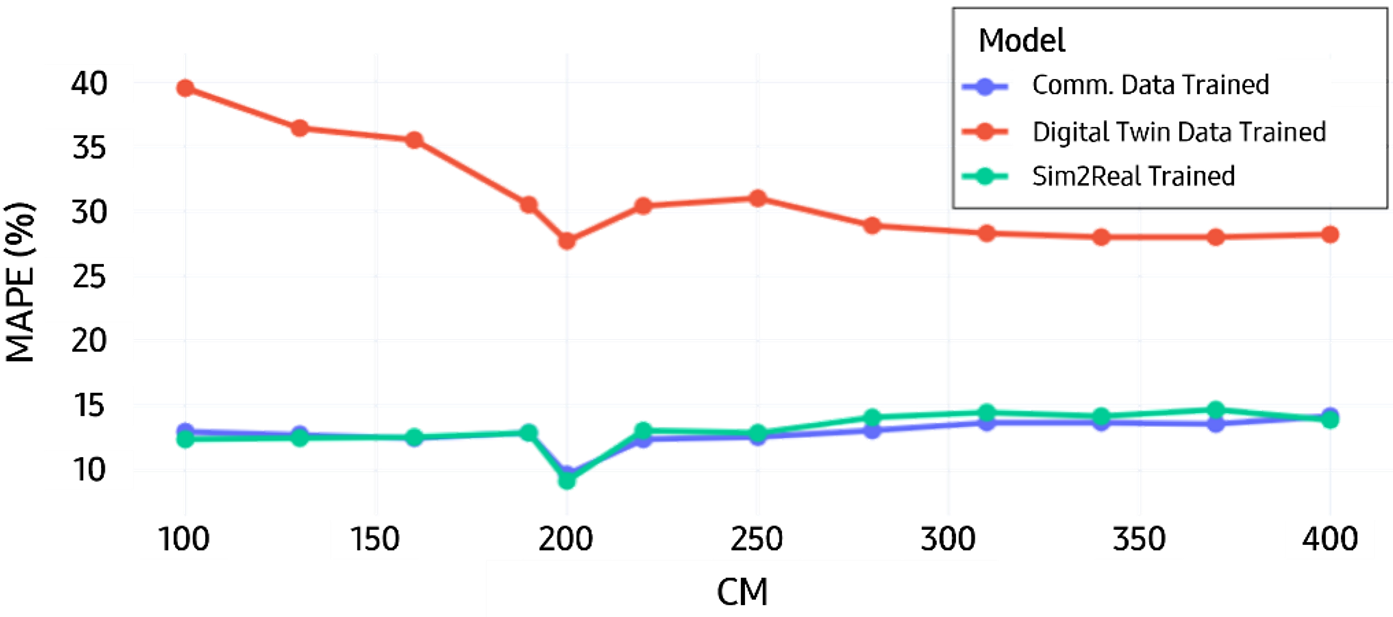

The offline evaluation supports this interpretation. As summarized in Table 1, the model trained only on commercial data achieved an average MAPE (Mean Absolute Percentage Error) of 12.8%, whereas the model trained only on Digital Twin data showed much poorer performance with a MAPE of 31.0%. By contrast, the Sim2Real model achieved a MAPE of 13.0%, only 0.2 percentage points higher than the commercial-data-only reference. Despite the large simulation-to-real gap, this result indicates that the proposed transfer method can recover prediction performance close to the commercial-data baseline.

Table 1. Comparison of AI model accuracy
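For reference, MAPE is the mean of $|y_i - \hat{y}_i| / |y_i|$ over all samples, expressed in percent. A minimal NumPy version is sketched below; the function name `mape` is illustrative, and the guard reflects that MAPE is undefined when a true value is zero, which can matter for KPIs such as throughput.

```python
import numpy as np

def mape(y_true, y_pred):
    """Mean Absolute Percentage Error, in percent; undefined if any true value is zero."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    if np.any(y_true == 0):
        raise ValueError("MAPE is undefined when a true value is zero")
    return 100.0 * np.mean(np.abs(y_true - y_pred) / np.abs(y_true))

# Two KPI samples, each predicted with a 10% error, so MAPE is 10%.
print(mape([100.0, 200.0], [110.0, 180.0]))
```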

Figure 4 provides a complementary view by showing prediction performance across CM changes. The Digital Twin-only model exhibits a clear mismatch because it does not sufficiently capture the operating characteristics of the commercial environment. The Sim2Real model, however, follows the commercial trend much more closely and remains near the performance level of the model trained only on commercial data. Together, Table 1 and Figure 4 suggest that the Digital Twin is useful not because it reproduces commercial data exactly, but because it provides access to control behavior that can later be adapted to the real network.

Figure 4. KPI Prediction Results

At the same time, this result should be interpreted with some care. The comparison is not fully symmetric, since the commercial-data-only model was trained using the full commercial dataset, whereas the Sim2Real model combined Digital Twin data with commercial data used mainly for adaptation. For this reason, the current result should not be read as evidence that Sim2Real is unnecessary whenever full commercial data is available. Rather, its practical value is expected to become more evident in environments where commercial data is insufficient or difficult to collect safely.

4. Field Trial

To verify that the proposed framework remains effective beyond offline evaluation, we conducted a field trial in a commercial network. The purpose of this trial was not only to confirm KPI improvement after deployment, but also to test whether control behavior learned in the Digital Twin could be transferred successfully to live network operation.

After training, the model was deployed for downlink throughput optimization at BSs in the target area. Unlike offline evaluation, this stage tested the model under actual commercial operating conditions, where traffic load, interference, and user behavior continuously affect network performance. This is important because the practical value of the framework ultimately depends on whether the model can support real CM decisions in the field, rather than only under simulator assumptions.

Figure 5. Trial Regions

We conducted field trials in three areas with different regional characteristics: metro, suburban, and urban. As shown in Figure 5, the overall downlink throughput increased from 131.96 Mbps to 141.56 Mbps, corresponding to a 7.36% gain. More specifically, throughput improved by 7.3% in the metro area, where user mobility is relatively high; by 10.5% in the urban area, where the number of active UEs is large; and by 12.5% in the suburban area, where the UE population is relatively small. These results show that the framework remained effective across regions with different operational characteristics, rather than being limited to a single traffic or mobility condition.

This result is particularly meaningful because the Digital Twin and commercial network were not closely matched in raw value distribution during offline analysis. If the transfer had failed, the model would likely have produced weak recommendations or selected CMs that did not improve live KPI. Instead, the field trial showed measurable gain in the target metric, indicating that the Digital Twin captured useful control behavior and that the Sim2Real stage aligned that behavior effectively with the commercial environment.

Taken together, the offline evaluations and the field-trial results support a consistent interpretation of the framework. The Digital Twin expands the observable control space beyond what can be safely explored in commercial operation, while Sim2Real makes that control knowledge usable at deployment time. In this sense, the field trial serves not merely as a separate performance report, but as a direct validation that the full framework can operate effectively in a live commercial network.

5. Conclusions and Future Works

In this blog, we presented an AI modeling framework for network optimization that combines a Digital Twin with Sim2Real transfer. The framework is motivated by a practical constraint of commercial networks: AI models require broad CM-response data, but live operation cannot provide such data freely because broad parameter exploration is limited by service risk.

Within this framework, the Digital Twin expands the observable control space by generating synthetic network data and KPI data under a wide range of CM settings. Its role is not to reproduce the commercial network exactly, but to provide controlled access to network response behavior that cannot be broadly collected in live operation. Sim2Real then addresses the remaining gap by adapting that simulation-learned behavior to the real operating environment using commercial data.

Our results show that this design is effective. In offline evaluation, the commercial-data-only model achieved a MAPE of 12.8%, while the Sim2Real model achieved 13.0%, despite the substantial gap between Digital Twin and commercial data. In the field trial, the deployed model improved downlink throughput from 131.96 Mbps to 141.56 Mbps, corresponding to a 7.36% gain in the commercial network. Together, these results indicate that useful control behavior can be learned in simulation and transferred successfully to live deployment when it is properly aligned with commercial conditions.

The main implication is that AI-based network optimization does not require synthetic data to be numerically identical to real data in order to be useful. What matters is whether the Digital Twin preserves transferable control behavior, and whether Sim2Real can make that behavior valid in the commercial domain. With this combination, network AI can be trained and deployed even when broad real-network exploration is difficult or unsafe.

As future work, this framework can be extended beyond downlink throughput optimization. The same transfer logic can be applied to other KPI groups where direct exploration is restricted, including KPIs related to call setup, retention, and inter-cell interference management. The core design remains unchanged: broad response learning in simulation, followed by alignment to the commercial environment using real observations.

References

[1] 3GPP, “Study on channel model for frequencies from 0.5 to 100 GHz,” 3rd Generation Partnership Project (3GPP), TR 38.901, 2018.

[2] J. Hoydis, F. Aït Aoudia, S. Cammerer, M. Nimier-David, N. Binder, G. Marcus, and A. Keller, “Sionna RT: Differentiable ray tracing for radio propagation modeling,” in Proc. IEEE Globecom Workshops (GC Wkshps), 2023, pp. 317–321.

[3] D. P. Kingma and M. Welling, “Auto-encoding variational Bayes,” arXiv:1312.6114, 2013.

[4] P. V. Klaine, M. A. Imran, O. Onireti, and R. D. Souza, “A survey of machine learning techniques applied to self-organizing cellular networks,” IEEE Commun. Surveys Tuts., vol. 19, no. 4, pp. 2392–2431, 2017.

[5] J. Shodamola, U. Masood, M. Manalastas, and A. Imran, “A machine learning based framework for KPI maximization in emerging networks using mobility parameters,” in Proc. IEEE Int. Black Sea Conf. Commun. Netw. (BlackSeaCom), 2020.

[6] S. J. Pan and Q. Yang, “A survey on transfer learning,” IEEE Trans. Knowl. Data Eng., vol. 22, no. 10, pp. 1345–1359, 2010.

[7] J. Kirkpatrick, R. Pascanu, N. Rabinowitz, J. Veness, G. Desjardins, A. A. Rusu, K. Milan, J. Quan, T. Ramalho, A. Grabska-Barwinska, D. Hassabis, C. Clopath, D. Kumaran, and R. Hadsell, “Overcoming catastrophic forgetting in neural networks,” Proc. Natl. Acad. Sci. U.S.A., vol. 114, no. 13, pp. 3521–3526, 2017.

[8] Y. Ganin, E. Ustinova, H. Ajakan, P. Germain, H. Larochelle, F. Laviolette, M. Marchand, and V. Lempitsky, “Domain-adversarial training of neural networks,” J. Mach. Learn. Res., vol. 17, no. 59, pp. 1–35, 2016.

[9] W. Zhao, J. P. Queralta, and T. Westerlund, “Sim-to-real transfer in deep reinforcement learning for robotics: A survey,” in Proc. IEEE Symp. Ser. Comput. Intell. (SSCI), 2020, pp. 737–744.

[10] J. Tobin, R. Fong, A. Ray, J. Schneider, W. Zaremba, and P. Abbeel, “Domain randomization for transferring deep neural networks from simulation to the real world,” in Proc. IEEE/RSJ Int. Conf. Intell. Robots Syst. (IROS), 2017.

[11] Z. Tao, W. Xu, Y. Huang, X. Wang, and X. You, “Wireless network digital twin for 6G: Generative AI as a key enabler,” IEEE Wireless Commun., vol. 31, no. 4, pp. 24–31, 2024.