Robotics

RobotGPT: Robot Manipulation Learning from ChatGPT

1. Introduction

Large Language Models (LLMs) have showcased impressive capabilities in text generation, translation, and code synthesis. Recent efforts focus on integrating LLMs, notably ChatGPT, into robotics for tasks like zero-shot system planning [1]. Despite progress, the full extent of LLMs' potential in robotics remains unexplored. The evolving landscape of Human-Robot Interaction (HRI) benefits from LLM advancements, particularly in natural language interaction. ChatGPT stands out for its code generation and conversational flexibility, allowing users to intuitively interact with robots. Previous works demonstrate ChatGPT's efficacy in tasks like drone navigation and object manipulation.

This paper examines ChatGPT's application in robot manipulation, aiming to advance practical implementation. Our framework translates environmental cues into natural language, enabling ChatGPT to generate action code that is then used for agent training. The system lets robots be instructed in natural language for tasks such as pick-and-place. We explore effective prompts, providing insights into ChatGPT's task boundaries and system stability. While acknowledging limitations and security risks, our contributions are a novel framework and an exploration of the capability boundaries of ChatGPT on robotic tasks.

2. Method

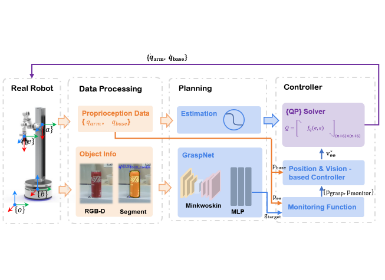

Figure 1. Architecture of our system

Figure 1 illustrates our system architecture. ChatGPT plays three roles within it: decision bot, evaluation bot, and corrector bot. The operator instructs the robot to complete a task, and a natural language prompt is generated from the environmental information and the human instruction. The decision bot generates the corresponding executable code from this prompt. Next, the generated code is executed line by line. If a runtime error occurs, the reason for the error and the line of code where it occurred are returned to the decision bot, which modifies the code until it runs successfully. The executable code is then tested by the Eval Code model generated by the evaluation bot. If the executable code fails the Eval Code test, the corrector bot analyzes potential reasons for the failure and sends them back to the decision bot for correction. Code that satisfies the evaluation condition is used to generate demonstration data. After training on this data, the agent can be deployed on the real robot.
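The control flow described above can be sketched as a small loop. This is a minimal illustration, not the authors' implementation: `RunResult`, `generate_verified_code`, and the four callables are hypothetical names, and the default of three rounds is borrowed from the iteration limit stated in Section 2.3.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class RunResult:
    ok: bool                      # True if the code ran without a runtime error
    error: Optional[str] = None   # error message and location, if any


def generate_verified_code(
    prompt: str,
    decision_bot: Callable[[str], str],          # prompt -> candidate code
    run_in_simulator: Callable[[str], RunResult],
    is_task_success: Callable[[], bool],         # check from the evaluation bot
    corrector_bot: Callable[[str, str], str],    # (prompt, code) -> failure analysis
    max_rounds: int = 3,                         # assumed round limit
) -> Optional[str]:
    """Return code that runs and passes evaluation, or None if all rounds fail."""
    feedback = ""
    for _ in range(max_rounds):
        code = decision_bot(prompt + feedback)
        result = run_in_simulator(code)
        if not result.ok:
            # Runtime error: return the message and location to the decision bot.
            feedback = f"\nRuntime error in previous attempt: {result.error}"
            continue
        if is_task_success():
            return code  # suitable for generating demonstration data
        # Code ran but the task failed: ask the corrector bot why.
        feedback = "\nFailure analysis: " + corrector_bot(prompt, code)
    return None
```

Passing the bots in as plain callables keeps the sketch independent of any particular LLM client or simulator.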

2.1 Prompting description

We propose a five-part prompting method that includes background description, object info, environment info, task info, and examples. In the background description part, the basic information about the environment is described, such as the purpose of the environment, its layout, and relevant entities. In the object info part, we list all objects' names, shapes, poses, and other helpful information, such as their properties and relationships with other objects. In the environment info part, we describe the robot and API functions ChatGPT can use to perform the task. In the task info part, we give the specific task for ChatGPT, generally to generate Python code for a given job. Finally, in the example part, we provide some examples to facilitate a better understanding of the environment and API usage. Following the suggestion by OpenAI [], we set background information and RobotAPI information as the system message in the ChatGPT API to obtain satisfactory responses.
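As a rough illustration of how the five parts might be assembled, the sketch below builds a chat-style message list with the background and robot API description in the system message and the remaining parts in the user message. The function name `build_messages`, the section labels, and the example strings (including the `pick` and `place` API names) are assumptions made for illustration and are not taken from the paper.

```python
from typing import Dict, List


def build_messages(background: str, object_info: str, robot_api: str,
                   task_info: str, examples: str) -> List[Dict[str, str]]:
    """Assemble the five prompt parts into system and user chat messages."""
    # Background and robot API description go into the system message.
    system = f"Background:\n{background}\n\nRobot API:\n{robot_api}"
    # Object info, task info, and examples go into the user message.
    user = (f"Objects:\n{object_info}\n\n"
            f"Task:\n{task_info}\n\n"
            f"Examples:\n{examples}")
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]


# Hypothetical usage with made-up content.
messages = build_messages(
    background="A tabletop workspace with one robot arm and a camera.",
    object_info="block_a: cube at (0.5, 0.0, 0.025); block_b: cube at (0.6, 0.1, 0.025)",
    robot_api="pick(name) grasps an object; place(name, position) releases it.",
    task_info="Write Python code that stacks block_a on block_b.",
    examples="pick('block_a')",
)
for message in messages:
    print(message["role"], len(message["content"]))
```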

2.2 Self-correction

When generating responses for complex tasks, ChatGPT may occasionally produce minor bugs or syntax errors that need correction. This paper introduces an interactive approach for rectifying ChatGPT's responses. We first execute the generated code in a simulator and assess the outcome. The code is executed line by line, and when a runtime error occurs, the Code Error Catching module captures the error message and its location. This information is sent back to the decision bot for further analysis. When the code runs but the task fails, the corrector bot analyzes potential reasons for the failure based on the prompts and generates a response explaining why the task failed. The decision bot then regenerates the code based on the corrector bot's failure analysis. Using this feedback, ChatGPT amends its response with the aim of producing correct code. This interactive process may iterate up to three times. Our objective is to improve the precision and dependability of ChatGPT's responses, making them more useful across a range of domains.
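One way an error-catching step of this kind could work is sketched below: the generated source is compiled under a recognizable filename, executed, and any exception is mapped back to the offending line. This is an assumption-laden illustration rather than the paper's Code Error Catching module. The name `catch_code_error` and the `"<generated>"` filename are hypothetical, and it runs the code in-process with `exec` instead of in a simulator.

```python
import traceback
from typing import Optional, Tuple

GENERATED = "<generated>"  # hypothetical filename used to tag generated code


def catch_code_error(source: str,
                     env: Optional[dict] = None) -> Optional[Tuple[int, str, str]]:
    """Run generated code; return (line_number, line_text, message) or None."""
    lines = source.splitlines()

    def report(lineno: int, exc: BaseException) -> Tuple[int, str, str]:
        text = lines[lineno - 1] if 1 <= lineno <= len(lines) else ""
        return lineno, text, f"{type(exc).__name__}: {exc}"

    try:
        compiled = compile(source, GENERATED, "exec")
    except SyntaxError as exc:
        # Syntax errors are reported before any line runs.
        return report(exc.lineno or 0, exc)

    try:
        exec(compiled, dict(env or {}))
    except Exception as exc:
        # Keep only traceback frames that belong to the generated code.
        frames = [f for f in traceback.extract_tb(exc.__traceback__)
                  if f.filename == GENERATED]
        lineno = frames[-1].lineno if frames else 0
        return report(lineno, exc)
    return None


error = catch_code_error("x = 1\ny = x / 0\nz = 2")
if error is not None:
    lineno, text, message = error
    print(f"line {lineno}: {text!r} raised {message}")
```

The returned tuple can be formatted into the feedback message for the decision bot. Running untrusted generated code with `exec` is unsafe outside a sandbox, which is one reason to execute it in a simulator first.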

2.3 Generated code evaluation

As shown in Figure 1, we employ a ChatGPT instance, the evaluation bot, to generate evaluation code. The prompts for the evaluation bot differ somewhat from those for the decision bot. The function is_task_success() generated by the evaluation bot serves as the criterion for determining whether the entire task succeeded. The role of humans is to double-check that the generated evaluation code is correct and to intervene with corrections if it is not. This design keeps the burden on humans low.
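To make the idea concrete, here is what an evaluation function might look like for a simple block-stacking task. The paper only names the function is_task_success(). The signature, object names, block height, and tolerance below are hypothetical values chosen for illustration.

```python
from typing import Dict, Tuple

Position = Tuple[float, float, float]  # (x, y, z) in metres, assumed convention


def is_task_success(positions: Dict[str, Position],
                    top: str = "block_a",
                    bottom: str = "block_b",
                    block_height: float = 0.05,
                    tol: float = 0.01) -> bool:
    """Return True if `top` rests on `bottom` within the given tolerance."""
    xt, yt, zt = positions[top]
    xb, yb, zb = positions[bottom]
    # Horizontally aligned within tolerance.
    aligned = abs(xt - xb) <= tol and abs(yt - yb) <= tol
    # Vertical offset matches one block height within tolerance.
    stacked = abs((zt - zb) - block_height) <= tol
    return aligned and stacked
```

A human reviewer would check, for instance, that the tolerance and the height offset match the objects actually used in the simulator.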

2.4 Robot learning

Relying on ChatGPT directly to perform general robotics tasks is unreliable because its output is stochastic, which increases the risk of robotic work. Setting the temperature to zero produces consistent outcomes at the cost of diversity and creativity, but it may also lead to the same task failing repeatedly. To solve this problem, we train robot policies that absorb ChatGPT's knowledge of solving general tasks. For robot learning, we use the state-of-the-art, open-source robotic manipulation benchmark and learning framework BulletArm [2] to train an agent from ChatGPT-generated demonstrations.

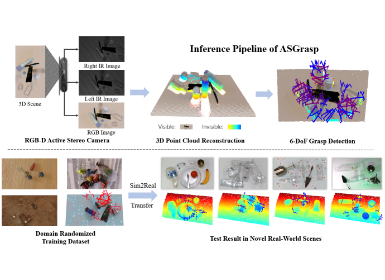

Figure 2. Robot learning network architecture

In this paper, the SDQfD [22] algorithm is adopted for robot learning with the Equivariant ASR network [14], as shown in Figure 2. The loss function is the sum of the n-step TD loss and the strict large margin loss.
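For readers unfamiliar with these terms, the loss can be written out as follows. This is a sketch of the commonly stated form of the two terms, not a formula taken from this paper: the symbols ($Q_\theta$ for the Q-network, $Q_{\bar\theta}$ for a target network, $a_e$ for the expert action, $\ell$ for the margin function, $\gamma$ for the discount factor) are assumptions, and any relative weighting of the two terms is not stated in the text.

$$
\mathcal{L} = \mathcal{L}_{TD}^{(n)} + \mathcal{L}_{SLM}
$$

$$
\mathcal{L}_{TD}^{(n)} = \frac{1}{2}\Big(\sum_{k=0}^{n-1}\gamma^{k} r_{t+k} + \gamma^{n}\max_{a'} Q_{\bar\theta}(s_{t+n}, a') - Q_{\theta}(s_t, a_t)\Big)^{2}
$$

$$
\mathcal{L}_{SLM} = \frac{1}{|\mathcal{A}^{s,a_e}|}\sum_{a \in \mathcal{A}^{s,a_e}} \big(Q_{\theta}(s,a) + \ell(a_e,a) - Q_{\theta}(s,a_e)\big),
\qquad
\mathcal{A}^{s,a_e} = \{\, a : Q_{\theta}(s,a) > Q_{\theta}(s,a_e) - \ell(a_e,a) \,\}
$$

Here $\ell(a_e,a)$ is zero when $a = a_e$ and positive otherwise. Under this form, the margin term penalizes every non-expert action whose value comes within the margin of the expert action's value, rather than only the single highest-valued one.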

3. Results

3.1 Experiment

Table 1 presents the quantitative results of the eight experiments. Even with identical prompts, the generated code and the resulting output differ significantly between runs because the temperature of the decision bot is 1.0. In addition, the code generated by ChatGPT contains syntax or logic errors. Although our self-correction module can repair some syntax errors, in most cases, if ChatGPT fails to generate successful code initially, it is difficult to achieve success in that experiment.

For ChatGPT, the success rate decreases significantly as task difficulty increases. The success rates for the easy, medium, and difficult tasks are 0.88, 0.39, and 0.21, respectively. In contrast, our RobotGPT model is robust across all difficulty levels, achieving an average success rate of 0.915 in simulation. In the real-world experiment, RobotGPT achieves 0.86 while using only simulation data for training.

3.2 AB Test

Table 1. Counting results of experiments. E: Easy; M: Medium; H: Hard

To investigate our LLM-driven robot's capability to solve problems that are not well addressed by non-LLM approaches, we introduce two open-ended experiments. The first is a tidy-up-room challenge that requires organizing 40 custom household objects, while the second is a word-spelling game that aims to spell the longest word using a given set of letters, A-L. Additionally, we invite human subjects to complete the same tasks. Compared with hand-coding, RobotGPT shows advantages in both code quality and time consumption: 0.762 and 221.8 seconds, versus 0.70 and 554.9 seconds for humans. Only five participants completed all the tasks within 70 minutes. Even for engineers with a strong programming background, therefore, generating robot demonstration data through hand-coding is time-consuming.

4. Conclusion

In this paper, we first develop an effective prompting structure to enhance ChatGPT's understanding of the robot's environment and the tasks it needs to implement. Next, we introduce a framework called RobotGPT, which leverages ChatGPT's problem-solving capabilities to achieve more stable task execution. In experiments, we build a metric to measure task difficulty and observe that as the task difficulty increases, the success rate of execution by ChatGPT decreases.

In contrast, RobotGPT can execute these tasks with a success rate of 91.5%, demonstrating more stable performance. More importantly, this agent has also been deployed in real-world environments. Training a RobotGPT agent with ChatGPT as an expert is therefore a more stable approach than directly using ChatGPT as a task planner. In addition, the AB test shows that our LLM-driven robot significantly outperforms hand-coding on two open-ended tasks, owing to the LLM's large repository of prior knowledge. Overall, the integration of robotics and LLMs is still at an early stage. Our work is only an initial exploration, and we believe that much future research in this area will explore how to properly use ChatGPT's abilities in robotics.

References

[1] S. Vemprala, R. Bonatti, A. Bucker, and A. Kapoor, “ChatGPT for robotics: Design principles and model abilities,” Microsoft Auton. Syst. Robot. Res., vol. 2, p. 20, 2023.

[2] D. Wang, C. Kohler, X. Zhu, M. Jia, and R. Platt, “BulletArm: An open-source robotic manipulation benchmark and learning framework,” in Robotics Research, pp. 335–350, Springer, 2023.