A Model for Every User and Budget: Label-Free and Personalized Mixed-Precision Quantization

In this blog post, we introduce our new work [1], published at the INTERSPEECH 2023 conference.

Introduction

Research in Automatic Speech Recognition (ASR) continues to show that larger models yield better results. But as state-of-the-art networks grow to billions of parameters, deploying them on device becomes increasingly difficult. One reason these networks are so large is that they are built to perform well across a wide range of accents, languages, and vocal styles; in a distributed setting, however, we may only need to transcribe the speech of a single user, leaving much of the network capacity unused. Furthermore, different devices impose different memory constraints: we may be able to use a larger, more accurate network on a mobile phone, while a watch could host a more compressed version. In this work, we address these issues and ask the question: How can we compress networks to perform optimally for a small group of vocal characteristics and for a wide range of device sizes?

Our solution is a tailored compression scheme for diverse users under variable memory constraints, a problem we define as “Personalized Post-Training Model Compression.” This involves finding an optimum mixed-precision quantization setup for the memory constraint, while also preserving the performance of the model for the specific user with no additional labelled data or fine-tuning of the model.

The desiderata of our setup are as follows:

1. Models are quantized for efficient on-device inference, specifying a target model size in megabytes;

2. Models are compressed after training and no further backpropagation-based fine-tuning should be needed, since no labels are provided for the target users and limited resources are available during the compression stage;

3. The final compressed model should work best for the users whose unlabelled data has been used during the personalized compression stage, while still delivering reasonable performance on out-of-distribution users.

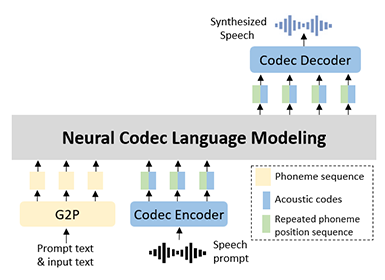

Our Method: myQASR

The proposed method (myQASR) is outlined in Figure 1 and is composed of three main steps, described below:

1. A Sensitivity Detection stage, where we estimate each layer's sensitivity to quantization. We estimate this by running a forward inference pass over the limited unlabeled user-specific data and checking the distribution of the respective activations. This step is needed to determine how many bits should be assigned to each layer of the ASR models to meet the target budget model size;

2. The Quantization step applies the quantization to the model weights and activations with as many bits as identified in the previous stage;

3. A Calibration stage that updates the scaling factor and the zero-point values such that the resulting quantization values can better represent the input data distribution. We experiment with min-max [2, 3], Hessian [2] and Cosine calibration techniques.
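To make the Quantization and Calibration steps more concrete, here is a minimal sketch of affine (scale and zero-point) quantization with min-max calibration in plain Python. The function names are illustrative and are not taken from the myQASR codebase; the sketch assumes unsigned integer levels and a range that is widened, if needed, to include zero.

```python
def minmax_qparams(values, num_bits):
    """Min-max calibration: derive (scale, zero_point) from observed values."""
    qmax = 2 ** num_bits - 1
    # Widen the range to include 0 so that zero stays representable.
    lo = min(min(values), 0.0)
    hi = max(max(values), 0.0)
    scale = (hi - lo) / qmax if hi > lo else 1.0
    zero_point = int(round(-lo / scale))
    zero_point = max(0, min(qmax, zero_point))
    return scale, zero_point


def quantize(values, scale, zero_point, num_bits):
    """Map floats to integer levels in [0, 2^num_bits - 1], clipping outliers."""
    qmax = 2 ** num_bits - 1
    return [max(0, min(qmax, int(round(v / scale)) + zero_point)) for v in values]


def dequantize(levels, scale, zero_point):
    """Map integer levels back to approximate float values."""
    return [(q - zero_point) * scale for q in levels]
```

With fewer bits the scale grows, so each integer level covers a wider slice of the observed range. This is why the calibration data should resemble the target user's data: the range, and therefore the rounding error, is set by what the calibration pass observes.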

The main intuition behind our approach is depicted in Figure 2, where we can see how the distribution of activations from the first convolutional layer of Wav2Vec2 [4] is heavily influenced by the gender of the speaker (averaged across the whole LibriSpeech dataset [5]).

The full method is further described in Algorithm 1.
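As a rough illustration of how per-layer sensitivities can drive bit allocation under a size budget, the sketch below uses a simple greedy loop. It is not Algorithm 1 from the paper: the sensitivity scores are taken as given inputs, and `allocate_bits`, `model_size_mb`, and the 2-to-8-bit range are hypothetical choices made for this example.

```python
def model_size_mb(param_counts, bits):
    """Model size in megabytes for per-layer parameter counts and bit depths."""
    total_bits = sum(p * b for p, b in zip(param_counts, bits))
    return total_bits / 8 / 1e6


def allocate_bits(param_counts, sensitivities, budget_mb, max_bits=8, min_bits=2):
    """Greedy sketch: lower the bit depth of the least sensitive layers first."""
    bits = [max_bits] * len(param_counts)
    # Visit layers from least to most sensitive.
    order = sorted(range(len(param_counts)), key=lambda i: sensitivities[i])
    while model_size_mb(param_counts, bits) > budget_mb:
        changed = False
        for i in order:
            if bits[i] > min_bits:
                bits[i] -= 1
                changed = True
                if model_size_mb(param_counts, bits) <= budget_mb:
                    return bits
        if not changed:
            raise ValueError("budget cannot be met even at min_bits")
    return bits
```

For four layers of one million parameters each with sensitivities `[0.9, 0.1, 0.5, 0.3]` and a 3.7 MB budget, this returns `[8, 7, 7, 7]`: the most sensitive layer keeps its full 8 bits while the others give up one bit each.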

Figure 1. Overview of our method (myQASR). A large model is quantized according to users’ audio data and their device storage budget.

Figure 2. Distribution of activations from the first convolution layer of Wav2Vec2 on female (F) and male (M) data.

Experimental setup

Datasets. We employ three datasets to evaluate the performance on different experimental analyses:

1. Gender-wise Personalization. We employed the LibriSpeech (LS) [5] dataset, which contains about 1000 hours of 16 kHz English speech derived from audiobooks.

2. Language-wise Personalization. We used FLEURS [6] and restricted it to 10 languages.

3. Speaker-wise Personalization. We employed the Google Speech Commands (GSC) [7] dataset, which contains 1-second-long audio files from multiple speakers.

Models. On LS, we use a pre-trained W2V2 base (B) [4] model fine-tuned on 960 hours of clean English audio data from LS.

For language-wise personalization, we employed Whisper [8]: a multi-lingual sequence-to-sequence (encoder/decoder) Transformer model pre-trained on 680K hours of multi-lingual supervised audio data.

For Keyword Spotting (KWS), we use a W2V2-Large-Conformer (W2V2-L-C), as described in [9], pre-trained on GSC.

Metrics. We use Word/Character Error Rate (WER/CER) for ASR tasks, and accuracy (ACC) for KWS. We also report the average bit depth.
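For readers unfamiliar with these metrics, the sketch below computes WER and CER as the Levenshtein edit distance between reference and hypothesis, divided by the reference length. It is a plain-Python illustration with hypothetical function names, not the evaluation code used in the paper, and it applies no text normalization.

```python
def edit_distance(ref, hyp):
    """Levenshtein distance between two sequences (substitution, insertion, deletion)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, 1):
        cur = [i]
        for j, h in enumerate(hyp, 1):
            cur.append(min(prev[j] + 1,              # delete a reference token
                           cur[j - 1] + 1,           # insert a hypothesis token
                           prev[j - 1] + (r != h)))  # substitute or match
        prev = cur
    return prev[-1]


def wer(reference, hypothesis):
    """Word Error Rate: word-level edit distance over reference word count."""
    ref_words = reference.split()
    if not ref_words:
        raise ValueError("reference must contain at least one word")
    return edit_distance(ref_words, hypothesis.split()) / len(ref_words)


def cer(reference, hypothesis):
    """Character Error Rate: character-level edit distance over reference length."""
    if not reference:
        raise ValueError("reference must not be empty")
    return edit_distance(list(reference), list(hypothesis)) / len(reference)
```

For example, `wer("the cat sat on the mat", "the cat sat on mat")` is 1/6, since one word was deleted out of six reference words.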

Our Results

Main Results on Gender are shown in Figure 3, where the Wav2Vec2-Base model pre-trained on multi-gender data is quantized for a female user and tested on the LibriSpeech-Female subset. The plot spans an increasing memory budget from 60MB to 90MB. The performance of the original (i.e., FP, non-quantized) model indicates the lower bound for WER (gray dashed line). Uniform quantization yields competitive results, but it cannot meet fine-grained memory requirements. Our simplest myQASR calibrated on female data, myQASR (F), improves accuracy and can meet any desired target model size. Two observations support the usefulness of our sensitivity method: myQASR (F) shows significant benefits compared to myQASR (M), i.e., quantizing the model according to male data; and myQASR (F) outperforms its shuffled (i.e., bit-depths shuffled) or reversed (i.e., bit-depths reversed) versions by a large margin. Cosine-based calibration brings large benefits, reducing the gap from the FP model. Nonetheless, calibration on female data still outperforms calibration on male data.
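A quick back-of-the-envelope calculation shows why uniform quantization only reaches a few discrete model sizes. The sketch assumes that the 360MB original model (Figure 3 caption) stores its weights as 32-bit floats and that every weight is quantized to the same bit depth; both are simplifying assumptions for illustration, and the function names are hypothetical.

```python
ORIGINAL_MB = 360.0  # original model size, from the Figure 3 caption
FP_BITS = 32         # assumption: original weights are 32-bit floats


def uniform_size_mb(bits, original_mb=ORIGINAL_MB, fp_bits=FP_BITS):
    """Approximate size when every weight is stored with `bits` bits."""
    return original_mb * bits / fp_bits


def average_bits_for_budget(budget_mb, original_mb=ORIGINAL_MB, fp_bits=FP_BITS):
    """Average bit depth that a mixed-precision setup needs to hit `budget_mb`."""
    return budget_mb * fp_bits / original_mb
```

Under these assumptions, uniform 8-, 7-, and 6-bit quantization give 90MB, 78.75MB, and 67.5MB, and 5 bits already falls below the 60MB end of the range. A 75MB target would instead need an average of about 6.67 bits per weight, which is only reachable by mixing bit depths across layers.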

Main Results on Language are shown in Figure 4. We take the pre-trained multi-lingual Whisper-L model and calibrate bit-depths and activation ranges using just 32 samples of unlabeled data. Each language label represents a tune and test split, and we show that calibrating bit depths and activations for the same language leads to improved results. Although we obtain better results on the same language used for calibration (on-diagonal results), the resulting model still achieves competitive results on other languages (off-diagonal results), so it remains usable on those languages as well. In the worst case (i.e., Russian), our method is outperformed by calibration on certain other languages; however, it still shows a relative gain of 0.9% compared to the average of other-language results. In the best case (i.e., Catalan), our method outperforms the average of other languages by 10.9% relative gain. On average, our same-language myQASR yields 66.2% better results than standard uniform quantization with no calibration (12.5% vs. 36.9% WER), and 4.2% better results than other-language quantization (13.0% WER).

Figure 3. WER of Wav2Vec2-Base on LibriSpeech-Female. Original model size is 360MB.

Figure 4. WER on FLEURS with myQASR-Whisper-Large.

Main Results on Speaker are shown in Figure 5. We partition GSC by speaker ID and evaluate each ID with calibration data from different speakers. We show that the best performance is achieved when sensitivity and calibration analysis is performed on the same speaker. For example, in the best case (i.e., speaker #7), we achieve 100% ACC when compression is personalized for that speaker, compared with 40% ACC when personalized for another speaker from the same dataset, even though the keywords are the same. On average, our same-speaker myQASR yields 17.5% higher accuracy than standard uniform quantization with no calibration (92.3% vs. 78.6% ACC), and 19.6% higher accuracy than other-speaker quantization (77.2% ACC).

Figure 5. Accuracy on GSC-V2 with myQASR-Wav2Vec2-Large-Conformer.

Several ablation studies are available in our full paper, where we show that:

• The standard method of selecting min-max values by linear interpolation mapping the highest (lowest) activation value to the min (max) bit depth achieves significantly lower performance than our approach.

• Our sensitivity approach outperforms other distance-based (e.g., L1, L2, Frobenius, etc.) and reduction-based (e.g., average, max, etc.) methods.

• Calibration via Cosine or Hessian methods provides the best results at the price of increased calibration time.

• The number of unlabelled samples needed to personalize the ASR model is in the range of 10-100.
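To give a feel for why similarity-guided calibration costs more time than min-max, the sketch below searches over candidate clipping ranges and keeps the one whose quantized output has the highest cosine similarity to the original values. This is one plausible reading of cosine calibration, written under our own assumptions (a simple grid of shrink factors, a range that straddles zero); it is not the procedure from the paper, and all names are hypothetical.

```python
import math


def fake_quantize(values, lo, hi, num_bits):
    """Clip to [lo, hi], round to 2^num_bits uniform levels, map back to floats."""
    qmax = 2 ** num_bits - 1
    scale = (hi - lo) / qmax
    return [lo + round((min(max(v, lo), hi) - lo) / scale) * scale for v in values]


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm > 0 else 0.0


def cosine_calibrate(values, num_bits, num_candidates=20):
    """Grid search over shrunken ranges; the min-max range is the first candidate."""
    lo, hi = min(values), max(values)
    if hi <= lo:
        raise ValueError("need at least two distinct values")
    best_range = (lo, hi)
    best_sim = cosine_similarity(values, fake_quantize(values, lo, hi, num_bits))
    for k in range(1, num_candidates):
        factor = 1.0 - k / num_candidates  # shrink the range toward zero
        c_lo, c_hi = lo * factor, hi * factor
        sim = cosine_similarity(values, fake_quantize(values, c_lo, c_hi, num_bits))
        if sim > best_sim:
            best_range, best_sim = (c_lo, c_hi), sim
    return best_range, best_sim
```

Each candidate range requires a full quantize-and-compare pass over the calibration data, so the cost grows with the number of candidates, whereas min-max needs only a single pass to find the extremes.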

Conclusions

We proposed a new task: a personalized compression system in which only unlabelled samples from the target users are needed to tailor the performance of the compressed model to those users.

We proposed a new method: myQASR, a post-training backpropagation-free mixed-precision quantization scheme that estimates the sensitivity of each layer to quantization and uses it as a proxy to determine the bit depths to assign to each of them to meet any memory budget constraints.

myQASR features:

• Uniformity constraint to evaluate layer sensitivity,

• (optional) Hessian/Cosine guidance to set quantization scaling parameters,

• A few user-specific unlabelled samples to drive the quantization process,

• PTQ: personalizing the model performance with no fine-tuning.

Link to the paper

Our full paper is available at: https://arxiv.org/abs/2307.12659 and the codebase is open source at https://github.com/SamsungLabs/myQASR.

References

[1] E. Fish, U. Michieli and M. Ozay, "A Model for Every User and Budget: Label-Free and Personalized Mixed-Precision Quantization," in INTERSPEECH 2023.

[2] Z. Dong, Z. Yao, A. Gholami, M. W. Mahoney and K. Keutzer, "HAWQ: Hessian aware quantization of neural networks with mixed-precision," in ICCV 2019.

[3] Y. Cai, Z. Yao, Z. Dong, A. Gholami, M. W. Mahoney and K. Keutzer, "ZeroQ: A novel zero shot quantization framework," in CVPR 2020.

[4] A. Baevski, Y. Zhou, A. Mohamed and M. Auli, "wav2vec 2.0: A framework for self-supervised learning of speech representations," in NeurIPS 2020.

[5] V. Panayotov, G. Chen, D. Povey and S. Khudanpur, "LibriSpeech: An ASR corpus based on public domain audio books," in ICASSP 2015.

[6] A. Conneau, M. Ma, S. Khanuja, Y. Zhang, V. Axelrod, S. Dalmia, J. Riesa, C. Rivera and A. Bapna, "FLEURS: Few-shot learning evaluation of universal representations of speech," in SLT 2022.

[7] P. Warden, "Speech commands: A dataset for limited-vocabulary speech recognition," arXiv:1804.03209, 2018.

[8] A. Radford, J. W. Kim, T. Xu, G. Brockman, C. McLeavey and I. Sutskever, "Robust speech recognition via large-scale weak supervision," in ICML 2023.

[9] C. Wang, Y. Tang, X. Ma, A. Wu, S. Popuri, D. Okhonko and J. Pino, "Fairseq S2T: Fast speech-to-text modeling with fairseq," arXiv:2010.05171, 2020.